Original data and proprietary research are the single highest-leverage intervention available for earning AI citations at scale, because they produce extractable, attributable, unique statistics that AI engines cite at 55 to 120 percent higher rates than synthesized thought leadership on the same topic. In practical terms, a B2B content team that replaces one long-form opinion piece per month with one original research brief can move its AI citation rate from the 6 to 15 percent range that characterizes most branded content into the 38 to 65 percent range that characterizes data-first content, according to ZipTie.dev's analysis of citation patterns across ChatGPT, Perplexity, and Google AI Overviews.

That shift is larger than anything schema, internal linking, or even entity optimization can deliver on their own, and the data supporting it has grown unusually consistent over the last twelve months. Whitehat SEO's 2026 analysis found that content with original statistics is 3.7 times more likely to be cited than otherwise-comparable content without them. GenOptima's internal tracking across ChatGPT, Perplexity, and Copilot showed that content sections with three or more statistics per 300 words achieve 2.1 times higher citation frequency than sections with zero statistics, while Backlinko's broader analysis found that articles containing proprietary statistics earn 283 percent more backlinks than articles without them, which then feeds back into stronger AI citation rates through downstream authority signals.

Despite this, research from SurveyMonkey shows that only 39 percent of marketers published original research in the prior twelve months, even though 94 percent believed it could elevate brand authority in their industry, which is one of the widest belief-to-investment gaps currently visible anywhere in content strategy. This piece is the production-side companion to our guide on topical authority clusters: where that guide covers the content architecture that earns citations, this piece covers the single content input that earns them fastest once the architecture is in place.

Why Does Original Research Earn AI Citations So Aggressively?

AI engines do not cite random content, and the retrieval pipelines inside ChatGPT, Perplexity, Gemini, and Google AI Overviews all share a preference for sources that carry verifiable, attributable, specific claims. Three structural characteristics make original research uniquely suited to those preferences, and understanding each one in isolation makes the production workflow considerably easier to plan.

It creates citable claims that exist nowhere else

When AI engines synthesize an answer, they prefer to cite the primary source of a claim rather than a secondary source quoting it, because citing the primary source reduces hallucination risk and improves factual grounding. If you published "40 percent of B2B buyers start their vendor research inside ChatGPT" as part of your own survey, every downstream article referencing that statistic will cite your study as the origin, and AI engines extracting the stat from those downstream articles will still attribute the claim back to your research. This compounding attribution effect is consistent with Google's WIPO patent on passage ranking (WO2024064249A1), which explicitly names information density and specificity as factors in passage selection and describes a retrieval behavior that has become standard across every major AI platform.

It carries strong grounding signals

AI engines score sources partly on how verifiable their claims are, and a source that publishes methodology, sample size, collection dates, and analysis approach scores substantially higher on grounding than a source that asserts claims without support. This is why academic research consistently gets cited at higher rates than commercial content even when the commercial content ranks better on traditional SEO metrics, and it is why your methodology section is not an afterthought but a core citation-earning component of the research. Our generative engine optimization guide covers the broader grounding mechanics, and for original research specifically the methodology block does more work than any other part of the page.

It resets the freshness clock in a category

AI engines have a strong recency bias, and citations tend to decline sharply once content is more than 90 days old, which means that in any given category the most-cited statistics are typically the most recently published ones. Original research lets you reset the freshness clock on the key statistics that define your category, because the moment your study publishes, AI engines start citing it in place of older data even when that older data came from more authoritative domains. This dynamic is why repeat annual studies compound so aggressively over time, and it is the single most underweighted strategic reason to invest in original research rather than better synthesis of existing research.

What Actually Counts as Original Research?

Not every proprietary data asset needs a 20-page PDF and a six-figure research budget, and teams that assume it does consistently underinvest in research formats that would earn citations faster and cheaper than a flagship annual study. There are five distinct tiers of original research that AI engines cite at meaningfully different rates, and a well-designed research program typically combines several of them across the calendar year.

First-party usage data is the fastest and cheapest tier, and it covers anything you can derive from your own product, platform, tools, or client work, such as aggregated analytics patterns, conversion rate benchmarks by industry, average deal velocity across your customer base, or anonymized A/B test outcomes. Stripe's payment trend reports, HubSpot's marketing benchmarks, and Shopify's retail pulse updates all fall into this tier, and for any SaaS company the first-party data tier should be the default quarterly research format because the data already exists and only needs to be analyzed and packaged.

Survey-based research is the most commonly understood format and covers anything you collect through a structured questionnaire delivered to a defined audience, typically via panel providers like Centiment, Pollfish, or Dynata at roughly $15 to $50 per completed response, or via your own email list at significantly lower cost with 10 to 20 percent completion rates. Surveys work especially well for capturing beliefs, opinions, and self-reported behavior, and SurveyMonkey's analysis found that 66 percent of readers consider 1,000 or more respondents the minimum credible sample size for press-worthy research, which sets the practical scale target for anything intended for broad media pickup.
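The panel pricing above converts into a budget range with simple arithmetic. The sketch below is illustrative only: `panel_cost_range` is a hypothetical helper, and its default rates are the $15 to $50 per-response figures quoted above, not a vendor price list. Real quotes vary with audience and screening criteria.

```python
def panel_cost_range(responses: int, low_rate: int = 15, high_rate: int = 50) -> tuple[int, int]:
    """Return the (low, high) panel cost in dollars for a target number of completes.

    The default rates are the per-response figures quoted in this article;
    they are assumptions for illustration, not a provider's actual pricing.
    """
    if responses <= 0:
        raise ValueError("responses must be positive")
    return responses * low_rate, responses * high_rate


low, high = panel_cost_range(500)
print(f"500 completes: ${low:,} to ${high:,}")  # 500 completes: $7,500 to $25,000
```

Running the same helper for your own target sample size before scoping a study makes it obvious early whether a panel or your own email list is the realistic collection channel.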

Aggregated public-data analysis is the most underused tier and covers taking data that exists in public-facing but scattered form and combining it into a unique analytical view, such as parsing thousands of public filings, scraping category-level pricing, aggregating salary data from Glassdoor and Payscale, or analyzing citation patterns across public AI platform outputs. Orbit Media's marketing salary survey and countless SaaS pricing trend reports work this way, and this tier scales particularly well because the underlying data collection can often be automated once the initial methodology is set.

Expert interviews and qualitative research are the tier that produces the highest per-citation impact on thought leadership queries but the lowest raw citation volume, because interview-based research tends to surface directional insight rather than extractable statistics. Fifty to one hundred phone or video interviews with named experts in your category can produce content that AI engines cite heavily when users ask opinion or strategy questions, and this tier also builds relationships with industry voices who then amplify the resulting study.

Experimental and benchmark studies are the tier with the strongest technical credibility and cover anything where you run the same test methodology across a defined set of sources or conditions and report the comparative outcomes. Our own brand visibility audit methodology is an example of this tier, because it scores brands using a fixed 30-prompt protocol across four AI platforms and produces comparable citation data that can be reused as research content in its own right.

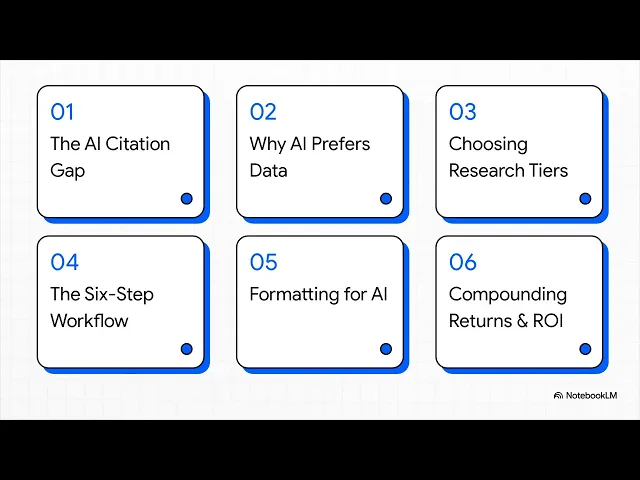

The 6-Step Workflow to Produce Research That AI Engines Cite

Every successful research program follows roughly the same production workflow, and working through the six steps in order prevents the most common execution failures that kill research ROI before the study even reaches publication.

Step 1: Pick a research question that creates a missing statistic

The most citation-efficient research questions are ones where the industry has a commonly asserted claim but no credible underlying data, and identifying these "missing statistic" gaps is the single highest-leverage part of the workflow. If your category repeats a stat that traces back to a ten-year-old study, or to a vendor blog that cites another vendor blog, or to no original source at all, you have found a research opportunity where a well-executed study will immediately become the authoritative citation for that claim. Ascend2 and CoSchedule have both built substantial citation franchises on this pattern, and the pattern replicates cleanly in any category where assertion outpaces evidence.

Step 2: Design methodology transparently before collection

Your methodology section should be drafted before you collect any data, because the discipline of writing out sample size, recruitment approach, screening criteria, collection dates, and known limitations forces the design decisions that produce credible numbers. Methodology transparency is non-negotiable for AI citation eligibility, because AI engines explicitly weight transparency signals when deciding which source to cite among competing claims, and a study without a methodology section will consistently lose citation share to a competing study with one even when the underlying data is stronger.

Step 3: Collect data at a scale that matches your intended use

Sample size minimums shift with your intended audience, and a niche B2B study targeting your existing email list can produce credible research at 500 responses, while a study you intend to pitch to mainstream media needs 1,000 or more to clear the credibility threshold that journalists and analysts expect. Collection channels should be documented transparently, because mixing an email list with panel respondents without disclosure is one of the fastest ways to get a study dismissed by more sophisticated readers, which then filters through to AI engines that pick up on methodology critiques.
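One way to see why the 500 and 1,000 thresholds behave differently is the standard margin-of-error approximation for a survey proportion. The sketch below assumes a simple random sample, the worst-case proportion of 0.5, and a 95 percent confidence level (z = 1.96). Panel and email-list samples rarely meet the random-sampling assumption exactly, so treat the output as a rough guide rather than a guarantee.

```python
import math


def margin_of_error(n: int, p: float = 0.5, z: float = 1.96) -> float:
    """Approximate margin of error for a proportion from a simple random sample.

    n: number of completed responses
    p: assumed proportion (0.5 is the most conservative choice)
    z: z-score for the confidence level (1.96 corresponds to roughly 95%)
    """
    if n <= 0:
        raise ValueError("n must be positive")
    return z * math.sqrt(p * (1 - p) / n)


for n in (500, 1000):
    print(f"n={n}: +/- {margin_of_error(n) * 100:.1f} points")
# n=500: +/- 4.4 points
# n=1000: +/- 3.1 points
```

Publishing the margin of error alongside the sample size in the methodology section gives readers a concrete way to judge how much weight a headline number can bear.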

Step 4: Analyze for the story, not for the headline

Raw data rarely tells a story in the form that makes a citation-worthy publication, and the analysis phase is where teams translate numerical findings into the specific statistical claims that will travel. Good analysis segments the data, tests multiple cross-tabulations, and identifies the two or three headline statistics that capture the most interesting surprise in the results, because AI engines preferentially cite statistics that are specific, counterintuitive, and numerically precise rather than statistics that confirm what everyone already believed. The rule of thumb is that any statistic readers can guess in advance is not a citation-worthy statistic.
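Segmenting and cross-tabulating can be done with very little tooling. The sketch below uses only the Python standard library and made-up illustrative responses, not real survey data. The field names `company_size` and `starts_in_ai` and the helper `crosstab_shares` are hypothetical.

```python
from collections import Counter, defaultdict


def crosstab_shares(rows, segment_key, answer_key):
    """Return {segment: {answer: share}}; shares within each segment sum to 1."""
    counts = defaultdict(Counter)
    for row in rows:
        counts[row[segment_key]][row[answer_key]] += 1
    return {
        segment: {answer: n / sum(answers.values()) for answer, n in answers.items()}
        for segment, answers in counts.items()
    }


# Made-up responses for illustration only.
rows = [
    {"company_size": "SMB", "starts_in_ai": "yes"},
    {"company_size": "SMB", "starts_in_ai": "no"},
    {"company_size": "SMB", "starts_in_ai": "yes"},
    {"company_size": "Enterprise", "starts_in_ai": "no"},
    {"company_size": "Enterprise", "starts_in_ai": "no"},
    {"company_size": "Enterprise", "starts_in_ai": "yes"},
    {"company_size": "Enterprise", "starts_in_ai": "no"},
]

shares = crosstab_shares(rows, "company_size", "starts_in_ai")
for segment, answers in shares.items():
    print(segment, {answer: f"{share:.0%}" for answer, share in answers.items()})
```

A gap between segments, like the one in this toy data, is the kind of specific and potentially surprising contrast worth checking at real sample sizes before promoting it to a headline statistic.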

Step 5: Format the published research for AI extraction

A published research brief should follow the same on-page patterns as any other citation-optimized content, which means definition-first paragraphs, question-format headings, extractable statistics in the first 100 words of each section, and tables for comparative data. Our AI-friendly schema markup playbook covers the schema layer, and for research specifically you should deploy Article, Dataset (if publishing the raw data), and FAQPage schemas in combination to maximize retrieval surface area. Data visualizations should be accompanied by descriptive alt text and paragraph-level interpretation in the body copy, because AI engines extract claims from prose rather than from embedded images.
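For the Dataset piece of that schema combination, the markup can be generated rather than hand-written. The sketch below builds a schema.org Dataset object as JSON-LD using Python's standard `json` module. The helper name `dataset_jsonld`, the study name, the publisher, and the example.com URL are placeholders, and the property selection is one reasonable subset of what schema.org defines for Dataset, not a required or complete set.

```python
import json


def dataset_jsonld(name, description, url, date_published,
                   publisher, measurement_technique, temporal_coverage):
    """Build a schema.org Dataset description as a JSON-LD-ready dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Dataset",
        "name": name,
        "description": description,
        "url": url,
        "datePublished": date_published,
        "creator": {"@type": "Organization", "name": publisher},
        # Methodology details belong in the markup as well as in the body copy.
        "measurementTechnique": measurement_technique,
        "temporalCoverage": temporal_coverage,  # ISO 8601 interval
    }


# All values below are placeholders for illustration.
markup = dataset_jsonld(
    name="Example B2B Buyer Research Survey",
    description="Survey of B2B marketing leaders on AI-assisted vendor research.",
    url="https://example.com/research/b2b-buyer-survey",
    date_published="2026-04-15",
    publisher="Example Co",
    measurement_technique="Online survey, panel-recruited respondents",
    temporal_coverage="2026-03-01/2026-03-31",
)

# Paste the output into a <script type="application/ld+json"> tag on the page.
print(json.dumps(markup, indent=2))
```

Validate the generated markup with a structured data testing tool before deployment, since search engines apply their own required and recommended properties on top of the schema.org vocabulary.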

Step 6: Distribute into the citation sources your category actually uses

Publishing the research on your own domain is necessary but not sufficient, because AI engines build citation-worthiness partly from where else a research asset is referenced. The distribution workflow should include pitching the research to trade publications for editorial coverage, filing Wikipedia citations where the topic is notable enough, seeding the key statistics into relevant Reddit and industry community discussions through genuine participation, and repackaging the findings into YouTube or podcast content where category creators operate. Our B2C SaaS AI search strategy piece covers how the distribution mix shifts across B2B and B2C segments, because the right distribution surface for your research depends heavily on where your buyers actually research.

How to Format a Research Brief for Maximum Citation Pickup

A research brief has a narrow set of structural conventions that consistently outperform alternative formats in AI citation testing, and following the template tightens the content without reducing its credibility.

The first 100 words should state the single most important finding as a one-sentence statistic with its methodology anchor, such as "In a survey of 1,200 B2B marketing leaders conducted in March 2026, 47 percent reported that at least one-third of their inbound pipeline now originates from AI-assisted research sessions." This opening paragraph is the single most-cited part of any research brief, so it should contain the headline number, the population, the sample size, and the collection timeframe in a form an AI engine can extract in one chunk.

The body should organize findings around four to six thematic sections, each anchored by its own question-format heading and its own definition-first opening sentence that states the section's key finding. Each section should include one supporting statistic, one data visualization, and one interpretive paragraph that explains what the finding means for the reader's decisions, because the combination of these three elements gives AI engines multiple extractable passages per section.

The methodology section belongs near the end of the brief but should be comprehensive enough to stand as its own citable asset, including sample composition, recruitment sources, screening criteria, collection dates, weighting approach if applied, and known limitations stated honestly. Teams that hide methodology in a footer or behind a download form consistently earn fewer citations than teams that publish full methodology in on-page HTML, because the methodology itself is a retrieval target for AI engines assessing source credibility.

Build a Compounding Research Program, Not One-Off Studies

Individual research reports earn citation spikes that decay over roughly 90 to 180 days, while compounding research programs earn citation trajectories that climb year over year, and the difference is almost entirely a function of whether the same research is repeated with a consistent methodology on a predictable cadence.

An annual State of Category study is the simplest compounding format, because it generates year-over-year trend data that no one-off study can match, and the combination of current-year statistics plus trend lines across multiple years becomes increasingly citable as the dataset deepens. Content Marketing Institute's annual B2B benchmarks, HubSpot's annual State of Marketing, and Edelman's Trust Barometer all work this way, and each has become the default citation source for its category partly because the trend data is impossible to replicate without running the research for the same number of years.

Quarterly updates on a smaller dataset complement the annual flagship and cover the 90-day freshness gap that AI engines increasingly penalize, because a category where the annual study is eight months old is a category where a fresh quarterly update will capture most of the citation share for statistics that feel time-sensitive. The practical cadence that works for most B2B teams is one annual flagship plus three quarterly updates on a subset of the same methodology, which produces four research publications per year at roughly the cost of one flagship study plus one additional analyst's time.

How to Measure the Citation Return on Research Investment

Measurement for research investment looks different from measurement for general content, because the value of a research asset should be tracked at the statistic level rather than at the page level. Each headline statistic from a study should be monitored as its own citation entity across ChatGPT, Perplexity, Gemini, and Google AI Overviews, and our step-by-step guide to tracking AI and LLM traffic separately in GA4 covers the referral attribution side of the measurement stack.

At the citation level, track four metrics for each major statistic published. Citation frequency is the count of AI responses that include the statistic over a rolling 30-day window. Citation attribution rate is the percentage of those responses that correctly attribute the statistic to your brand rather than citing a downstream source that picked it up. Backlink velocity is the rate at which other domains reference the statistic over the first 90 days post-publication. Search volume on queries that contain the statistic's core claim is a lagging indicator that captures downstream awareness beyond AI citations alone. Together these four metrics tell you whether each research investment is compounding, plateauing, or decaying, and they give you the input data to decide which research topics deserve an annual repeat versus a one-time publication.
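The first two metrics are easy to compute once you keep a log of AI responses that mention a tracked statistic. The sketch below assumes such a log already exists. The `Observation` record, its field names, and the sample entries are hypothetical, and how you collect the observations (manual prompt testing or a monitoring tool) is outside its scope.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Observation:
    """One AI response that included the tracked statistic (hypothetical record)."""
    seen_on: date
    platform: str
    cited_domain: str  # domain the response credited for the statistic


def citation_metrics(observations, own_domain, as_of, window_days=30):
    """Return (citation frequency, attribution rate) for the window ending on as_of."""
    start = as_of - timedelta(days=window_days - 1)
    in_window = [o for o in observations if start <= o.seen_on <= as_of]
    frequency = len(in_window)
    attributed = sum(1 for o in in_window if o.cited_domain == own_domain)
    rate = attributed / frequency if frequency else 0.0
    return frequency, rate


# Made-up log entries for illustration only.
log = [
    Observation(date(2026, 3, 1), "ChatGPT", "example.com"),       # outside the window
    Observation(date(2026, 4, 2), "Perplexity", "example.com"),
    Observation(date(2026, 4, 15), "Gemini", "tradepub.example"),  # credited downstream
    Observation(date(2026, 4, 29), "ChatGPT", "example.com"),
]

frequency, rate = citation_metrics(log, "example.com", as_of=date(2026, 4, 30))
print(f"citation frequency: {frequency}, attribution rate: {rate:.0%}")
```

Recomputing the pair for each headline statistic on a fixed schedule produces the trend line needed to judge whether a study is compounding, plateauing, or decaying.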

Make Research the Core of Your Content Engine, Not a Side Project

Most content programs treat original research as an annual splurge: the team runs one flagship study a year and then returns to synthesis-heavy blog posts for the remaining 11 months, and this pattern produces exactly the results you would expect from that investment curve. The teams earning AI citations at genuinely compounding rates in 2026 have rebuilt their content calendars around a continuous research cadence, where every quarter produces at least one original data asset, every month produces at least one derivative piece built from that quarter's data, and every piece of synthesis content cites at least one proprietary statistic from the team's own dataset rather than recycling external stats.

The economics of this rebalance work in favor of almost any category, because the citation premium on original research is large enough that a single well-executed study typically earns more citations than 10 or 15 synthesis pieces published in the same window, and those citations then compound into domain-level authority signals that lift every future piece of content on the same site. If you want to diagnose where your team's current citation profile sits and which research topics would earn the most immediate lift, start with a brand visibility audit across ChatGPT, Perplexity, and Gemini to identify the category questions your brand is currently invisible on, because those gaps are almost always the research topics where a single study will produce the fastest citation return.

Frequently Asked Questions

How much does it cost to produce research that earns AI citations at scale?

Survey-based research using panel providers typically costs between $7,500 and $25,000 for a study with 500 to 1,000 responses, while first-party data research can be produced at near-zero marginal cost because the underlying data already exists inside your analytics or product systems. A well-resourced research program for a B2B SaaS company typically runs $30,000 to $80,000 per year when combining one annual flagship study with three quarterly updates, and the ROI tends to clear that investment within 6 to 12 months as citations compound.

How large does a sample need to be to earn credible AI citations?

The practical minimum is roughly 500 respondents for niche B2B research targeting a specific decision-maker profile, and roughly 1,000 respondents for consumer research or for research intended for mainstream media pickup. AI engines do not explicitly weight sample size in their retrieval decisions, but they do weight downstream citation patterns, and downstream publishers apply their own sample-size credibility thresholds that filter through to which studies ultimately earn attribution.

Is it possible to earn AI citations without running surveys or conducting primary research?

Yes, through aggregated public-data analysis, which involves combining existing datasets in ways that produce genuinely new analytical views even when the underlying data is not proprietary. Examples include scraping public pricing pages to build category-level pricing benchmarks, aggregating salary data across multiple sources to produce a more granular view than any single source offers, or running experimental tests across a defined set of public websites. Aggregated-data research earns citations at rates comparable to survey research when the methodology and analytical framing are genuinely novel.

How long does it take for a published research asset to start earning AI citations?

Initial citations typically appear within 2 to 4 weeks of publication when the research is distributed through trade publications, Reddit communities, and industry newsletters in parallel to on-site publication, and full citation momentum usually develops over 60 to 90 days as downstream references accumulate. Research that is only published on the researcher's own domain without active distribution often takes 3 to 6 months to reach the same citation levels, which is why the distribution step is not optional.

Should proprietary research live behind a lead-capture form or on an open-access page?

On-page, open-access HTML consistently earns more AI citations than gated PDF downloads, because AI engines cannot index content behind form walls and most of the downstream publishers who would cite your research will not bother to fill out a form to access it. The effective pattern is to publish the full research on an open-access page with the raw PDF available as an optional download, which captures both the citation-earning benefit of open access and the lead-capture benefit for readers who want the polished asset.

What is the single most common mistake teams make when producing research?

The most common mistake is leading with a pre-decided narrative instead of a hypothesis, which produces research that confirms what the team already believed and therefore contains no surprising statistics worth citing. AI engines consistently cite counterintuitive, specific, and precisely numbered findings over findings that restate conventional wisdom, so research that starts from "what is the actual data here" tends to earn far more citations than research that starts from "how do we produce numbers that support our positioning."