
The GEO tools market went from 7 products to 150+ in 10 months. Over $200 million in VC funding poured into AI visibility tracking. SparkToro estimates $100 million per year is already being spent on AI search analytics.

Every tool does the same thing: show you a dashboard.

Here is the problem. Dashboards do not fix anything. You open your AI visibility platform, see that your brand is invisible on 200 prompts where competitors appear 60-70% of the time, and then what? You close the dashboard. You add it to the backlog. Nothing changes.

This is not a monitoring problem. It is an execution problem. And it is the reason most brands spending money on AI visibility tools are not seeing proportional results.

The industry frames this as a single gap between monitoring and execution. It is actually two gaps that compound: monitoring data without prioritized action, and prioritized action without scalable execution. Closing only one half does not produce outcomes. You need a system that closes both.

This guide breaks down why the monitoring-only approach fails, what execution actually looks like when it works, and how to build the operational loop that turns AI visibility data into measurable results. For the full framework on optimizing content for AI citation, see our GEO guide.

The AI visibility monitoring market is saturated and still not solving the problem

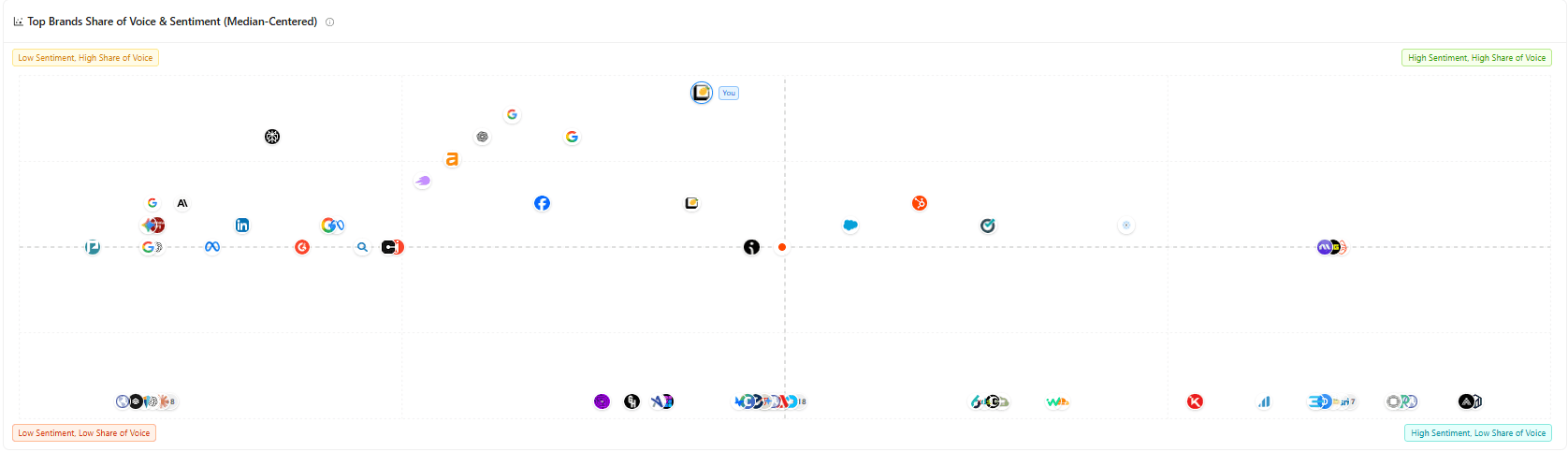

There are now dozens of tools that track your brand across ChatGPT, Perplexity, Gemini, Google AI Overviews, and Claude. The dashboards look polished. The data visualizations are impressive. The competitive benchmarking charts tell you exactly where you stand relative to every competitor in your category.

And the results do not follow.

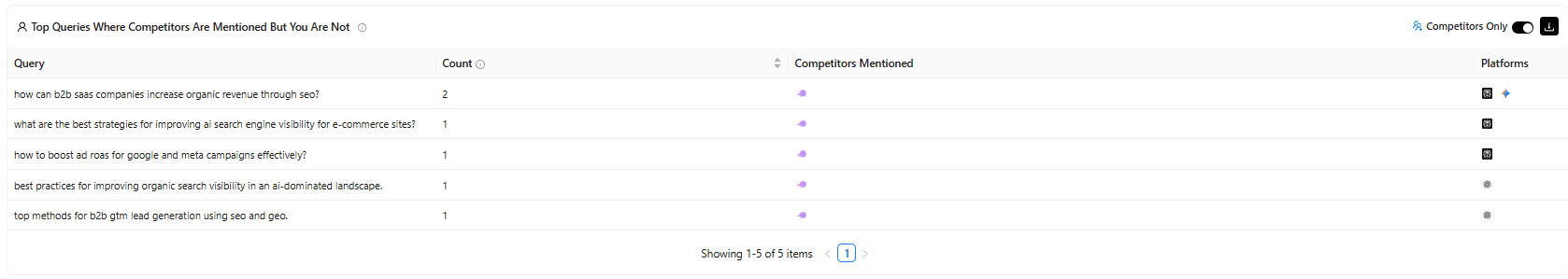

The core issue is structural. Monitoring tells you where you are invisible, but it does not tell you which gaps matter most for your business or how to close them. A dashboard showing you are absent from 200 category-relevant prompts gives you awareness. It does not give you a plan.

The data itself adds another layer of difficulty. SparkToro and Gumshoe.ai ran the most comprehensive public study on AI recommendation consistency in January 2026: 600 volunteers, 2,961 prompts, across ChatGPT, Claude, and Google AI. The finding that should make every monitoring-tool buyer pause: there is less than a 1% chance that ChatGPT returns the same brand list twice for the same prompt (SparkToro, January 2026). Ranking position in AI responses is essentially random. Only visibility rate (how often you appear across many runs) produces a stable, trackable signal.
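To make the distinction concrete, here is a minimal sketch of how visibility rate could be computed from repeated runs of the same prompt. The brand names and run data are hypothetical, and the function name `visibility_rates` is ours for illustration, not part of any tool's API.

```python
from collections import Counter

def visibility_rates(runs):
    """Return the share of runs in which each brand appears at least once.

    `runs` is a list of brand lists, one list per AI response to the same
    prompt. Position inside a list is deliberately ignored.
    """
    total = len(runs)
    if total == 0:
        return {}
    counts = Counter()
    for brands in runs:
        counts.update(set(brands))  # count each brand once per run
    return {brand: counts[brand] / total for brand in counts}

# Hypothetical data: four runs of one prompt, with a different order each time
runs = [
    ["Acme", "Globex", "Initech"],
    ["Globex", "Acme"],
    ["Initech", "Globex", "Umbrella"],
    ["Globex", "Acme", "Initech"],
]
rates = visibility_rates(runs)
```

In this made-up sample the ordering changes on every run, yet the share of runs containing each brand is a number you can track over time.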

When you combine noisy data with no execution pathway, you get an expensive dashboard and no outcomes. This is where most brands are stuck. For our full analysis of the SparkToro study, see our research breakdown.

Why dashboards without prioritization waste your team's time

The typical AI visibility report presents 200 prompts where your brand does not appear. A marketing team cannot act on 200 gaps simultaneously. Without a framework that ranks gaps by business impact, teams either pick randomly (wasting effort on low-value prompts) or get paralyzed and do nothing.

This is the first half of the gap: monitoring data without prioritized action.

The framework that works scores every visibility gap across four dimensions:

| Dimension | What it measures | Why it matters |
|---|---|---|
| Revenue potential | Does this prompt category drive purchasing decisions? | A prompt like "best CRM for agencies" has direct revenue impact. "What is a CRM" does not. |
| Competitive density | How many competitors appear and how often? | If 4 competitors appear in 70%+ of responses and you appear in 0%, this gap is actively losing you deals. |
| Fix feasibility | Can you create content for this in a week, or does it need a 3-month PR campaign? | Quick wins build momentum. Long-term plays need different resourcing. |
| Current asset proximity | Do you have existing content close to being cited, or must you create from scratch? | A page ranking on page 2 for a related keyword is a fast optimization target. A topic with no existing content requires full production. |

When you score gaps across all four dimensions, the answer becomes obvious: "These 5 prompts will have the most business impact if you close them this month." Not "here are 200 gaps sorted alphabetically."
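The four-dimension scoring can be sketched in a few lines. The 1-5 rating scale, the weights, and the example ratings below are all assumptions made for illustration. The framework itself does not prescribe specific weights, so treat them as placeholders to tune against your own revenue data.

```python
from dataclasses import dataclass

@dataclass
class Gap:
    prompt: str
    revenue_potential: int    # 1 (low) to 5 (high), assumed scale
    competitive_density: int  # 1-5
    fix_feasibility: int      # 1-5, higher means easier to fix
    asset_proximity: int      # 1-5, higher means existing content is closer

# Hypothetical integer weights; adjust to your business
WEIGHTS = {
    "revenue_potential": 4,
    "competitive_density": 3,
    "fix_feasibility": 2,
    "asset_proximity": 1,
}

def score(gap, weights=WEIGHTS):
    """Weighted sum of the four dimension ratings."""
    return sum(getattr(gap, name) * weight for name, weight in weights.items())

def top_gaps(gaps, n=5):
    """Return the n highest-scoring gaps, best first."""
    return sorted(gaps, key=score, reverse=True)[:n]

# Made-up ratings for three prompts
gaps = [
    Gap("what is a CRM", 1, 2, 5, 4),
    Gap("best CRM for agencies", 5, 4, 4, 3),
    Gap("CRM pricing comparison", 4, 5, 2, 2),
]
shortlist = [g.prompt for g in top_gaps(gaps, n=2)]
```

With these placeholder numbers, the purchase-intent prompts outrank the informational one even though the informational prompt is the easiest to fix.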

Most monitoring tools do not provide this scoring because they do not connect to your revenue data. They show visibility metrics in isolation. The brands seeing results are the ones connecting monitoring data to business outcomes before deciding where to invest execution resources.

The five execution levers that actually change AI visibility

Knowing your top 5 gaps solves the prioritization problem. Closing those gaps is the execution problem. Here are the five specific levers that move AI visibility, each backed by data.

1. Build content clusters targeting the prompts where you are invisible

ChatGPT uses Reciprocal Rank Fusion (RRF) when retrieving live information via RAG. Pages that appear repeatedly across multiple related search results get prioritized over pages that rank well for a single query. Content clusters naturally produce this repeated appearance. If you rank for 15 variations of questions around your core topic, you show up in more of ChatGPT's fan-out sub-queries, and RRF compounds that visibility.

This is the highest-leverage execution move for most brands because it simultaneously improves traditional SEO and AI citation. For how to build clusters, see our topic clusters guide.
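For readers who want to see why repeated appearance compounds, here is a minimal sketch of the standard Reciprocal Rank Fusion formula, where each result list contributes 1 / (k + rank) to a page's score. The constant k = 60 is the value commonly used in the RRF literature. How any AI product configures its retrieval is not something this sketch claims to reproduce, and the URLs are hypothetical.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked result lists into one ranking.

    Each page scores the sum of 1 / (k + rank) over every list it
    appears in, with rank starting at 1.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, url in enumerate(ranking, start=1):
            scores[url] += 1.0 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)

# Hypothetical result lists for three related sub-queries
rankings = [
    ["solo.example/top", "b.example/1", "hub.example/guide"],
    ["c.example/1", "d.example/1", "hub.example/guide"],
    ["e.example/1", "f.example/1", "hub.example/guide"],
]
fused = reciprocal_rank_fusion(rankings)
```

In this toy input, the page that sits third in all three lists scores 3/63, which beats the page that ranks first in only one list at 1/61. That is the mechanism behind the cluster advantage.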

2. Earn coverage on the publications AI trusts most

Ahrefs found that 65.3% of ChatGPT's most-cited pages come from domains with a Domain Rating above 80. Muck Rack's analysis of over 1 million AI prompts found 85% of non-paid AI citations come from earned media, not owned content. The Fullintel-UConn study found 47% of all AI citations come from journalistic sources.

Your own blog helps with traditional SEO. For AI visibility, editorial coverage in high-authority publications is the stronger lever. Product reviews, expert roundups, original research citations, and data-driven stories in publications AI engines already trust move visibility faster than any amount of on-site optimization.

Ahrefs also found that branded web mentions correlated 0.664 with AI visibility, significantly higher than branded anchors (0.527), domain rating (0.326), or backlinks (0.218). Being talked about matters more than being linked to.

3. Refresh your most important content every 30 days

Content freshness directly affects AI citation rates. Practitioners tracking AI visibility report that content updated within 30 days receives 3.2x more AI citations than stale content. AI models prioritize recent, accurate information when constructing answers.

Add visible "last updated" dates, refresh statistics, include new examples, and ensure every page reflects current information. This is not a one-time optimization; it is an ongoing operational discipline. For how content age affects both traditional and AI rankings, see our content decay guide.
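A refresh discipline needs a recurring list of what is due. The sketch below flags pages whose last update is more than 30 days old. The page paths and dates are hypothetical, and in practice the last-updated dates would come from your CMS or sitemap rather than a hard-coded dictionary.

```python
from datetime import date, timedelta

def stale_pages(pages, today, max_age_days=30):
    """Return URLs last updated more than max_age_days ago, oldest first.

    `pages` maps a URL path to the date it was last updated.
    """
    cutoff = today - timedelta(days=max_age_days)
    overdue = [url for url, updated in pages.items() if updated < cutoff]
    return sorted(overdue, key=lambda url: pages[url])

# Hypothetical inventory of priority pages
pages = {
    "/pricing": date(2026, 2, 20),
    "/blog/geo-guide": date(2025, 11, 3),
    "/blog/topic-clusters": date(2026, 1, 10),
}
due = stale_pages(pages, today=date(2026, 3, 1))
```

Running a check like this on a schedule turns the refresh cycle into a queue your team can work through, oldest page first.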

4. Structure content for AI extraction

AI systems extract specific passages from your content to construct their answers. The Princeton/Georgia Tech GEO research paper found that content with statistics, citations, and structured evidence boosts AI visibility by up to 40% (Aggarwal et al., ACM SIGKDD 2024). FAQ schema implementations deliver a 3.7x citation lift in practice.

Write direct 30-40 word answers immediately after each H2 heading. Add FAQ schema markup. Include specific numbers, named entities, and real examples. Make every section standalone so it can be extracted and cited independently. The goal is to make your content the easiest possible source for an AI to quote accurately.
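As an illustration of the FAQ schema step, the sketch below builds schema.org `FAQPage` JSON-LD from question and answer pairs. The helper name `faq_jsonld` is ours, and the sample question is taken from this article's own FAQ. The output would go inside a `<script type="application/ld+json">` tag on the page.

```python
import json

def faq_jsonld(qa_pairs):
    """Build schema.org FAQPage markup from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }
    return json.dumps(data, indent=2)

markup = faq_jsonld([
    (
        "How do you prioritize which AI visibility gaps to fix first?",
        "Score every gap across four dimensions: revenue potential, "
        "competitive density, fix feasibility, and current asset proximity.",
    ),
])
```

Keeping the answer text identical to the visible on-page answer is a sensible default, so the markup and the content an AI extracts do not drift apart.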

5. Build entity authority through consistent brand presence

The Authoritas 2026 study tracking 143 recognized digital marketing experts found that the top 10 captured 59.5% of all AI citability by February 2026, up from 30.9% just two months earlier. Concentration is accelerating. The brands with consistent presence across the sources AI engines trust (editorial publications, review platforms, industry forums, structured data) compound their advantage over time.

Entity authority is not a quick fix. It is the long-term moat. Brands that invest in it now will be difficult for competitors to displace as AI search continues to grow.

How to structure a monitoring-to-execution system that produces measurable results

The five levers are the "what." The system is the "how." Most teams fail not because they do not know what to do but because they have no operational loop connecting monitoring data to execution decisions to measured outcomes.

The system has three layers:

Monitoring layer: Track visibility rate across all major AI platforms. Segment by prompt intent (awareness, consideration, decision). Benchmark against 3-5 direct competitors. Use a tool that connects visibility data to revenue: Passionfruit Labs connects directly to GA4 so you can see which AI channels drive sessions, conversions, and dollars. If you already have Ahrefs or Semrush, their AI tracking features provide solid visibility data but without the revenue connection.

Prioritization layer: Score every visibility gap using the four-dimension framework (revenue potential, competitive density, fix feasibility, asset proximity). The top 5-10 gaps get resourced each month. Everything else waits. This is where most teams get stuck because scoring requires combining AI monitoring data with business data that lives in GA4, CRM, or revenue systems. Tools that connect these data sources save weeks of manual analysis.

Execution layer: For each prioritized gap, assign the right lever. A gap where you have existing content ranking on page 2 for a related keyword gets content optimization (lever 1 or 3). A gap where you have no content and need authority gets earned media (lever 2). A gap where you have content but it is not structured for AI extraction gets technical optimization (lever 4). Set execution timelines. Measure results in the monitoring layer 30-60 days later. Feed results back into prioritization.

The loop is: Monitor > Prioritize > Execute > Measure > Reprioritize. Every 30-60 days, you learn which levers worked for which types of gaps, and the next cycle gets more efficient.
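The lever-assignment rules in the execution layer can be written down as a small decision function. This is a sketch under assumptions: the three boolean inputs, the order in which the rules are checked, and the fallback for gaps that match none of them are our choices for illustration, not a prescribed algorithm.

```python
def assign_lever(has_content, ranks_page_two, structured_for_ai):
    """Map a prioritized gap to an execution lever.

    Rule order is an assumption: missing content is checked first,
    then near-ranking content, then extraction structure.
    """
    if not has_content:
        return "earned media (lever 2)"
    if ranks_page_two:
        return "content optimization (lever 1 or 3)"
    if not structured_for_ai:
        return "technical optimization (lever 4)"
    # Assumed fallback: nothing obvious to fix, so re-measure next cycle
    return "re-measure next cycle"

# Hypothetical gaps described by the three flags
plan = {
    "best CRM for agencies": assign_lever(True, True, False),
    "CRM for nonprofits": assign_lever(False, False, False),
    "CRM onboarding checklist": assign_lever(True, False, False),
}
```

Recording the lever next to each gap also gives the measurement step something to compare against 30-60 days later, which is how the loop learns which levers work for which gap types.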

What AI agents can and cannot do in this system

A Writesonic article makes a fair point: marketers using Claude Code with the right skill packs are running SEO audits in 90 minutes that used to take 8 hours. AI agents are genuinely powerful for individual execution tasks. A solo marketer can rewrite meta descriptions, generate FAQ schema, optimize page structure for AI citation, and produce first-draft content faster than ever.

But there is a difference between a freelancer and a department.

AI agents today cannot reliably run across 500 pages in parallel with error recovery. They cannot coordinate a team reviewing and approving content before it goes live. They cannot maintain state across weeks of work (what was approved, what was rejected, what was published, what improved). They cannot connect visibility improvements to revenue attribution. And they cannot make the judgment calls about which gaps matter most for a specific business, because that requires context about your market, your competitors, your revenue model, and your existing content assets.

The best system uses agents for execution speed and humans for strategic direction. Agents rewrite the page. Humans decide which page matters most. Agents generate FAQ schema for 50 pages overnight. Humans decide which 50 pages are worth the investment based on revenue data.

For how to use Claude effectively for marketing execution, see our Claude for marketing guide and MCP connectors guide.

How Passionfruit closes both halves of the gap

The gap has two halves. Most companies in this space close one.

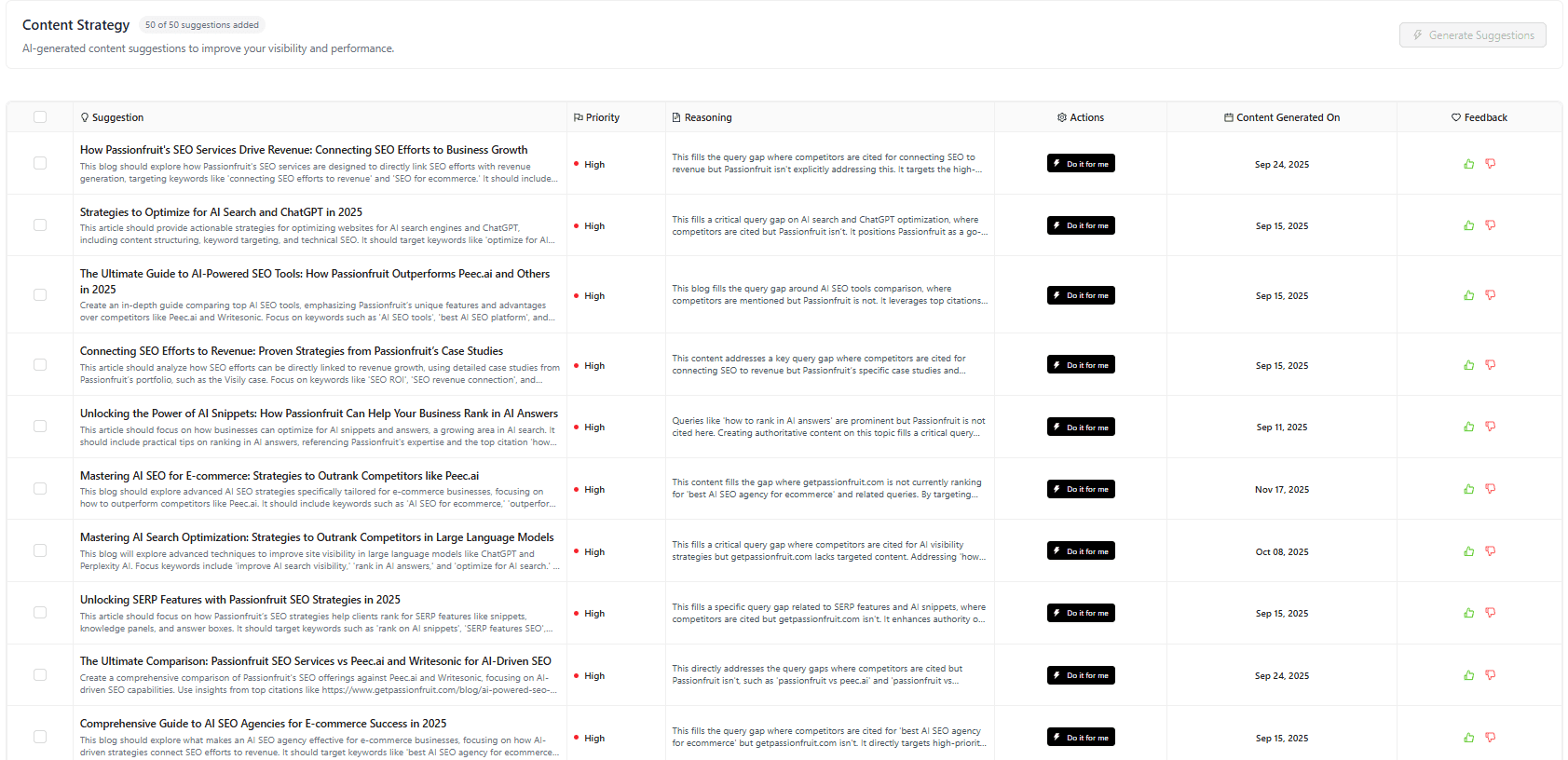

Tool vendors close the monitoring-to-prioritization half. They track mentions, surface gaps, and some generate recommendations. But the recommendations sit in a dashboard until someone on your team has time to act on them. For most marketing teams, that time never comes.

Agencies and managed services close the prioritization-to-execution half. They create content, earn media placements, and implement technical optimizations. But without integrated monitoring, they cannot prove what worked or continuously reprioritize based on results.

Passionfruit Labs (the product) closes the first half. It tracks visibility across up to 9 AI platforms (ChatGPT, Perplexity, Gemini, Claude, Google AI Overviews, AI Mode, Grok, Meta AI, Copilot, and DeepSeek on enterprise plans), connects to GA4 for revenue attribution, surfaces the specific prompts where you are invisible alongside competitors, and generates prioritized action plans sorted by business impact rather than alphabetically. Plans start at $19/month with a 7-day free trial.

Passionfruit's GEO services team closes the second half. They create citation-optimized content, build earned media coverage on high-authority publications, implement schema and structured data, run 30-day content refresh cycles, and measure results through the same monitoring platform. The monitoring data proves what worked. The services team acts on what the data reveals. The results feed back into the next monitoring cycle.

Neither monitoring nor execution alone produces results. The system that connects both is what changes visibility. See our case studies for measured outcomes.

Final thoughts

The GEO market has a $200 million monitoring infrastructure and a massive execution gap. The tools got funded. The dashboards got built. The visibility data got tracked. And most brands are still invisible in the AI answers that drive purchasing decisions.

The fix is not a better dashboard. It is a system that connects monitoring data to prioritized action, action to measurable execution, and execution results back to the next monitoring cycle. The brands closing both halves of that loop are the ones compounding AI visibility while everyone else refreshes their dashboard.

Start by auditing your current visibility with Passionfruit Labs (7-day free trial). If the data reveals gaps you need help closing, talk to Passionfruit's GEO team about building the execution system that turns those gaps into results.

Frequently asked questions

What is the difference between AI visibility monitoring and AI visibility execution?

Monitoring tracks whether your brand appears in AI-generated answers across platforms like ChatGPT, Perplexity, and Google AI Overviews. It tells you where you are visible, where you are invisible, and how you compare to competitors. Execution is the work of closing those visibility gaps: creating content, earning media coverage, implementing structured data, refreshing existing pages, and building entity authority. Monitoring without execution produces dashboards. Execution without monitoring produces untracked effort. You need both connected in a feedback loop.

Why do AI visibility dashboards alone not improve results?

Dashboards show you 200 prompts where you do not appear. Without a prioritization framework that scores each gap by revenue potential, competitive density, fix feasibility, and asset proximity, your team cannot determine which 5 gaps to close first. Most tools present gaps without connecting them to business impact, which means teams either act randomly or do nothing. The brands seeing results are the ones that score gaps by business value before committing execution resources.

What tools connect AI monitoring to execution?

Most AI visibility tools focus on monitoring only. Passionfruit Labs connects monitoring to revenue attribution via GA4 and generates prioritized action plans. Writesonic combines monitoring with content generation through its Action Center. AirOps connects visibility signals to content operations at scale. Ahrefs Brand Radar and Semrush AI Toolkit provide strong monitoring integrated with traditional SEO tools but require separate execution workflows. The key evaluation question for any tool: does it help you fix the problem or just report on it?

How long does it take to improve AI visibility through execution?

Results vary by lever. Content optimization on existing pages that already rank for related keywords can show visibility improvements within 2-4 weeks as AI models re-retrieve updated content via RAG. Earned media on high-authority publications can generate citations within days to weeks of publication. Content freshness improvements (30-day refresh cycles) compound over 60-90 days. Entity authority building is a longer-term play that typically shows measurable concentration improvements over 3-6 months. Most teams see initial movement within 30-60 days of starting a prioritized execution program.

Can AI agents replace human execution for GEO?

For individual tasks, yes. AI agents excel at rewriting meta descriptions, generating FAQ schema, restructuring pages for AI extraction, and producing first-draft content. For system-level execution, no. Agents cannot prioritize which gaps matter most for your specific business, coordinate multi-person review workflows, maintain state across weeks of campaign execution, or make judgment calls about editorial quality and brand voice. The best approach uses agents for execution speed and humans for strategic direction and quality control.

How do you prioritize which AI visibility gaps to fix first?

Score every gap across four dimensions: revenue potential (does this prompt drive purchasing decisions?), competitive density (how many competitors appear and how often?), fix feasibility (quick content optimization or multi-month PR campaign?), and current asset proximity (do you have content close to being cited or must you start from scratch?). The gaps scoring highest across all four get resourced first. This scoring requires connecting AI monitoring data with business data from GA4 or CRM, which is why tools with revenue attribution (like Passionfruit Labs) produce better prioritization than monitoring-only platforms.

What ROI should marketing teams expect from AI visibility optimization?

Agency data suggests GEO services return $3.71 for every dollar spent when paired with proper monitoring and execution frameworks. The ROI compounds: AI visibility concentration is accelerating, with the Authoritas 2026 study showing the top 10 entities in a category captured 59.5% of all AI citability by February 2026, up from 30.9% two months earlier. Brands that establish visibility early compound an advantage that becomes increasingly expensive for later entrants to overcome. The key is connecting visibility improvements to revenue attribution so you can measure the return, not just the mention count.