Microsoft's Bing team published a framework on May 6, 2026 explaining how indexing for AI-generated answers differs from indexing for traditional search. The post identifies five measurement areas where grounding diverges from ranking, names "abstention" as a design choice for AI retrieval, and describes iterative retrieval as a separate engineering problem. Co-authored by Krishna Madhavan, Knut Risvik, and Meenaz Merchant from Microsoft AI, it is the most technically detailed public statement Microsoft has made about how its index handles AI grounding. For publishers and brands trying to win AI citations, the framework reveals exactly what Bing's system needs from a page before that page can be used as evidence. Here is what grounding means, how it differs from search, and what to optimize before competitors close the gap.

What is grounding in Bing's AI search?

Grounding is the process by which an AI system pulls evidence from indexed web content to construct an answer it can defend. Microsoft frames the contrast plainly: search indexing was built to help humans decide what to read, and grounding indexing is being built to help AI systems decide what to say. The same crawling and quality infrastructure powers both, but the optimization targets diverge once the system stops linking to pages and starts producing answers.

The practical consequence is that Bing's grounding layer does not retrieve whole pages. The system breaks content into smaller chunks, evaluates each chunk for evidence quality, and decides whether the available material is strong enough to support a specific claim. If the evidence is missing, stale, or conflicting, the system can choose not to answer at all. That single design choice, called abstention in Microsoft's framework, is one of the largest behavioral differences between Bing's traditional search index and its grounding layer. Aligning content for AI search systems requires understanding chunk-level evaluation, not just page-level ranking.

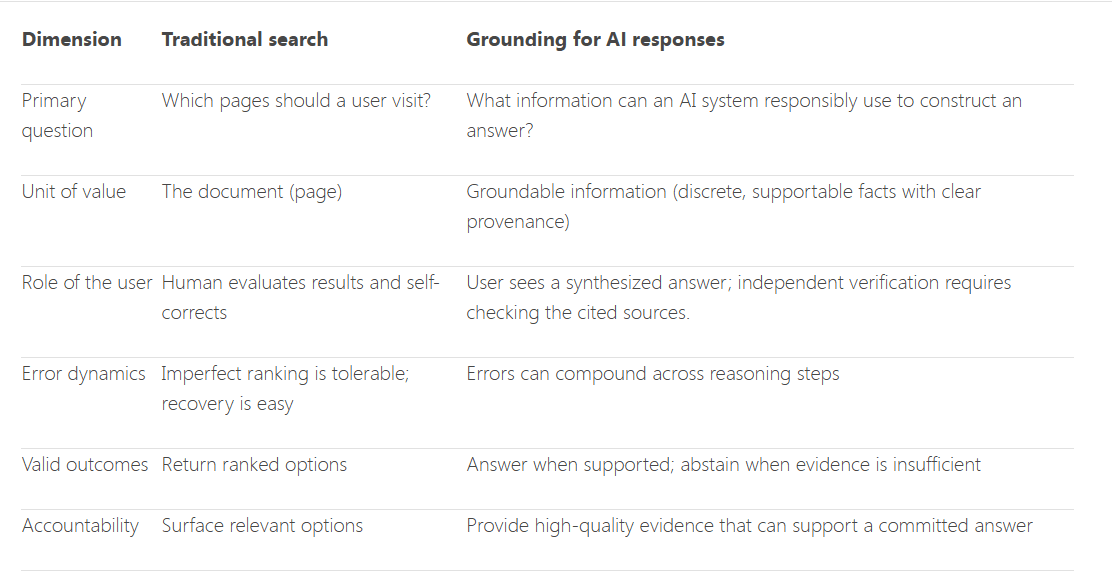

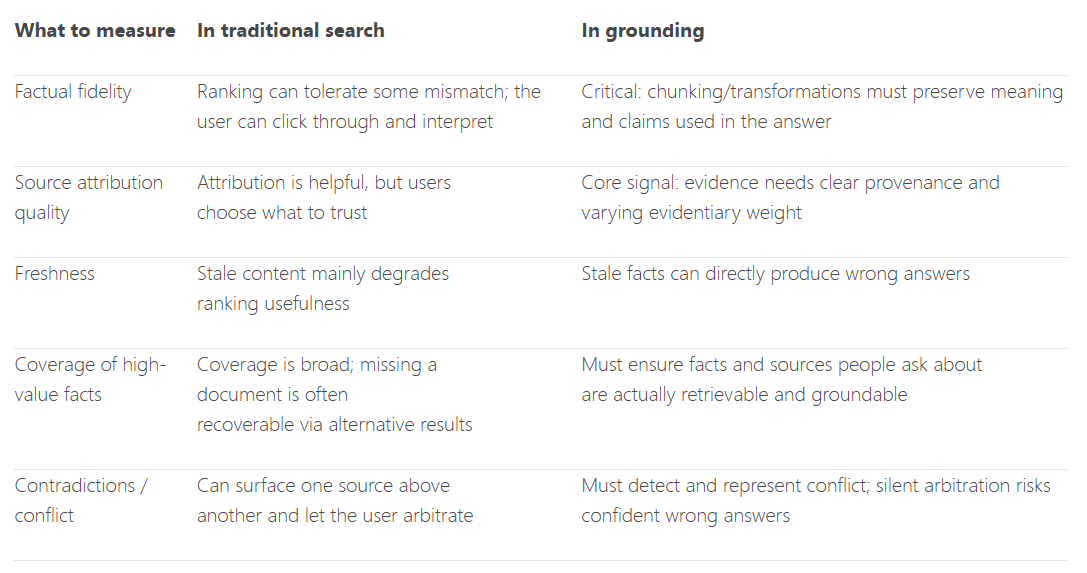

How grounding indexing differs from traditional search indexing

Microsoft identifies five measurement areas where grounding diverges from search. Each shifts how a page earns its place in an AI answer rather than its place on a results page.

| Measurement Area | Traditional Search | Grounding Indexing |
|---|---|---|
| Factual fidelity | Some chunk distortion is tolerable; user can click through | Chunk distortion can produce wrong answers silently |
| Source attribution quality | Helpful for context | Core signal that decides which sources carry evidence weight |
| Freshness | Stale content is a ranking problem | Stale facts produce misleading AI responses |
| Coverage of high-value facts | Missing one document is a recoverable gap | Specific facts must be available and groundable |
| Contradiction handling | Surface multiple sources, let user decide | System must reconcile or abstain |

Source: Microsoft Bing's blog post on grounding.

Factual fidelity

Factual fidelity asks whether the indexed representation of a page accurately preserves the original meaning. The chunking process used in retrieval-augmented generation can distort substance in ways that never appear in any ranking signal. A page that scans well for a human reader can ship as a misrepresented chunk. Pages built with clear semantic structure, short factual sentences, and explicit subject-verb-object phrasing chunk more cleanly.

Source attribution quality

Microsoft calls attribution helpful in traditional search but a core signal in grounding, because not all indexed content carries equal evidentiary weight. A peer-reviewed study, a Wikipedia summary, and a marketing post can all rank in the same SERP without the engine arbitrating which the user should trust. Grounding makes that arbitration on behalf of the user. Author credentials, named sources, and dated references become signals the system actually reads, and pages with structured data and schema markup for AI make those signals machine-legible.

Freshness

Stale content is a ranking problem in search. In grounding, a stale fact produces a misleading response, which is a categorically different failure mode. Pages with visible "last updated" dates, current statistics, and refreshed examples carry more weight in grounding because the cost of citing outdated material is higher when the AI commits to a specific answer.

Coverage of high-value facts

Coverage measures whether the specific facts and sources people ask about are actually available and groundable. A missed document in search is recoverable because alternative results exist. In grounding, a missing fact often means the AI fabricates an answer or abstains. Brands that document the answers buyers actually search for, with named data and direct phrasing, give the system more material to work with.

Contradiction handling

Search engines surface conflicting sources and let humans pick. Grounding systems cannot. Microsoft warns that an AI system silently arbitrating between contradictory sources may confidently assert the wrong thing, which is why Bing's grounding layer treats unresolved contradictions as a reason to abstain. Pages that align with consensus on factual matters and avoid contradicting their own data gain an advantage that traditional ranking signals never measured.

Why abstention and iterative retrieval matter for content

Abstention is a valid outcome for a grounding system when supporting evidence is missing, stale, or conflicting. Iterative retrieval is the second design difference Microsoft flagged: the system may ask follow-up questions, refine retrieval based on intermediate results, and combine evidence from multiple sources before generating an answer. Errors early in that loop compound through later reasoning steps in ways no human reviewer catches in real time.
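To make the abstention rule concrete, here is a minimal Python sketch of the three conditions described above (missing, stale, or conflicting evidence). It is an illustration only, not Microsoft's implementation: the `Chunk` fields, the `ground_or_abstain` function, and the 365-day freshness window are all hypothetical choices made for the example.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Chunk:
    source: str         # hypothetical: where the chunk came from
    claim_value: str    # hypothetical: the fact the chunk asserts, as a string
    last_updated: date  # hypothetical: when the source was last refreshed


def ground_or_abstain(chunks, today, max_age_days=365):
    """Return (answer, sources), or (None, reason) when the sketch abstains."""
    # Missing evidence: nothing was retrieved for the claim.
    if not chunks:
        return None, "missing evidence"

    # Stale evidence: every retrieved chunk is older than the assumed window.
    cutoff = today - timedelta(days=max_age_days)
    fresh = [c for c in chunks if c.last_updated >= cutoff]
    if not fresh:
        return None, "stale evidence"

    # Conflicting evidence: fresh chunks disagree on the value.
    values = {c.claim_value for c in fresh}
    if len(values) > 1:
        return None, "conflicting evidence"

    return values.pop(), sorted(c.source for c in fresh)
```

A real grounding layer would score evidence rather than compare strings, but the shape is the same: the system answers only when fresh sources agree, and otherwise returns a reason instead of a guess.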

Both behaviors mean a page that ranks well in traditional Bing or Google can still be invisible in grounded AI answers if the chunked content fails one of the five measurement areas. Passionfruit's research on AI brand recommendation variability found AI tools produce different recommendation lists more than 99% of the time, so single-snapshot data is unreliable for measuring grounding performance.

How to optimize content for Bing's grounding system

The five measurement areas translate into a short list of practical content moves. Each addresses a specific failure mode the grounding layer evaluates.

Step 1: Audit how your pages chunk

Open your highest-priority pages and read each section as if it stood alone. Sections that depend on context from earlier paragraphs will misrepresent your meaning when chunked. Add self-contained answer blocks of 100-180 words near the top of every section so each chunk carries enough context to be cited accurately. Run priority pages through the GEO checklist for AI search readiness before assuming any page is grounding-ready.
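The audit above can be partly automated. The following Python sketch checks each section's opening answer block against the 100-180 word target and flags openers that lean on earlier context. The opener list and the `audit_sections` function are assumptions made for illustration; they are a rough heuristic, not a rule taken from Microsoft's framework.

```python
# Hypothetical list of openers that suggest a block depends on earlier text.
CONTEXT_DEPENDENT_OPENERS = (
    "this ", "that ", "these ", "those ", "it ", "they ",
    "as mentioned", "as noted", "as above",
)


def audit_sections(sections, min_words=100, max_words=180):
    """Check each section's answer block for length and self-containment.

    sections: dict mapping a heading to the text of its opening answer block.
    Returns a dict mapping each heading to a list of issues (empty if none).
    """
    report = {}
    for heading, text in sections.items():
        issues = []
        word_count = len(text.split())
        if not min_words <= word_count <= max_words:
            issues.append(
                f"answer block has {word_count} words; "
                f"target is {min_words}-{max_words}"
            )
        if text.strip().lower().startswith(CONTEXT_DEPENDENT_OPENERS):
            issues.append("opens with a context-dependent phrase")
        report[heading] = issues
    return report
```

A flagged section is a prompt for a human read, not a verdict: a block can open with "This" and still stand alone, so treat the output as a triage list.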

Step 2: Strengthen attribution and authorship signals

Add author bylines with credentials, link to primary data sources by name and date, and make first-hand experience explicit. Generic "industry research shows" phrasing carries less attribution weight than "according to [named source, dated report]." For YMYL (Your Money or Your Life) categories such as health and finance, treat credentialed expert review as a hard requirement.
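One way to make authorship and sourcing machine-legible is schema.org `Article` markup. The Python sketch below builds a JSON-LD string using real schema.org properties (`author`, `jobTitle`, `datePublished`, `dateModified`, `citation`). The `article_jsonld` helper and its parameters are hypothetical, and Microsoft's post does not confirm which of these properties the grounding layer reads.

```python
import json
from datetime import date


def article_jsonld(headline, author_name, author_job_title,
                   published, modified, citations):
    """Build schema.org Article JSON-LD with author and date signals.

    published, modified: datetime.date objects.
    citations: list of strings naming the primary sources the page cites.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {
            "@type": "Person",
            "name": author_name,
            "jobTitle": author_job_title,
        },
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
        "citation": citations,
    }
    return json.dumps(data, indent=2)
```

The returned string goes inside a `<script type="application/ld+json">` tag in the page head. Keep the markup consistent with the visible byline and dates, since a mismatch between markup and page copy is itself a contradiction.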

Step 3: Refresh facts and dates on a schedule

Set a quarterly refresh cadence for the top 20% of pages by business value. Update statistics, dates, and examples each cycle. Visible "last updated" dates give the grounding layer a freshness signal it can read directly, and Passionfruit's research on Search Console measurement reliability shows why pairing visible refresh signals with verified measurement data matters in 2026.
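The refresh cadence above can be turned into a simple worklist. This Python sketch ranks pages by business value, takes the top 20%, and returns those not updated within roughly one quarter (90 days). The page dictionary keys (`url`, `business_value`, `last_updated`) and the `pages_due_for_refresh` function are assumptions for the example; substitute whatever your CMS or analytics export provides.

```python
import math
from datetime import date, timedelta


def pages_due_for_refresh(pages, today, top_fraction=0.2, max_age_days=90):
    """Return URLs of top-value pages whose last update is older than the window.

    pages: list of dicts with 'url', 'business_value', and 'last_updated' (date).
    """
    if not pages:
        return []
    # Rank by business value, highest first, and keep the top fraction.
    ranked = sorted(pages, key=lambda p: p["business_value"], reverse=True)
    top_n = max(1, math.ceil(len(ranked) * top_fraction))
    # Anything last updated before the cutoff is due for a refresh.
    cutoff = today - timedelta(days=max_age_days)
    return [p["url"] for p in ranked[:top_n] if p["last_updated"] < cutoff]
```

Running this at the start of each quarter gives editors a short, prioritized list instead of a sitewide audit.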

Step 4: Cover the high-value facts buyers ask about

Build dedicated sections that answer the specific questions your buyers ask, with concrete data, named sources, and direct phrasing. Avoid burying answers inside long narrative content. Grounding favors structured FAQ sections marked up with FAQPage schema for AI answers, comparison tables, and direct subject-verb-object statements over discursive prose.
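For the FAQ sections mentioned above, the markup follows the schema.org `FAQPage` type, where each `Question` carries an `acceptedAnswer`. The Python sketch below generates that JSON-LD from question and answer pairs. The `faq_jsonld` helper is hypothetical, and the answer text should match what appears on the page.

```python
import json


def faq_jsonld(qa_pairs):
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }
    return json.dumps(data, indent=2)
```

As an example, `faq_jsonld([("What is grounding in AI search?", "Grounding is the process by which an AI system pulls evidence from indexed web content to construct an answer.")])` yields a block ready to embed in a `<script type="application/ld+json">` tag.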

Step 5: Eliminate internal contradictions

Run editorial passes that check whether different pages contradict each other on key facts. Pricing, feature lists, claims data, and compliance language should reconcile across the content set. A site that contradicts itself signals weaker evidence than one that aligns.
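Part of that editorial pass can be scripted once key facts are extracted per page. The Python sketch below assumes you already have a mapping of page URL to fact values (for example pricing or seat limits) and reports every fact that appears with more than one value across the site. The `find_contradictions` function and the input shape are hypothetical; extracting the facts is the harder step and is not shown.

```python
from collections import defaultdict


def find_contradictions(page_facts):
    """Report facts that take more than one value across pages.

    page_facts: dict mapping a page URL to a dict of fact_key -> value.
    Returns a dict of fact_key -> {normalized value: sorted list of URLs}.
    """
    seen = defaultdict(lambda: defaultdict(list))
    for url, facts in page_facts.items():
        for key, value in facts.items():
            # Normalize lightly so "$49" and " $49 " count as the same value.
            normalized = str(value).strip().lower()
            seen[key][normalized].append(url)
    return {
        key: {value: sorted(urls) for value, urls in by_value.items()}
        for key, by_value in seen.items()
        if len(by_value) > 1
    }
```

An empty result means the tracked facts reconcile. Any entry in the result names the exact pages to fix, which keeps the pass focused on pricing, feature lists, and compliance language rather than a full reread.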

Win the citation, not just the click

Bing's grounding framework is the most explicit public map of what AI search systems need from a page. The brands compounding AI visibility right now treat grounding signals as the new optimization target. To see which pages on your site are grounding-ready and which need restructuring, start with Passionfruit Labs, explore the end-to-end AI search and SEO growth service, or request a quote before competitors close the citation gap. The Passionfruit case studies show the playbook applied across B2B SaaS and consumer brands.

Frequently asked questions

The questions below come up most often when teams start optimizing for Bing's grounding system.

What is grounding in AI search?

Grounding is the process by which an AI system pulls evidence from indexed web content to construct an answer. The system retrieves chunks, evaluates each for evidence quality, and decides whether the available material is strong enough to support the response.

How is grounding indexing different from search indexing?

Search indexing optimizes for which pages a user should visit. Grounding indexing optimizes for which information an AI system can responsibly use to construct a response. Microsoft identifies five divergence areas: factual fidelity, source attribution quality, freshness, coverage of high-value facts, and contradiction handling.

What does abstention mean in Bing's grounding system?

Abstention is when the grounding system declines to answer because evidence is missing, stale, or conflicting. Traditional search does not need this judgment because users evaluate options themselves. Grounding requires it because the AI commits to a single answer rather than presenting alternatives.

Why do my pages get cited less in AI answers than they rank in Bing?

Ranking signals measure relevance for a human reader. Grounding signals measure evidence quality at the chunk level. A page can rank well, then fail grounding because chunks misrepresent the original meaning, attribution is weak, facts are stale, or content contradicts other sources.

Does Bing show citation data for AI-generated answers?

Yes. Microsoft launched the AI Performance dashboard in Bing Webmaster Tools in February 2026, added Grounding Query to Pages Mapping in March 2026, and previewed Citation Share, grounding query intent labels, and GEO-focused recommendations at SEO Week in April 2026.

How does Bing grounding compare to Google's AI search ranking?

Bing's grounding framework is more publicly documented than Google's equivalent layer. Bing has named the five measurement areas, described abstention as a design choice, and built page-level citation reporting in Webmaster Tools. Google has not published the same level of detail, though similar principles likely apply across both grounding systems.