SEO

Join 500+ brands growing with Passionfruit!

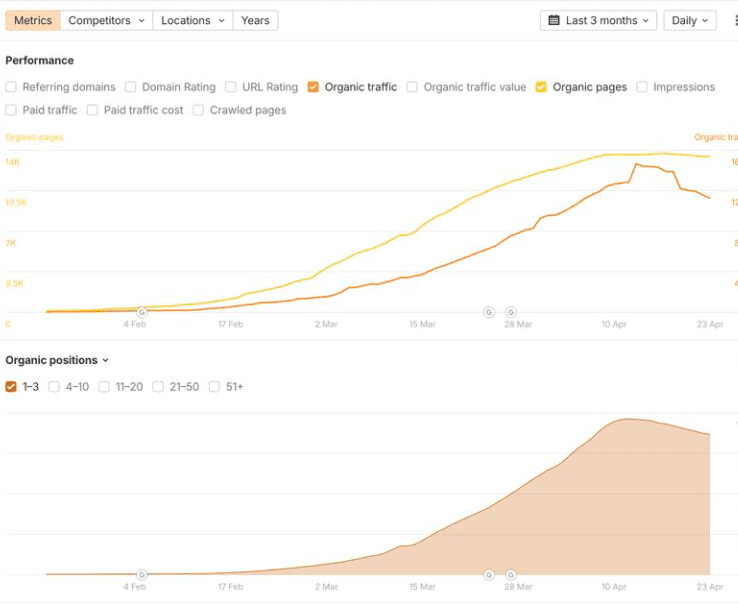

A familiar pattern keeps showing up in scaled-content case studies on X and LinkedIn: a new batch of AI-generated pages launches, traffic spikes for two to three months, then collapses just as fast. Practitioners call it the "Mt. AI" curve. The problem is rarely AI itself. Ahrefs analyzed 600,000 pages and found 86.5% of top-ranking pages contain some AI content, with a near-zero correlation between AI use and ranking position. Google's quality threshold is what kills the long tail of scaled content, and it does so quietly because the freshness boost masks the underlying issue for the first several weeks. Here is how the threshold actually works and what to fix before the next core update closes the recovery window.

What is Google's quality threshold?

Google's quality threshold is the dynamic standard a page must meet to stay in Google's search index and be served in results. The bar shifts daily based on global search demand, the volume of new content being indexed, and the quality of competing pages on the same topic. Pages that fall below the threshold get crawled less frequently, drop from the index, or remain technically indexed but ineligible to rank.

Adam Gent's research at Indexing Insight documented the practical mechanics. Google actively removes content from its index, often within 130 days of the last meaningful crawl, when pages fail to generate engagement signals that justify storage. Indexing has effectively become a proxy for quality, and the same index governs whether a page is eligible to be cited in AI Overviews and AI Mode. A scaled-content site that loses index coverage loses both organic and AI search visibility at once, which is why the GEO checklist for AI search readiness starts with index health.
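The 130-day window can be monitored with a simple date check. The sketch below is a minimal illustration, assuming you already have a last-crawl date per URL (for example from URL Inspection exports or your own server logs). The function name `at_risk_urls` and the 30-day `warn_days` margin are hypothetical, and the 130-day figure is Indexing Insight's observation rather than a documented Google constant.

```python
from datetime import date

# Observed by Indexing Insight; an empirical pattern, not a documented Google constant.
REMOVAL_WINDOW_DAYS = 130


def at_risk_urls(last_crawled: dict[str, date], today: date,
                 warn_days: int = 30) -> dict[str, int]:
    """Return {url: days_since_last_crawl} for URLs within `warn_days` of the window.

    `last_crawled` is a hypothetical input mapping each URL to its last known
    crawl date, sourced from URL Inspection exports or server logs.
    """
    cutoff = REMOVAL_WINDOW_DAYS - warn_days
    flagged = {}
    for url, crawled in last_crawled.items():
        age = (today - crawled).days
        if age >= cutoff:
            flagged[url] = age
    # Oldest crawl first, so the most urgent URLs lead the list.
    return dict(sorted(flagged.items(), key=lambda item: item[1], reverse=True))


if __name__ == "__main__":
    crawls = {
        "https://example.com/guides/a": date(2024, 1, 10),
        "https://example.com/guides/b": date(2024, 4, 20),
        "https://example.com/locations/c": date(2024, 5, 25),
    }
    print(at_risk_urls(crawls, today=date(2024, 6, 1)))
```

Flagging URLs before they cross the window leaves time to strengthen internal links to them or fold them into a stronger page.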

Why does scaled AI content plateau after the freshness boost?

The plateau happens because the freshness boost and the quality threshold operate on different timelines. Google processes a large URL drop quickly and surfaces new pages for queries that deserve fresh results, which produces the spike everyone sees. The threshold evaluation runs more slowly. Once the novelty fades, Google reassesses the batch on the engagement signals it generated during the boost. A 2,000-page programmatic launch, for example, can absorb crawl budget for the first 60-90 days while the system samples a subset of URLs to decide how much of the wider batch is worth keeping.

If the sample underperforms on dwell time, click-through rate, and downstream engagement, Google pulls crawl resources from the whole batch. The remainder struggles to gain traction because the URL pattern has already failed the threshold check. Sites that build AI search visibility through high-quality content instead of volume tend to avoid the collapse.

How Google evaluates new content at scale

Google handles a large URL drop the same way it handles any inventory expansion: the system samples first, then decides how much of the rest is worth crawling.

1. Google detects new inventory through sitemaps, internal linking, and Search Console submissions.
2. The system processes a representative sample of new URLs, often selected by URL pattern such as a subfolder.
3. Sampled URLs get a freshness boost while engagement signals accumulate.
4. After 4-12 weeks, Google evaluates engagement against the current threshold for the relevant query categories.
5. If the sample falls short, crawl frequency drops across the wider batch and pages risk removal under the 130-day rule.
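The sampling process above can be expressed as a toy model. This is not Google's algorithm: the sample fraction, the 0-to-1 engagement score, and the threshold value are all hypothetical parameters chosen for illustration. The model only shows the mechanism described here, where a sample drawn per URL pattern decides the fate of the whole pattern, including pages that were never evaluated.

```python
import random
from collections import defaultdict
from statistics import mean
from urllib.parse import urlparse


def url_pattern(url: str) -> str:
    """Group URLs by first path segment, e.g. /locations/austin -> 'locations'."""
    segments = [s for s in urlparse(url).path.split("/") if s]
    return segments[0] if segments else "(root)"


def evaluate_batch(engagement: dict[str, float], threshold: float,
                   sample_fraction: float = 0.2, seed: int = 0) -> dict[str, bool]:
    """Toy model: sample each URL pattern, then keep or demote the whole pattern.

    `engagement` maps URL -> a hypothetical 0..1 engagement score gathered
    during the freshness boost. Returns {pattern: keeps_crawl_priority}.
    """
    rng = random.Random(seed)
    by_pattern = defaultdict(list)
    for url in engagement:
        by_pattern[url_pattern(url)].append(url)

    decisions = {}
    for pattern, urls in by_pattern.items():
        k = max(1, round(len(urls) * sample_fraction))
        sample = rng.sample(urls, k)
        # The verdict on the sample applies to every URL in the pattern.
        decisions[pattern] = mean(engagement[u] for u in sample) >= threshold
    return decisions
```

Because the decision is made per pattern, a strong page under a demoted subfolder loses priority along with its weaker neighbors, which is the "fail by association" effect the FAQ describes.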

The threshold is dynamic. The bar rises as better content is published in the same query space, so a page that cleared it in January can fall below it by June without any change to the page itself. Google's official guidance states that automation, including AI, is acceptable when used to create helpful content, but content created primarily to manipulate rankings remains a spam violation regardless of how it was produced.

How to keep scaled content above Google's quality threshold

The practical answer is to shift effort from production scale to quality maintenance at scale. Five operational changes do most of the work.

Step 1: Audit the index, not just the rankings

Before optimizing any single page, pull the Page indexing report (formerly Index Coverage) from Search Console and segment indexed versus excluded URLs by template or subfolder. The patterns reveal which scaled-content batches Google has already deprioritized. Pair the data with Passionfruit's research on Search Console measurement reliability, which documented a Google logging bug that inflated impression counts for nearly a year. Index coverage is the more reliable threshold signal during periods when impression data drifts.
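A minimal sketch of the segmentation step, assuming you have exported URLs and their indexing status into a CSV with `url` and `status` columns. Those column names and the "Indexed" label are hypothetical; adjust them to your export, and treat the ratios as directional since Search Console exports can be sampled rather than complete.

```python
import csv
import io
from collections import Counter
from urllib.parse import urlparse


def subfolder(url: str) -> str:
    """Map a URL to its top-level subfolder, e.g. /locations/x -> '/locations/'."""
    segments = [s for s in urlparse(url).path.split("/") if s]
    return "/" + segments[0] + "/" if segments else "/"


def coverage_by_subfolder(rows, indexed_status: str = "Indexed") -> dict[str, float]:
    """Return {subfolder: share of URLs with `indexed_status`}, lowest coverage first.

    `rows` is any iterable of dicts with hypothetical 'url' and 'status' keys.
    """
    total, indexed = Counter(), Counter()
    for row in rows:
        folder = subfolder(row["url"])
        total[folder] += 1
        if row["status"] == indexed_status:
            indexed[folder] += 1
    shares = {f: indexed[f] / total[f] for f in total}
    return dict(sorted(shares.items(), key=lambda item: item[1]))


if __name__ == "__main__":
    export = io.StringIO(
        "url,status\n"
        "https://example.com/blog/a,Indexed\n"
        "https://example.com/blog/b,Indexed\n"
        "https://example.com/locations/x,Crawled - currently not indexed\n"
        "https://example.com/locations/y,Indexed\n"
    )
    for folder, share in coverage_by_subfolder(csv.DictReader(export)).items():
        print(f"{folder:<14} {share:.0%} indexed")
```

Sorting lowest coverage first puts the batches Google has most likely deprioritized at the top of the audit.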

Step 2: Consolidate thin pages into comprehensive resources

Most scaled-content sites that recover do so by consolidating thin pages into fewer comprehensive resources, then redirecting the originals to the consolidated targets. Improving every thin page in place rarely works because the URL pattern has already been flagged. Cutting page count by 60-80% and rebuilding around fewer resources with first-hand data, named sources, comparison tables, and structured FAQ sections is the recovery pattern that moves index coverage back upward.
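Consolidation is easier to audit when the redirect plan is data rather than a pile of ad hoc rules. The sketch below assumes a hypothetical mapping from each consolidated target URL to the thin pages it absorbs. It rejects conflicts (one thin page sent to two targets) and chains (a target that is itself being redirected), then prints one permanent redirect per line. The `old new 301` output format is illustrative only; translate it into whatever your server or CDN expects.

```python
def build_redirect_map(consolidation: dict[str, list[str]]) -> dict[str, str]:
    """Turn {target_url: [thin_urls, ...]} into {thin_url: target_url}.

    Raises ValueError on conflicts (one thin URL mapped to two targets) and on
    chains (a target that is itself scheduled to redirect), since conflicts are
    ambiguous and chains add extra hops for crawlers and users.
    """
    redirects: dict[str, str] = {}
    for target, sources in consolidation.items():
        for source in sources:
            if source == target:
                continue  # The consolidated page keeps its own URL.
            if source in redirects and redirects[source] != target:
                raise ValueError(f"{source} assigned to {redirects[source]} and {target}")
            redirects[source] = target
    chained = [t for t in consolidation if t in redirects]
    if chained:
        raise ValueError(f"targets that are themselves redirected: {chained}")
    return redirects


if __name__ == "__main__":
    # Hypothetical example: three thin pricing pages folded into one guide.
    plan = {
        "/guides/crm-pricing/": ["/crm-pricing-small-business/", "/crm-pricing-2023/", "/crm-cost/"],
    }
    for old, new in sorted(build_redirect_map(plan).items()):
        print(old, new, 301)
```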

Step 3: Layer human editorial judgment on top of AI production

Google's policy line sits between AI as a production assist and AI as the entire product. Sites that survive scaled-content enforcement use AI for outlines, drafts, and structural consistency, then add human review for accuracy, original insight, and topical authority. Author bylines with credentials, named data sources, and first-hand examples that AI cannot produce on its own separate your output from commodity content and move it toward what Google now publicly calls "non-commodity content."

Step 4: Add structured data and clear topical clustering

Pages published without AI-friendly schema markup lose context that Google's systems use to judge quality fit. Article schema with author credentials, FAQPage schema for AI answers where appropriate, and a clean topical cluster help Google place each URL in the right competitive set. Pages placed in the wrong category fail the threshold for queries they were never the right answer to.
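As an illustration, the sketch below builds Article and FAQPage JSON-LD with Python's standard `json` module. The types and property names (`Article`, `Person`, `headline`, `dateModified`, `jobTitle`, `FAQPage`, `mainEntity`, `Question`, `acceptedAnswer`, `Answer`) are schema.org vocabulary; the author name, title, and page text are placeholders, and the helper function names are hypothetical.

```python
import json


def article_jsonld(headline: str, author_name: str, author_title: str,
                   date_modified: str) -> dict:
    """Article markup carrying author credentials (schema.org Article + Person)."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "dateModified": date_modified,  # ISO 8601 date string
        "author": {
            "@type": "Person",
            "name": author_name,
            "jobTitle": author_title,
        },
    }


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """FAQPage markup with one Question/Answer entity per (question, answer) pair."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


if __name__ == "__main__":
    blocks = [
        # Placeholder author details; use the real byline and credentials.
        article_jsonld("What is Google's quality threshold?", "Jane Doe",
                       "Head of SEO", "2024-06-01"),
        faq_jsonld([("Does Google penalize AI-generated content?",
                     "Not directly; scaled content abuse is the trigger.")]),
    ]
    for block in blocks:
        # Each block goes in its own <script type="application/ld+json"> tag.
        print(json.dumps(block, indent=2))
```

Generating markup from the same data that renders the page keeps the byline and FAQ text in the schema consistent with what users see.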

Step 5: Refresh systematically rather than reactively

Content updated within the past three months shows materially higher citation rates than older pages, which makes refresh cadence part of the threshold defense. Set a quarterly refresh schedule for the top 20% of pages by business value and a quality cull every six months for the bottom 10% by engagement. The refresh protects against threshold drift; the cull protects against weak pages dragging down site-wide quality.
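The schedule can be turned into a repeatable selection. A minimal sketch, assuming each page carries a hypothetical `business_value` score and an `engagement` score from your own analytics (neither is a Google metric). It returns the top 20% by value for the quarterly refresh and the bottom 10% by engagement for the six-month cull review, rounding up so small sites still get at least one page in each list.

```python
import math
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    business_value: float  # hypothetical: revenue, leads, or another internal score
    engagement: float      # hypothetical: e.g. engaged sessions per visit


def plan_maintenance(pages: list[Page], refresh_share: float = 0.20,
                     cull_share: float = 0.10) -> tuple[list[str], list[str]]:
    """Return (refresh_urls, cull_urls).

    Pages chosen for refresh are excluded from the cull list, so a valuable
    page with weak engagement gets improved rather than removed.
    """
    if not pages:
        return [], []
    n_refresh = math.ceil(len(pages) * refresh_share)
    n_cull = math.ceil(len(pages) * cull_share)

    by_value = sorted(pages, key=lambda p: p.business_value, reverse=True)
    refresh = [p.url for p in by_value[:n_refresh]]

    refresh_set = set(refresh)
    by_engagement = sorted(pages, key=lambda p: p.engagement)
    cull = [p.url for p in by_engagement if p.url not in refresh_set][:n_cull]
    return refresh, cull
```

The cull list is a review queue, not an automatic deletion list: each page on it still needs a decision between consolidating, redirecting, or removing.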

Move from production scale to quality maintenance at scale

Scaling content through AI is no longer a strategy on its own. The brands holding their position past the freshness boost treat quality maintenance as the operating motion, with AI as the tool that makes maintenance feasible at scale. To audit which of your pages sit above or below the current quality threshold, start with Passionfruit Labs, explore the end-to-end AI search and SEO growth service, or request a quote before the next core update closes the recovery window. The Passionfruit case studies document the same playbook applied across B2B SaaS and consumer brands.

Frequently asked questions

The questions below come up most often when teams investigate why their scaled AI content stopped performing.

Does Google penalize AI-generated content?

No, not directly. Google's official position is that automation, including AI, is acceptable when used to produce helpful content. Ahrefs analyzed 600,000 pages and found a correlation of 0.011 between AI content percentage and ranking position, which is negligible. The penalty trigger is scaled content abuse: many pages produced primarily to manipulate rankings rather than to help users.

How do I know if my content has been hit by the quality threshold?

The clearest signals are in Search Console: a sharp drop in indexed page count for a specific subfolder, a rise in "Crawled - currently not indexed" status, or a sudden decline in impressions across pages that share a template. Rank drops that coincide with neither a manual action nor a core update rollout usually point to threshold drift rather than a penalty.
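The first two signals can be checked automatically by comparing two snapshots taken a few weeks apart. A sketch under stated assumptions: you already collect per-subfolder counts of indexed and "Crawled - currently not indexed" URLs from Search Console, and the 20% drop limit is a hypothetical default rather than a Google threshold. The `Snapshot` type and `threshold_alerts` function are illustrative names.

```python
from dataclasses import dataclass


@dataclass
class Snapshot:
    indexed: int
    crawled_not_indexed: int


def threshold_alerts(before: dict[str, Snapshot], after: dict[str, Snapshot],
                     max_index_drop: float = 0.20) -> list[str]:
    """Flag subfolders whose indexed count fell by more than `max_index_drop`
    or whose 'Crawled - currently not indexed' count grew between snapshots."""
    alerts = []
    for folder, old in before.items():
        # A subfolder missing from the newer snapshot is treated as fully dropped.
        new = after.get(folder, Snapshot(0, 0))
        if old.indexed:
            drop = (old.indexed - new.indexed) / old.indexed
            if drop > max_index_drop:
                alerts.append(f"{folder}: indexed pages down {drop:.0%}")
        if new.crawled_not_indexed > old.crawled_not_indexed:
            alerts.append(f"{folder}: 'Crawled - currently not indexed' up "
                          f"{old.crawled_not_indexed} -> {new.crawled_not_indexed}")
    return alerts
```

The growth check fires on any increase; on large sites, adding a minimum absolute change keeps normal day-to-day noise out of the alerts.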

How long does the freshness boost last?

The boost is not fixed. Most new URLs see it run 4-12 weeks depending on query category, content novelty, and engagement signals. Pages that accumulate strong engagement during the boost retain visibility. Pages that fail to engage users lose ranking when Google reassesses against the current quality threshold.

Why does scaled content fail even when individual pages are well-written?

Google evaluates URL patterns, not just individual pages. When a representative sample of a scaled-content batch underperforms, crawl resources are pulled from the entire pattern, including pages that were never directly evaluated. A well-written page in a flagged subfolder can fail by association.

Does updating old content help with the quality threshold?

Yes, refreshes can lift content back above the threshold, but only when the refresh adds genuine new value. Cosmetic date changes do not move engagement signals, and the bar is dynamic, so a page that cleared the threshold last year may need substantive new data, examples, or insight to clear it today.

How is the quality threshold connected to AI search visibility?

Google's index is the same gatekeeper for traditional search and AI search features. A page that loses index coverage also loses eligibility to be cited in AI Overviews and AI Mode, which makes the quality threshold a direct driver of AI search visibility on Google surfaces. Pair recovery work with tracking AI brand mentions across ChatGPT, Perplexity, and Google AI to confirm citations rebuild alongside organic ranking.