Is Query Fan-Out a Marketing Buzzword or Something Relevant for Your SEO?

After eighteen months of "query fan-out" appearing in keynote slides, agency decks, tool vendor landing pages, and Substack newsletters, the question worth asking is not whether you have heard of it. You have. The question is whether it represents a genuine shift in how marketers should build content, or another repackaged SEO concept wearing a new logo.

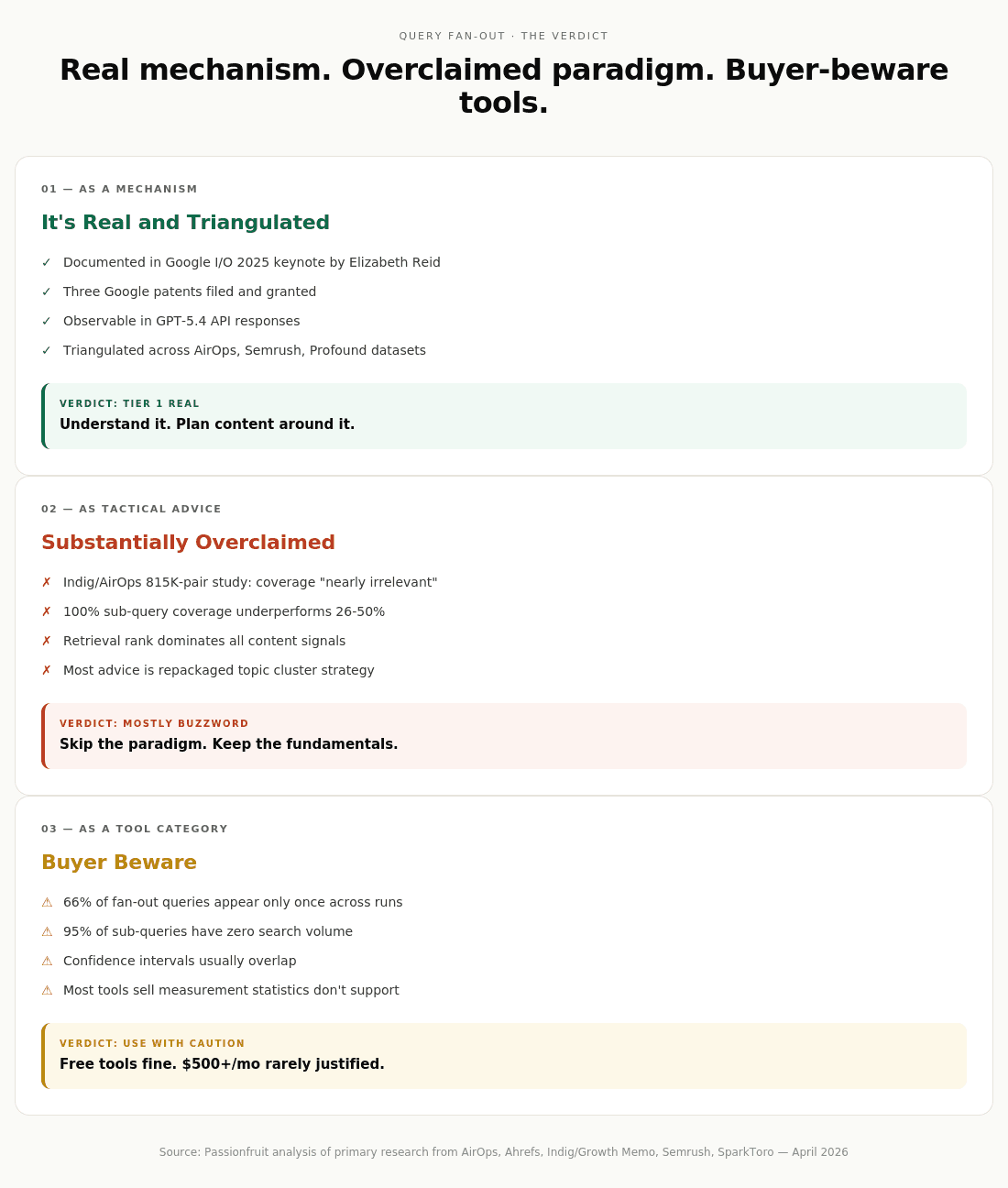

The evidence supports a split verdict.

As a system mechanism, query fan-out is real and triangulated. It is documented in Google's own product announcements, in three separate Google patent filings, in OpenAI's API behavior for GPT-5.4 with web tools enabled, and in large-scale empirical studies. The most rigorous of these, AirOps' analysis of 548,534 pages across 15,000 prompts, found fan-out on 88.6% of queries. When Elizabeth Reid said at Google I/O 2025 that AI Mode "issues a multitude of queries simultaneously," she was describing something AirOps, Semrush, Profound, and independent researchers have all separately measured. This is not industry hallucination.

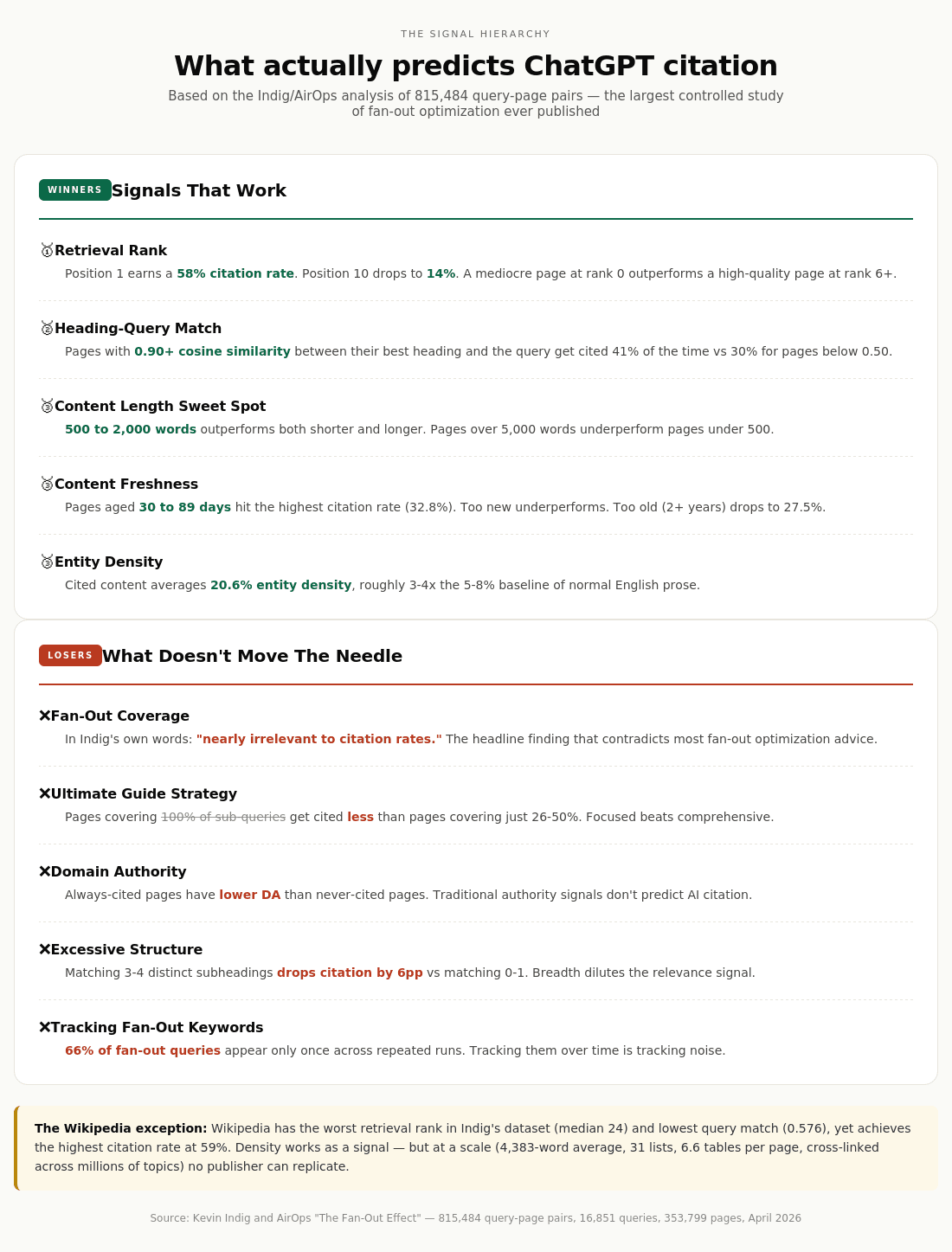

As a tactical optimization paradigm, query fan-out is substantially overclaimed. The most rigorous study ever conducted on fan-out-based content optimization, Kevin Indig and AirOps' April 2026 analysis of 815,484 query-page pairs, found that fan-out coverage is "nearly irrelevant to citation rates." The actual predictors were retrieval rank (58% citation at position 1, 14% at position 10) and heading-query cosine similarity (41% citation at 0.90+ match, 30% below 0.50). Pages covering 100% of a topic's fan-out sub-queries got cited less than pages covering just 26 to 50%. The "ultimate guide" strategy that fan-out optimization advocates push produces the worst citation performance in the dataset.
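
To make the heading-query match metric concrete, here is a minimal sketch of cosine similarity between a query and a page heading. It uses plain word counts as the vectors, which is an assumption made for illustration; the study's vectorization method is not described here, so scores from this sketch are not comparable to the 0.90 and 0.50 thresholds quoted above. The function names and sample strings are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Lowercase the text and count its alphanumeric word tokens."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a: str, b: str) -> float:
    """Cosine similarity between the word-count vectors of two strings."""
    va, vb = tokenize(a), tokenize(b)
    # Dot product over the tokens the two strings share.
    dot = sum(va[t] * vb[t] for t in va.keys() & vb.keys())
    norm_a = math.sqrt(sum(c * c for c in va.values()))
    norm_b = math.sqrt(sum(c * c for c in vb.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # an empty string has no direction to compare
    return dot / (norm_a * norm_b)


# Hypothetical query and headings, for illustration only.
query = "how does query fan-out work"
headings = [
    "How Does Query Fan-Out Work",
    "The Ultimate Guide to Everything About AI Search",
]
for heading in headings:
    print(f"{cosine_similarity(query, heading):.2f}  {heading}")
```

A heading that restates the query scores near 1, while a broad "ultimate guide" heading with no shared terms scores 0. An embedding-based version would also give credit for paraphrases, which word counts cannot do.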

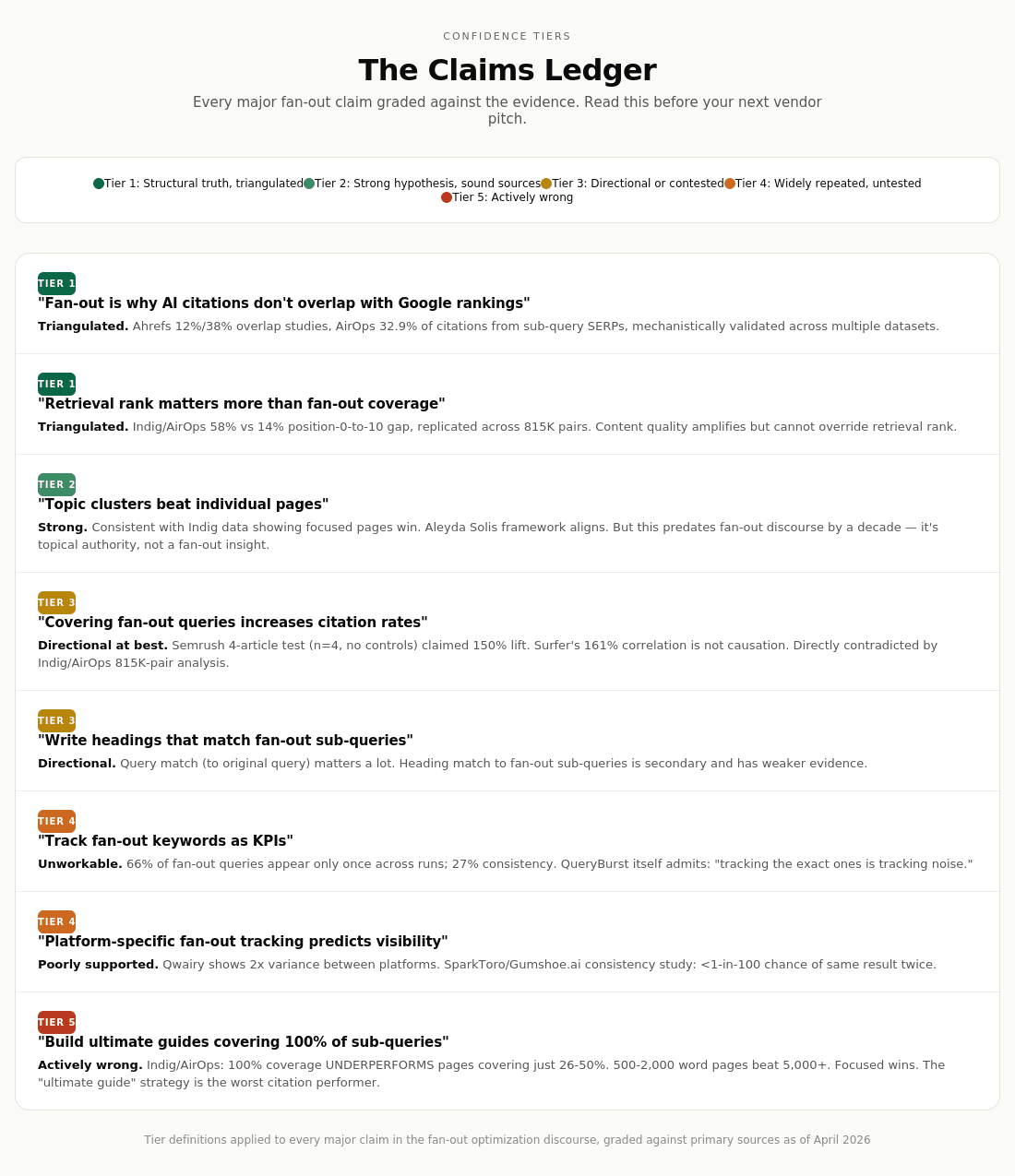

The mechanism is Tier 1 empirical truth. Most of the tactical advice built on top of it is Tier 3 to 4, either directionally right for the wrong reasons or actively contradicted by the best available evidence. A tool market has grown up around measuring something that may not be meaningfully measurable, charging marketers to optimize for a variable that disclosed primary research shows is a weak predictor at best.

The honest read for marketers: understand fan-out as context for why AI citations no longer overlap with Google rankings, but reject the tactical advice telling you to chase fan-out coverage as a distinct discipline. The tactics that actually correlate with AI visibility (strong retrieval rank, direct heading matches, entity density, brand authority, content freshness, technical crawler accessibility) are extensions of good SEO and content fundamentals.

The Split Verdict at a Glance

The Mechanism Is Real

This is the section of the report where there is no genuine dispute. Fan-out exists, is documented, and is observable across four independent layers of evidence.

First-party disclosure

At Google I/O 2025 on May 20, Elizabeth Reid, Google's VP and Head of Search, described the mechanism directly: "AI Mode isn't just giving you information. It's bringing a whole new level of intelligence to search. Under the hood, Search recognizes when a question needs advanced reasoning. It calls on our custom version of Gemini to break the question into different subtopics, and it issues a multitude of queries simultaneously."

Google's official AI Mode announcement confirms that Deep Search, a more intensive variant, "can issue hundreds of sub-queries for complex questions." In November 2025, Gemini 3's rollout explicitly framed the model as having "stronger reasoning, improved query fan-out."

Patent documentation

Google filed application US20240289407A1 ("Search with stateful chat") describing a system that uses LLMs to generate multiple alternate queries from an original search, what Google internally calls "prompted expansion." A second patent, US12158907B1 ("Thematic Search"), granted in December 2024, describes organizing results into themes and sub-themes with AI-generated summaries, closely paralleling what practitioners now call fan-out. A third patent, US11663201B2 ("Generating query variants using a trained generative model"), filed in 2018 and granted in 2023, outlines the training procedure for the query-expansion models themselves. These are not speculative. They are filed and granted intellectual property.

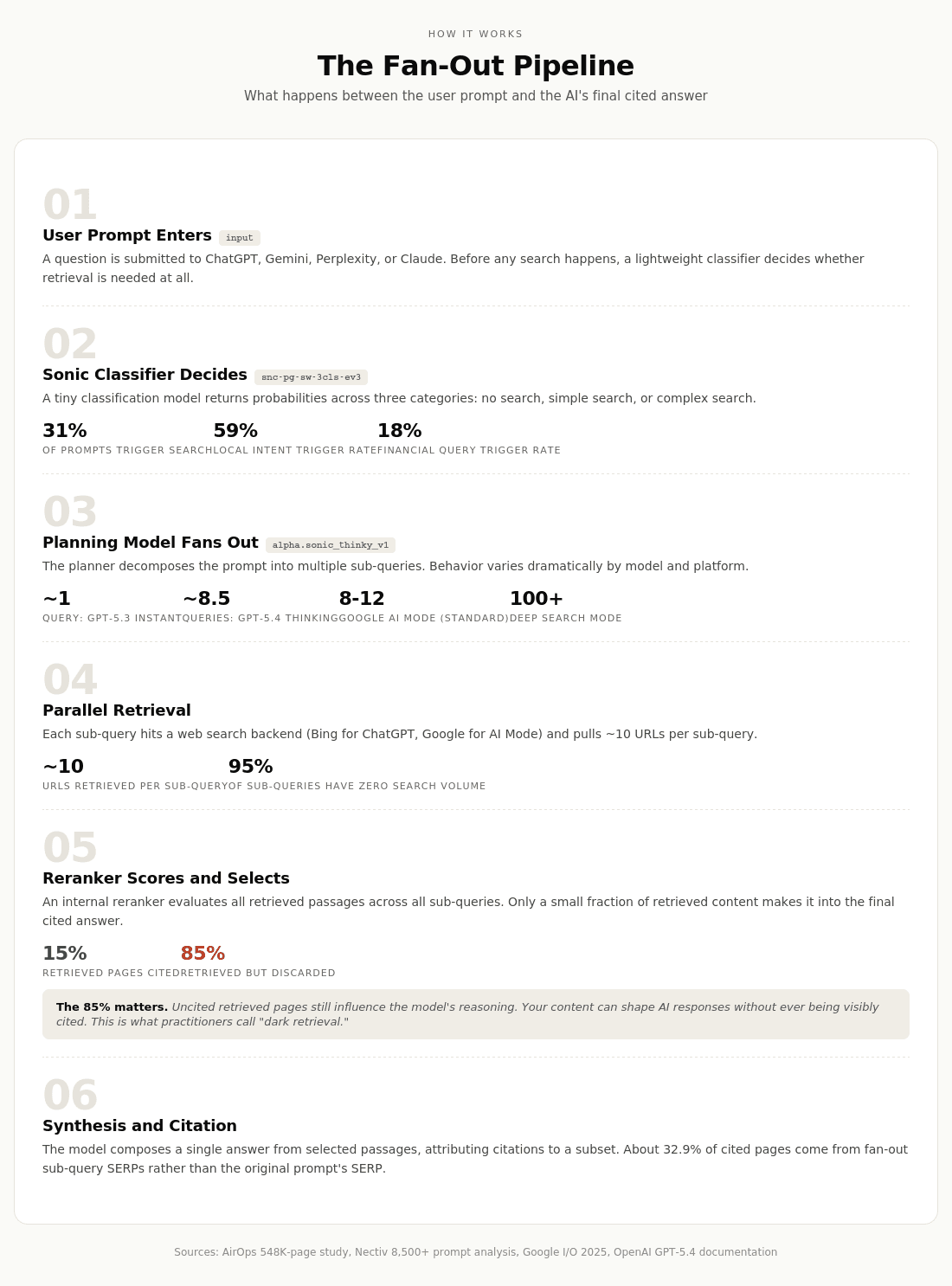

How the fan-out pipeline actually works

Platform-level behavioral evidence

Fan-out is not Google-exclusive. OpenAI's API for GPT-5.4 with web search tools enabled returns fan-out queries, web results, and citations in the response object, a fact confirmed by independent Python scripts from Chris Long and Jérôme Salomon that extract the data. ChatGPT's underlying architecture uses what industry reverse-engineering has identified as a multi-stage pipeline: a "sonic classifier" (snc-pg-sw-3cls-ev3) that decides whether to trigger search, then a planning model (alpha.sonic_thinky_v1) that generates sub-queries. Perplexity and Claude implement equivalent mechanisms, with architectural variations.

Independent empirical confirmation

Multiple research datasets triangulate this. Qwairy's analysis of 102,018 queries across 38,418 user prompts (September to November 2025) found fan-out frequencies varying by provider: Perplexity generates multiple queries only 29.5% of the time (70.5% single-query), while ChatGPT generates multiple queries 67.3% of the time (32.7% single-query). Nectiv's separate analysis found ChatGPT triggers search on roughly 31% of prompts, with an average of 2 fan-out queries per prompt that triggers search. iPullRank's December 2025 research documented that Google can fire "hundreds of searches per single user query in AI Mode."

The GPT-5.3 to 5.4 transition provides the cleanest natural experiment:

The verdict on the mechanism is clear: Tier 1 structural truth. Fan-out is real, behaviorally observable, variable across platforms and model versions, and materially reshapes which content gets retrieved. Anyone arguing this is marketing invention is wrong on the facts.

What Fan-Out Actually Does

The next question, and where commercial overclaim begins to creep in, is what this mechanism actually does to content visibility. Several large-scale studies now answer this directly, and together they paint a picture more nuanced than either the buzzword camp or the skeptic camp acknowledges.

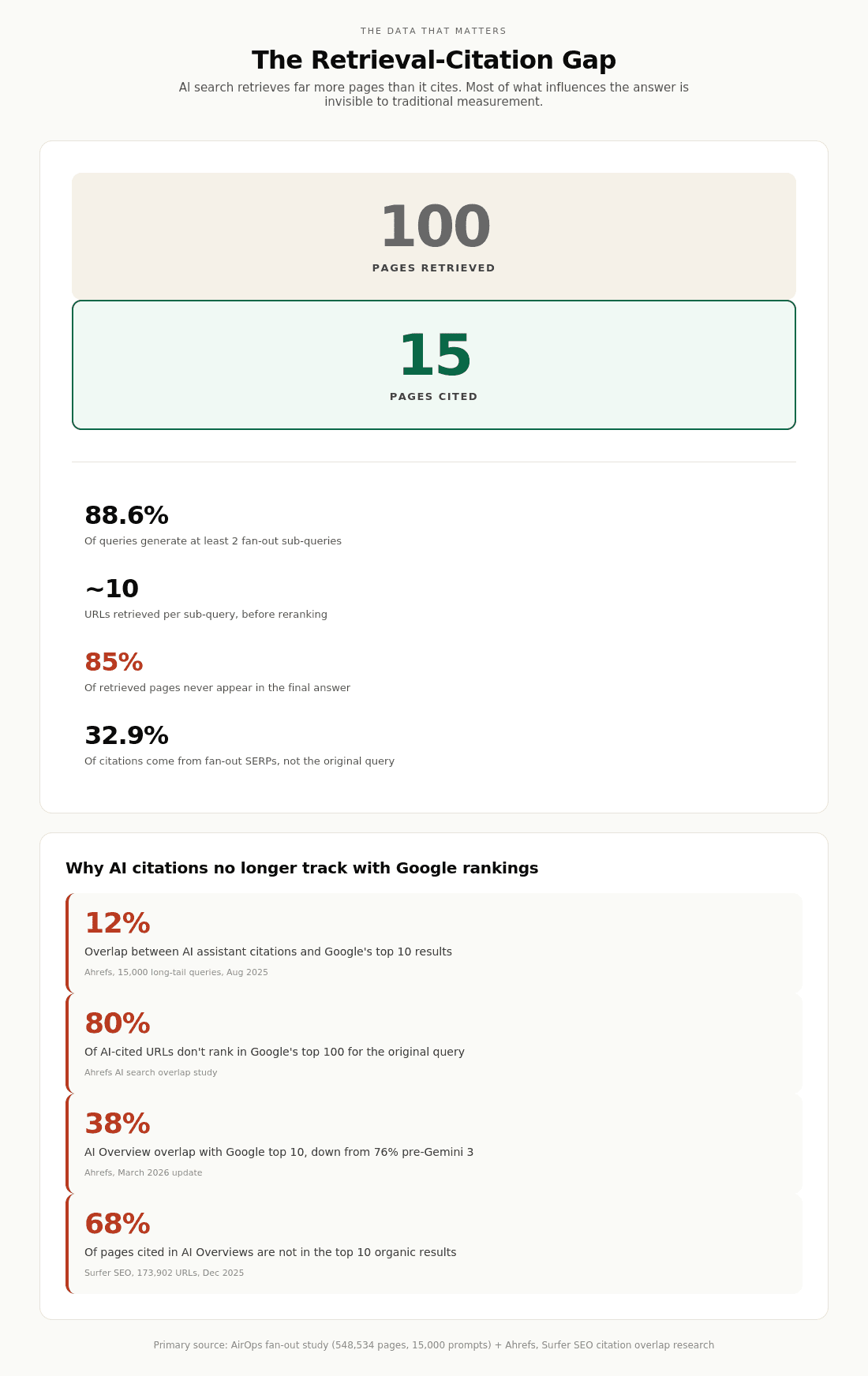

The retrieval-citation gap

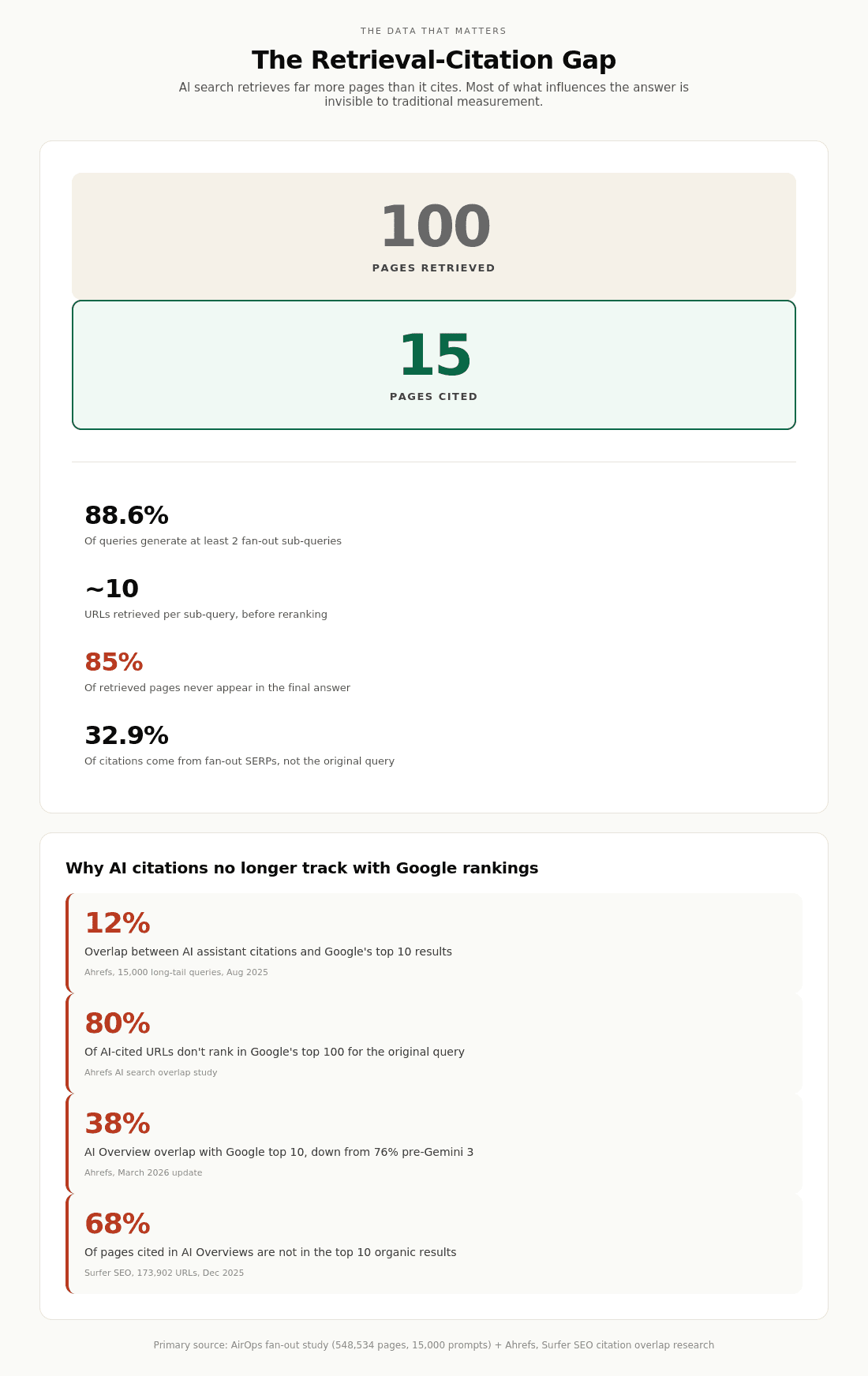

AirOps' 548K-page study ran 16,851 queries through ChatGPT three times each, capturing every fan-out sub-query, every retrieved URL, every citation made, and every page scraped. The key findings:

ChatGPT retrieves approximately 10 URLs per sub-search, reads through them, and then cites only about 15% in the final response.

Roughly 32.9% of cited pages appeared only in SERPs for fan-out sub-queries, not the original prompt. That last finding matters strategically. About a third of citation opportunities are invisible if you only track head terms.
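
The two figures above come down to simple set bookkeeping. The sketch below shows one way to compute them for a single prompt, assuming you have already logged the retrieved URLs per search and the cited URLs. The function name, argument layout, and sample URLs are hypothetical, and AirOps' actual methodology may differ.

```python
def citation_stats(original_serp, subquery_serps, cited):
    """Return (citation_rate, share_only_in_subquery_serps) for one prompt.

    original_serp:  set of URLs retrieved for the original prompt
    subquery_serps: list of sets of URLs, one set per fan-out sub-query
    cited:          set of URLs cited in the final answer
    """
    retrieved = set(original_serp).union(*subquery_serps)
    cited_retrieved = set(cited) & retrieved
    citation_rate = len(cited_retrieved) / len(retrieved) if retrieved else 0.0
    # Cited pages that never appeared in results for the original prompt.
    only_sub = cited_retrieved - set(original_serp)
    share_only_sub = len(only_sub) / len(cited_retrieved) if cited_retrieved else 0.0
    return citation_rate, share_only_sub


# Hypothetical log for one prompt with two fan-out sub-queries.
original = {"u1", "u2", "u3", "u4", "u5", "u6"}
sub_serps = [{"u5", "u6", "u7", "u8"}, {"u9", "u10", "u1"}]
cited_urls = {"u1", "u2", "u9"}

rate, only_sub_share = citation_stats(original, sub_serps, cited_urls)
print(f"citation rate: {rate:.0%}, cited only via sub-queries: {only_sub_share:.0%}")
```

In this toy log, one of the three cited pages is reachable only through a sub-query, which is the kind of opportunity head-term tracking would miss.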

The ranking-citation decoupling

Ahrefs' August 2025 study of 15,000 long-tail queries found only 12% overlap between AI assistant citations and Google's top 10. Eighty percent of cited URLs do not rank in Google's top 100 for the original query. Their March 2026 update on AI Overviews specifically found that top-10 overlap dropped from 76% to 38% after Gemini 3's rollout.

Fan-out queries themselves are unstable

Only 27% of fan-out keywords remain consistent across repeated searches for the same prompt. Sixty-six percent appear only once, according to data aggregated by Passionfruit research in 2026. This means fan-out "keyword lists," the core output of most tools in this space, are snapshots of probabilistic generation, not stable optimization targets.
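
A quick way to see this instability in your own data is to run the same prompt several times and count how often each fan-out query recurs. The sketch below assumes "consistent" means "appears in every run", which is only one possible definition; the source does not say how the 27% figure was computed. The function name and sample queries are hypothetical.

```python
from collections import Counter


def stability(runs):
    """Summarize how often each fan-out query recurs across repeated runs.

    runs: list of lists of fan-out query strings, one list per run
    Returns (share_in_every_run, share_in_exactly_one_run) over unique queries.
    """
    counts = Counter()
    for run in runs:
        # Normalize and de-duplicate so each query counts once per run.
        counts.update({q.strip().lower() for q in run})
    if not counts:
        return 0.0, 0.0
    total = len(counts)
    in_all = sum(1 for n in counts.values() if n == len(runs))
    in_one = sum(1 for n in counts.values() if n == 1)
    return in_all / total, in_one / total


# Hypothetical fan-out queries captured from three runs of the same prompt.
runs = [
    ["best crm pricing", "crm integrations", "crm reviews 2026"],
    ["best crm pricing", "crm for small business", "crm integrations"],
    ["best crm pricing", "top crm tools compared", "crm free trial"],
]
consistent, one_off = stability(runs)
print(f"in every run: {consistent:.0%}, seen once: {one_off:.0%}")
```

If most queries land in the "seen once" bucket, a single exported keyword list is a sample from a distribution rather than a fixed target.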

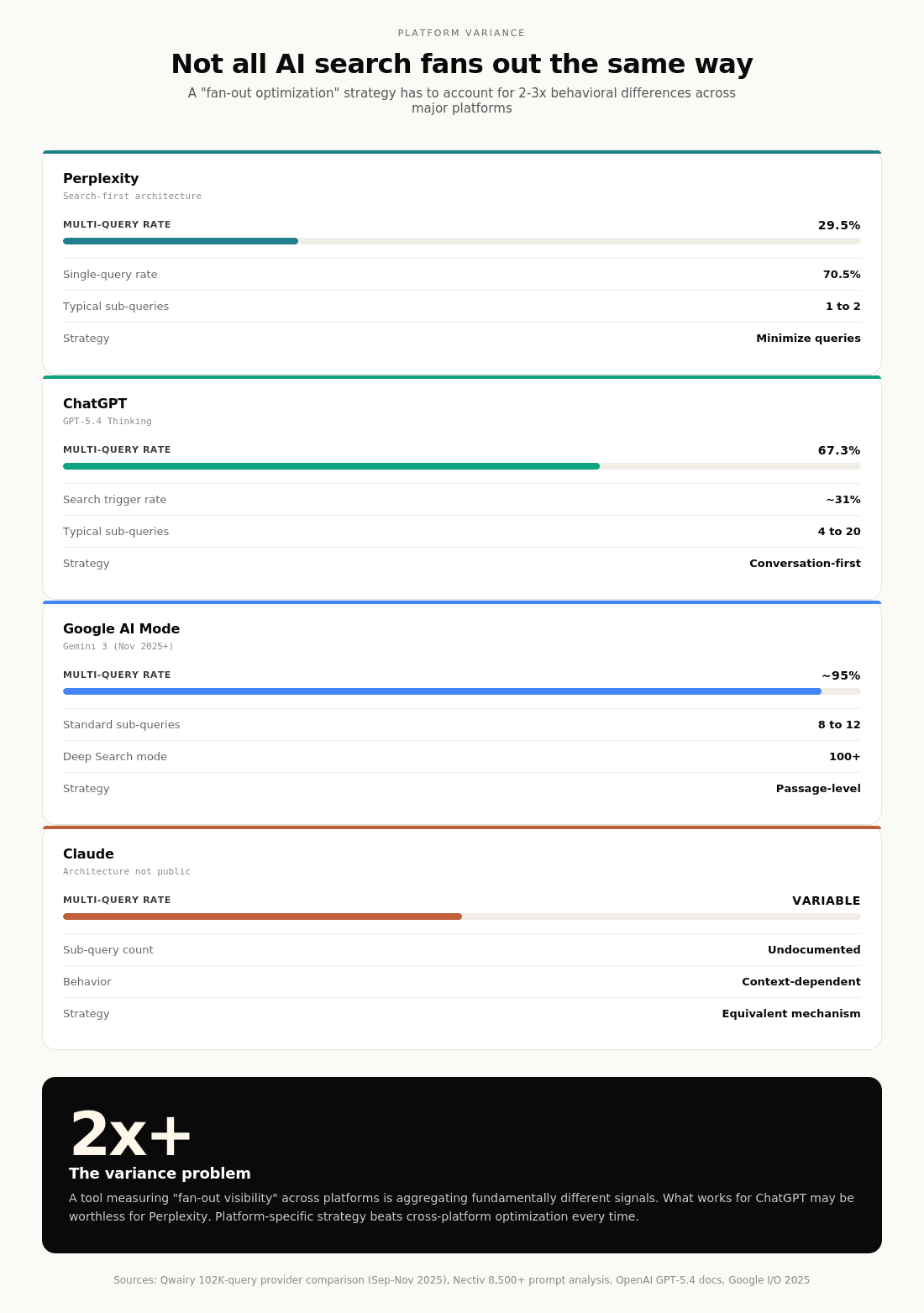

Platform variance is severe

| Platform | Multi-Query Rate | Typical Sub-Query Count | Retrieval Strategy |
|---|---|---|---|
| Perplexity | 29.5% | 1 to 2 (70.5% single-query) | Search-first, minimize queries |
| ChatGPT (GPT-5.4) | 67.3% | 4 to 20 depending on complexity | Conversation-first, exploratory |
| Google AI Mode (Gemini 3) | ~95% | 8 to 12 standard; 100s for Deep Search | Passage-level retrieval |
| Claude | Variable | Architectural details not public | Equivalent mechanism |

The behavioral data is genuinely Tier 1 evidence that fan-out reshapes retrieval. Where commercial overclaim begins is in the leap from "fan-out exists and changes what gets retrieved" to "marketers should optimize for fan-out as a distinct discipline." That leap is where the evidence breaks.

The Tactical Advice Stress Test

This is where the buzzword problem lives. The industry has generated a coherent-sounding set of tactical recommendations around fan-out optimization. Nearly all of them collapse when tested against the best available evidence.

The canonical playbook

As sold by vendors and agencies, the typical fan-out optimization playbook includes:

Use fan-out tools (Qforia, Semrush Query Fan-Out Analysis, Otterly.AI, Wellows, Locomotive Agency, QueryBurst) to identify sub-queries for your target topics

Build "ultimate guides" or pillar pages that cover every fan-out sub-query

Create topic clusters with one cluster page per sub-query

Write headings that match fan-out query phrasing

Monitor fan-out coverage as a KPI

Track fan-out "visibility" across platforms

The study that breaks the playbook

The most authoritative test of this playbook is Kevin Indig and AirOps' April 13, 2026 study of 815,484 query-page pairs across 16,851 queries and 353,799 pages, the largest controlled analysis of fan-out optimization ever published. The headline finding, in Indig's own words: "Fanout coverage is nearly irrelevant to citation rates."

The Wikipedia exception

Wikipedia deserves attention because it is the one case where comprehensive coverage works. Wikipedia has the worst retrieval rank in Indig's dataset (median 24) and the lowest query match score (0.576), yet achieves the highest citation rate at 59%. Pages average 4,383 words, 31 lists, and 6.6 tables. This is density working as a signal, but at a scale no publisher can replicate. A 3,000-word corporate blog post with 15 subheadings is not Wikipedia and will not get treated like Wikipedia.

The Semrush counterexample and what it actually proves

The contrast with Semrush's own published fan-out experiment is illuminating. Semrush updated four blog articles to address fan-out sub-queries and reported a "150% increase in citations" (from 2 to 5 citations total across the four articles). This is the most-cited "proof" that fan-out optimization works, but the study has methodological problems that Semrush itself partially acknowledged.

Sample size: four articles. Duration: one month. Controls: none. Meanwhile, "brand mentions dropped, share of voice slipped, and AI visibility proved far from predictable" during the same period, which Semrush attributed to "erratic behavior of AI platforms." The lead author's honest summary: "Just know you're playing on unstable ground. Today's AI may cite you, ignore you tomorrow, and reinvent its logic next week."
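
The arithmetic behind the headline number is worth spelling out, because it shows how sensitive a percentage is when the baseline is two citations. This is a minimal sketch; the function name is hypothetical, and the alternative outcomes (4 or 6 citations) are invented purely to show the sensitivity, not taken from the Semrush report.

```python
def percent_change(before: float, after: float) -> float:
    """Relative change from `before` to `after`, in percent."""
    if before == 0:
        raise ValueError("percent change is undefined when the baseline is 0")
    return (after - before) / before * 100


# The reported result: 2 citations before, 5 after.
print(percent_change(2, 5))

# Hypothetical alternatives: one citation more or fewer in the "after" count.
for after in (4, 5, 6):
    print(f"2 -> {after} citations: {percent_change(2, after):.0f}% increase")
```

With a baseline this small, a single citation gained or lost moves the headline figure by 50 percentage points, which is why the sample size matters more than the percentage.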

The claims ledger

| Tactical claim | Confidence tier | Evidence |
|---|---|---|
| "Covering fan-out queries increases citation rates" | Tier 3 (directional) | Semrush 4-article experiment (n=4, no controls); Surfer 161% correlation claim; directly contradicted by Indig/AirOps 815K-pair analysis showing fan-out coverage is "nearly irrelevant" |
| "Build ultimate guides covering 100% of sub-queries" | Tier 5 (actively wrong) | Indig/AirOps: 100% coverage underperforms 26-50% coverage; 500-2,000 words beats 5,000+ |
| "Track fan-out keywords as KPIs" | Tier 4 (untested to unworkable) | 66% of fan-out queries appear only once; 27% consistency across runs; QueryBurst directly acknowledges this is "tracking noise" |
| "Write headings that match fan-out sub-queries" | Tier 3 (directional) | Query match (to original query) matters; heading match to sub-queries is secondary |
| "Topic clusters beat individual pages" | Tier 2 (strong) | Indig's data shows focused pages win; Solis's cluster-based framework is consistent with broader topical authority literature; this predates fan-out discourse |
| "Fan-out is why AI citations don't overlap with Google rankings" | Tier 1 (structural) | Ahrefs 12%/38% studies; AirOps 32.9% of citations from sub-query SERPs; mechanistically validated |
| "Retrieval rank matters more than fan-out coverage" | Tier 1 (structural) | Indig/AirOps 58% vs 14% citation gap between positions 1 and 10; replicated across 815K pairs |
| "Platform-specific fan-out tracking lets you predict visibility" | Tier 4 | Qwairy provider variance data; Fishkin consistency findings apply |

What the tactical advice actually reduces to

The most damning observation is that most fan-out tactical advice reduces to either topic clusters (which predates fan-out terminology by a decade), topical authority (which has been SEO orthodoxy since 2015), or passage-level optimization (which is a natural consequence of BERT-era Google, not AI Mode). The QueryBurst team, despite selling their own fan-out tool, acknowledged this directly: "Any experienced SEO can predict them. Fan-out queries for 'best CRM software' will include pricing comparisons, integration lists, and reviews. This tool systematises what you already know."

Methodology issues across the category

What the tools can and cannot legitimately do

What they can do:

Surface plausible sub-queries for content planning

Suggest question-based heading structures

Highlight topical coverage gaps relative to competitors

Monitor citation frequency over time with appropriate caveats

What most tools cannot legitimately do, despite often claiming otherwise:

Report stable "fan-out rankings"

Predict which specific fan-out queries will trigger for a given prompt

Guarantee that optimizing for their identified sub-queries will increase citations

Provide measurement that meets basic statistical thresholds for interpretability

The verdict on the tool market is buyer-beware. Qforia, because it is free and open-source, is genuinely useful for research. The enterprise platforms offering more features at $200 to $2,000 per month are selling a real mechanism as a measurable optimization target when what they are actually measuring is a probabilistic retrieval pattern with wide uncertainty bounds.

What Marketers Should Actually Do

Given the evidence, the practical implications are narrower and less exciting than vendor decks suggest, but they are defensible.

The decision tree

The seven signals that actually predict AI citation

Per the Indig and AirOps 815K-pair analysis:

Retrieval rank. The single strongest predictor. Strong traditional SEO for ChatGPT (which primarily retrieves via Bing) and Google AI Mode (which uses Google's index) remains the foundation. This is not a separate discipline from SEO.

Heading-query match. Use H2/H3 headings that directly answer the user's likely question. In the study, pages with a heading-query cosine similarity of 0.90 or higher were cited 41% of the time, versus 30% for pages below 0.50.

Content structure. Front-load definitive answers in the first 30% of the page (Indig's "ski ramp" finding: 44.2% of ChatGPT citations come from the first third of content). Build entity-rich passages (20.6% entity density in cited content versus 5 to 8% baseline). Definitive language ("X is Y") roughly doubles citation rates over hedging language.

Content length. Aim for 500 to 2,000 words for articles. Longer is not better. Focused beats comprehensive except at Wikipedia scale.

Brand authority and third-party presence. Mentions on Reddit, G2, LinkedIn, Wikipedia, and in published news correlate more strongly with AI citation than backlinks do. Build presence where AI crawlers retrieve from, not just on your own domain.

Content freshness. AirOps' data shows pages aged 30 to 89 days hit the highest citation rate (32.8%). Fresh content (less than 30 days) underperforms at 25.3%; new pages have not built retrieval signals yet. Pages older than 2 years drop to 27.5%. The sweet spot is "recently updated but not brand new."

Technical crawler accessibility. No AI crawler (GPTBot, ChatGPT-User, OAI-SearchBot, ClaudeBot, PerplexityBot, Google-Extended) renders JavaScript. Client-side-rendered content is invisible to AI search. Check your robots.txt configuration for each crawler individually; blocking one does not block the others.
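
The per-crawler robots.txt check in the last item can be scripted with Python's standard library. The sketch below parses a robots.txt body and reports, for each user agent named above, whether a given URL may be fetched. The sample robots.txt, the example.com URL, and the `crawler_access` helper are assumptions for illustration; to audit a real site you would supply its actual robots.txt content.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens listed in the text above.
AI_USER_AGENTS = [
    "GPTBot",
    "ChatGPT-User",
    "OAI-SearchBot",
    "ClaudeBot",
    "PerplexityBot",
    "Google-Extended",
]

# Hypothetical robots.txt that blocks one crawler and allows everything else.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""


def crawler_access(robots_txt: str, url: str, agents=AI_USER_AGENTS) -> dict:
    """Map each user agent to True/False: may it fetch `url` under these rules?"""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


access = crawler_access(SAMPLE_ROBOTS_TXT, "https://example.com/pricing")
for agent, allowed in access.items():
    print(f"{agent:16} {'allowed' if allowed else 'blocked'}")
```

In this sample, only GPTBot is blocked; the other agents fall through to the wildcard rule and remain allowed. The check covers robots.txt rules only and says nothing about whether the page content depends on JavaScript rendering.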

The Final Verdict

Query fan-out as mechanism: Tier 1 real. Document it. Understand it. Use it to explain to stakeholders why AI citations no longer track with Google rankings.

Query fan-out as marketing terminology: partially legitimate. It describes a real phenomenon, and the term has stuck in industry discourse because it names something that needed a name. The issue is not the term; it is what is being bolted onto it.

Query fan-out as an optimization paradigm: mostly buzzword. The tactics being sold as "fan-out optimization" (chase sub-queries, build ultimate guides, track fan-out visibility, pay for fan-out analysis tools) are largely repackaged topic cluster and topical authority strategies with a new label that implies more science than exists. The best available controlled research (Indig/AirOps 2026) shows fan-out coverage is nearly irrelevant to citation rates; retrieval rank and heading-query match dominate. The second-best research (Semrush's four-article experiment) is underpowered and the lead author's honest summary was "you're playing on unstable ground." The tools built to measure fan-out visibility rest on probabilistic generation that cannot be tracked with the precision vendors claim.

The honest industry reckoning

"Fan-out optimization" is the latest iteration of a perennial SEO pattern: identify a real mechanism, overclaim actionability, sell tools, repeat when the next mechanism emerges. This happened with semantic search, with entity SEO, with E-E-A-T, with topical authority. Each named a real phenomenon. Each generated a commercial ecosystem. Each produced more tools and fewer causal insights than the marketing suggested. Fan-out is not uniquely dishonest. It is running the same playbook.

What to watch going forward

GPT-5.5 and Gemini 4 behavior changes (both expected in 2026)

Academic controlled experiments isolating fan-out effects (currently absent from the literature)

Any first-party disclosure from OpenAI, Google, or Anthropic about retrieval candidate sets and reranker signals

If a lab publishes evidence that specific fan-out tactics cause measurable citation changes with proper controls, the verdict updates. Until then, the evidence supports the split verdict: mechanism real, optimization paradigm oversold.

Bottom line for marketers making resource allocation decisions today

Invest in content and brand fundamentals that correlate with AI visibility across all studies. Skip tools that sell measurement the underlying statistics do not support. Treat "query fan-out" as useful vocabulary, not a mandate to purchase a new platform. If someone tells you your competitors are "winning at fan-out optimization" and you are losing, ask them for the controlled experiment showing causal lift. If they cannot produce it, they are selling buzzword.

Fan-out is real. Fan-out optimization, as currently packaged and sold, mostly is not.

Sources

Report based on primary research from:

Aggarwal et al. (KDD 2024, arXiv 2311.09735)

Google I/O 2025 announcements (Elizabeth Reid)

Google patents US20240289407A1, US12158907B1, US11663201B2

OpenAI GPT-5.4 documentation (March 2026)

Kevin Indig and AirOps "The Fan-Out Effect" (April 2026, 815,484 query-page pairs)

Kevin Indig "Science of How AI Pays Attention" (February 2026, 1.2M ChatGPT answers)

Ahrefs AI citation overlap studies (August 2025, March 2026)

AirOps fan-out retrieval study (548,534 pages)

Semrush fan-out optimization experiment (2025)

Qwairy 102K-query provider comparison (Q3 2025)

SparkToro + Gumshoe.ai consistency study (January 2026)

Sielinski "Quantifying Uncertainty in AI Visibility" (arXiv 2603.08924, March 2026)

Surfer SEO 173,902 URL analysis (December 2025)

Nectiv 8,500+ prompt analysis

Writesonic GPT-5.4 behavior study

iPullRank Qforia tool documentation

Vendor documentation from Semrush, Otterly.AI, Wellows, QueryBurst, Locomotive Agency, WordLift

Commentary from Aleyda Solis, Marie Haynes, Mike King, Kevin Indig, Rand Fishkin, and Barry Schwartz

Published by Passionfruit — SEO, GEO, and AI search optimization backed by evidence, not hype.