Why AI Citations Might Not Be the Best Visibility Metric to Track for AI Search

Why $100M+ in AI Tracking Spend Is Built on Broken Metrics, and What Informed Brands Are Doing Instead

Your AI visibility vendor just sent you a dashboard showing your brand ranks #4 in ChatGPT for "best B2B marketing platform." Your competitor ranks #2. You're about to brief your team on closing the gap.

Before you do, run that same prompt yourself. Then run it again. And again.

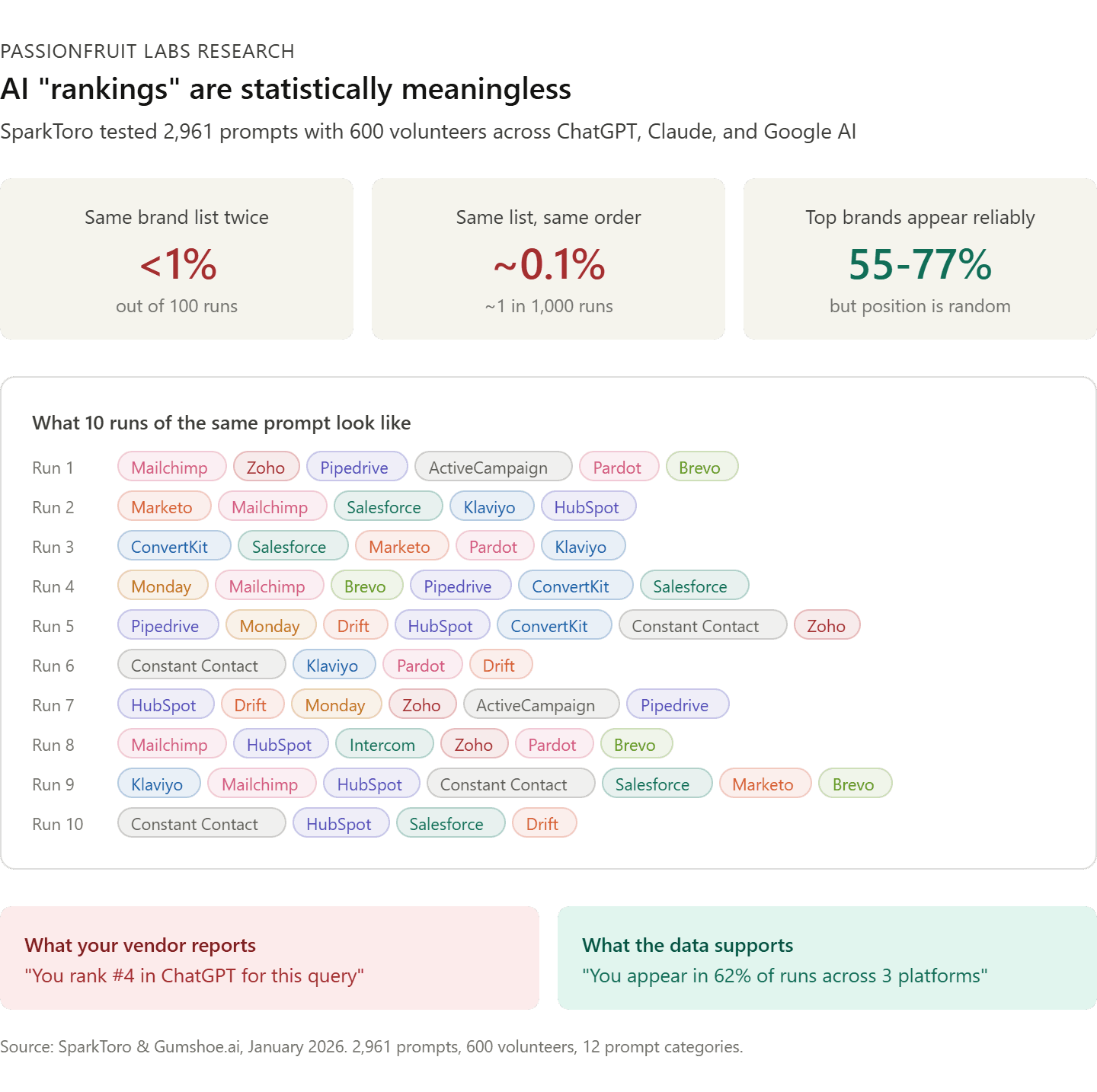

You will get a different list of brands nearly every time. Different brands. Different order. Different number of results. SparkToro tested this with 600 volunteers across 2,961 prompts. The odds of getting the same brand list twice from ChatGPT? Less than 1 in 100. The same list in the same order? 1 in 1,000.

That "#4 ranking" your vendor reported was a snapshot of a single run of a single prompt on a single platform. It is not a position. It is not a trend. It is a random sample of one from a distribution that changes with every query.

Our review examines the evidence behind AI citation metrics, synthesizing 25+ major research efforts, most of them published in the past 90 days, covering hundreds of millions of citations across ten AI platforms. The findings are clear: the measurement infrastructure the industry has built is not measuring what it claims to measure. And the companies that understand this first will have a significant strategic advantage over those still chasing phantom rankings.

What We Found

We reviewed the major AI citation and visibility studies available as of March 2026. Most were published between January and March 2026 (publication dates are listed under Key Sources). The combined evidence covers 2.2M+ ChatGPT responses, 548,000+ retrieved pages, 325,000+ prompts, 89,000+ LinkedIn URLs, 680M+ citations tracked by Profound, 23,000+ branded citations analyzed by Omniscient Digital via Peec AI, 1M+ AI Overviews studied by Writesonic, the 615x platform variance documented by Superlines, controlled experiments with 600+ human volunteers, and fresh consumer behavior data from Similarweb covering 113 brands across 6 sectors. These are the six findings that matter most for B2B SaaS marketing leaders.

Finding 1: AI "rankings" are statistically meaningless

SparkToro and Gumshoe.ai ran the largest public test of AI recommendation consistency ever conducted. 600 volunteers. 12 prompt categories. 3 platforms (ChatGPT, Claude, Google AI). Nearly 3,000 total runs.

The result: less than 1 in 100 chance of the same brand list appearing twice. Less than 1 in 1,000 chance of the same list in the same order.

The top brands in each category did appear reliably across many runs (55-77% of the time for leaders like Sony, Bose, and Apple in headphones). But their position within the list was effectively random. Rand Fishkin's conclusion was direct: "any tool that gives a 'ranking position in AI' is full of baloney."

What this means for you: If your AI tracking vendor reports ranking positions, you are paying for noise. Visibility percentage (how often you appear across 60-100+ runs) is the only citation metric with statistical validity. If your vendor runs each prompt fewer than 60 times before reporting, the data is not meaningful.
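To see why the number of runs matters, here is a minimal sketch that puts a 95% Wilson score interval around an observed visibility percentage. It assumes each prompt run is an independent sample, which is a simplification, and the 60% appearance rate is a hypothetical brand, not a figure from the SparkToro study.

```python
import math


def wilson_interval(appearances: int, runs: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for an appearance rate (z=1.96 gives roughly 95%)."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    p = appearances / runs
    denom = 1 + z**2 / runs
    centre = (p + z**2 / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


# Hypothetical brand that appears in about 60% of runs, measured at three sample sizes.
for runs in (10, 60, 100):
    appearances = round(0.6 * runs)
    low, high = wilson_interval(appearances, runs)
    print(f"{runs:>3} runs: {appearances / runs:.0%} observed, plausible range {low:.0%} to {high:.0%}")
```

The interval at 10 runs is far wider than at 60 or 100, which is the practical reason a vendor report built on a handful of runs cannot separate a real change in visibility from sampling noise.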

Finding 2: Being cited is not the same as being recommended

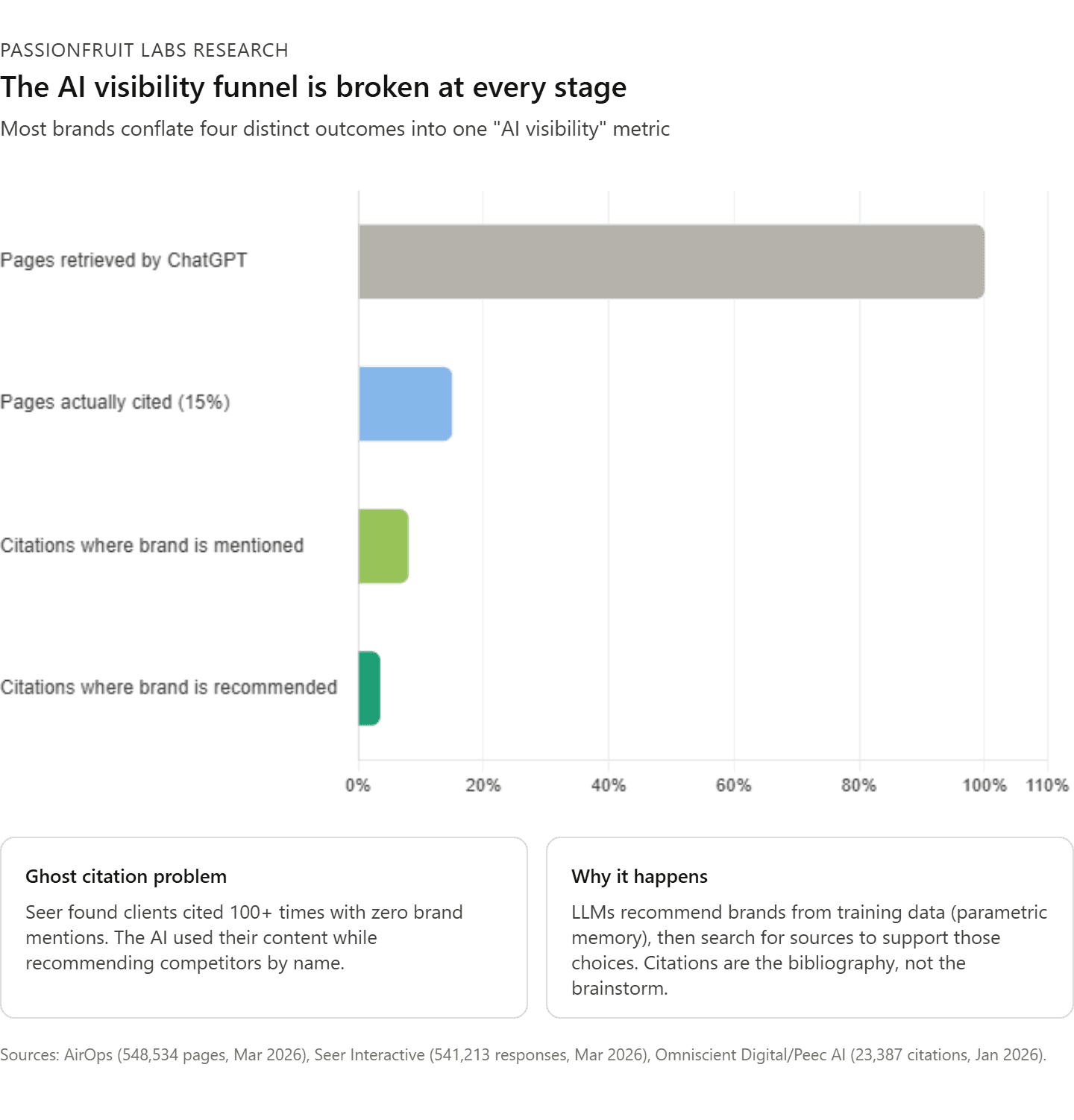

Seer Interactive analyzed 541,213 LLM responses across 20 brands, 6 AI platforms, and 5 funnel stages. They discovered what they call "ghost citations": your URL appears as a source in the response, but your brand is never mentioned or recommended. The AI used your content as reference material while recommending your competitor by name.

In one case, a client's blog post was cited over 100 times in 25 days with zero brand mentions. Every citation was a footnote supporting a factual claim. The AI extracted the insight but not the brand. The client modified the content to embed brand language. After 29 days, brand mentions remained at zero.

Seer's hypothesis, supported by six independent behavioral tests across 362,388 responses: citations are post-hoc. The LLM decides which brands to recommend first (from training data), then searches for sources to support those choices. The citation is the bibliography, not the brainstorm.

What this means for you: A rising citation count may mean your content is becoming more useful to AI systems while your competitors capture the actual recommendations. If you are not tracking the distinction between citations (URL linked), mentions (brand named), and recommendations (brand actively suggested), you are measuring the wrong thing.

Finding 3: 85% of content AI retrieves is never shown to users

AirOps analyzed 548,534 pages retrieved by ChatGPT across 15,000 prompts. Only 15% of those pages appeared as citations in the final response. The other 85% were found, evaluated, and discarded.

This means a brand can be technically discoverable by ChatGPT and still have zero visible presence in the answer the user actually reads. AirOps' 2026 State of AI Search report adds further context: roughly 60% of AI Overview citations come from URLs not ranking in the top 20 organic results. And pages ranking #1 on Google are cited by ChatGPT just 43.2% of the time, which is 3.5x higher than pages outside the top 20, but still means more than half of top-ranked pages go uncited.

The study also revealed that ChatGPT expands most prompts with follow-up "fan-out" searches. 89.6% of prompts triggered two or more internal searches, expanding 15,000 original prompts to 43,233 queries. And 95% of those fan-out queries had zero monthly search volume by any traditional keyword metric.
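The AirOps figures above imply a few derived numbers that are easy to check. The sketch below only does arithmetic on the reported totals; the per-prompt averages are our own back-of-envelope derivations, not figures AirOps published, and the 15% citation share is a rounded input.

```python
# Totals as reported in the AirOps fan-out study.
original_prompts = 15_000
fanout_queries = 43_233
retrieved_pages = 548_534
cited_share = 0.15  # rounded share of retrieved pages that were cited

queries_per_prompt = fanout_queries / original_prompts
pages_per_prompt = retrieved_pages / original_prompts
cited_pages = retrieved_pages * cited_share
discarded_pages = retrieved_pages - cited_pages

print(f"Average searches per prompt: {queries_per_prompt:.2f}")
print(f"Average pages retrieved per prompt: {pages_per_prompt:.1f}")
print(f"Approximate pages cited: {cited_pages:,.0f}")
print(f"Approximate pages retrieved but never shown: {discarded_pages:,.0f}")
```

In other words, each prompt pulls in dozens of pages on average, and the large majority never reach the answer the user reads.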

Writesonic's research on 1M+ AI Overviews confirms the pattern from a different angle: there is an 81.1% chance that at least one URL from Google's top 10 will be cited in an AI Overview, but ranking #1 gives only a 33.07% chance of citation. The relationship between SERP position and AI citation exists, but it is far weaker and less predictable than the industry assumes.

What this means for you: The most valuable citation opportunities are invisible to standard keyword tracking tools. Retrieval does not equal citation. Discovery does not equal visibility. If your AI strategy is built on the assumption that ranking well for known keywords translates to AI citations, you are optimizing for a fraction of the actual search surface.

Finding 4: The citations themselves are frequently wrong

Nature Communications published a peer-reviewed study evaluating 7 LLMs on 800 medical questions with 58,000 statement-source pairs. Between 50% and 90% of LLM responses were not fully supported by the sources they cited. Even GPT-4o with Web Search had approximately 30% of individual statements unsupported by their own citations. Meanwhile, 90%+ of answers were presented as "very confident."

This is not limited to medicine. The structural problem applies everywhere: LLMs generate plausible text and then attach sources that seem relevant, without verifying that the source actually supports the specific claim.

What this means for you: Tracking citation frequency without evaluating citation accuracy creates a false sense of measurement rigor. Your brand could be cited frequently in contexts that misrepresent your product, amplify outdated information, or contradict your positioning. Seer Interactive documented exactly this: a client's LLM-generated brand description included "high account manager turnover" sourced from a single outdated review, repeated in 67 out of 1,152 branded prompt outputs over three months.

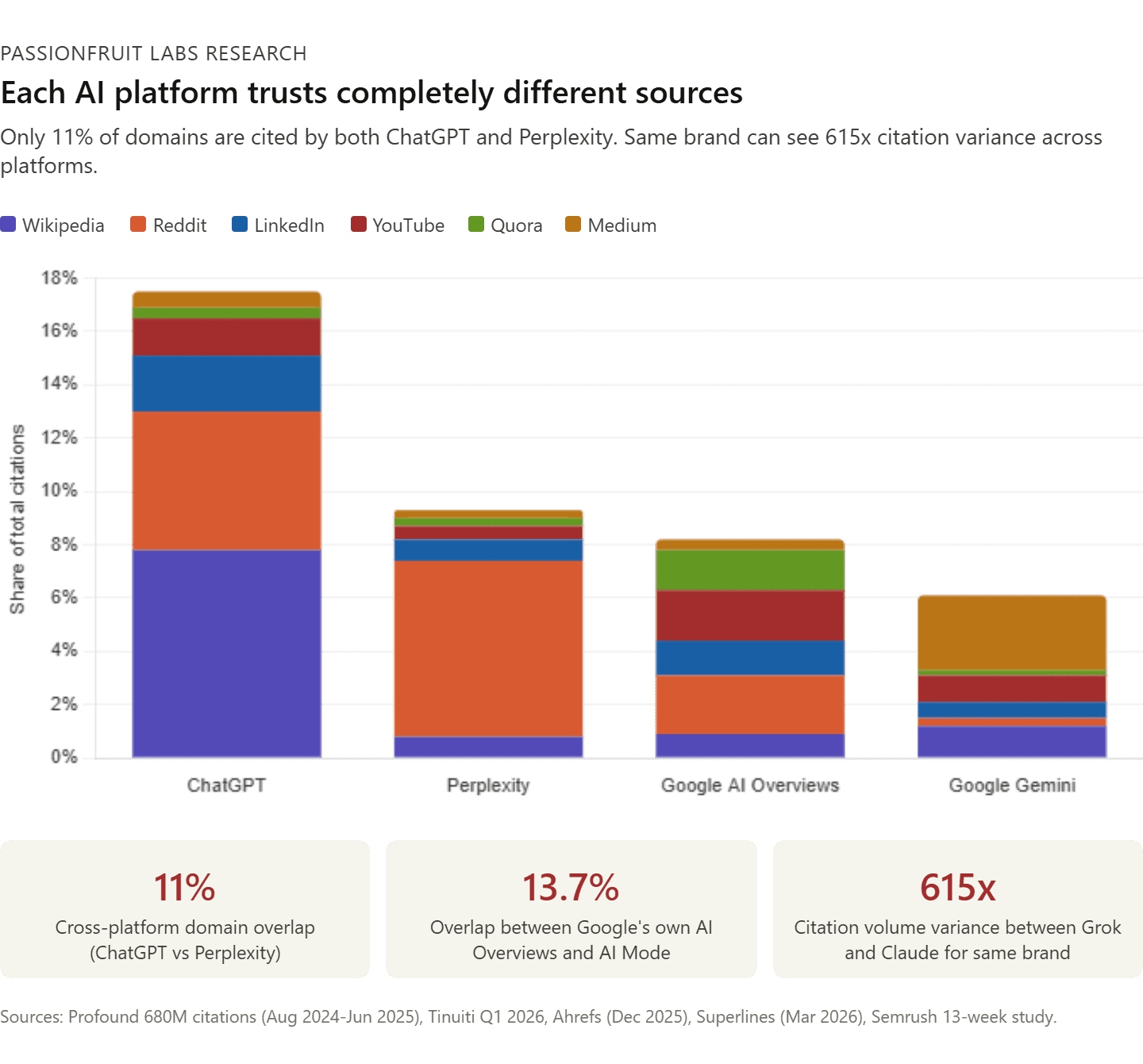

Finding 5: Each AI platform uses completely different sources

This is where measurement breaks down at the platform level. Multiple independent datasets now quantify the scale of disagreement between AI systems.

The cross-platform gap is massive. Only 11% of domains are cited by both ChatGPT and Perplexity (Ahrefs). Even within Google's own products, only 13.7% of citations overlap between AI Overviews and AI Mode. Superlines' March 2026 data found the same brand can see citation volumes differ by 615x between Grok and Claude. This is not a rounding error. It is a structural feature of how different AI architectures select sources.
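You can measure this kind of overlap on your own prompt set. The sketch below computes the share of distinct cited domains that appear on both platforms (a Jaccard index). This is one reasonable definition, not necessarily the one Ahrefs used, and the domain sets are hypothetical.

```python
def shared_domain_share(domains_a: set[str], domains_b: set[str]) -> float:
    """Share of all distinct cited domains that show up on both platforms."""
    union = domains_a | domains_b
    if not union:
        return 0.0
    return len(domains_a & domains_b) / len(union)


# Hypothetical cited domains collected from the same prompts on two platforms.
chatgpt_domains = {"wikipedia.org", "linkedin.com", "g2.com", "forbes.com"}
perplexity_domains = {"reddit.com", "g2.com", "youtube.com", "capterra.com"}

share = shared_domain_share(chatgpt_domains, perplexity_domains)
print(f"Domains cited by both platforms: {share:.0%}")
```

A low number here means a citation score from one platform tells you little about your presence on the other.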

Profound's 680-million-citation dataset (August 2024 to June 2025) provides the most granular platform-by-platform breakdown available:

ChatGPT: Wikipedia accounts for 7.8% of all citations. Among its top 10 sources, Wikipedia holds 47.9% of relative share. Commercial (.com) domains dominate at over 80% of all citations.

Perplexity: Reddit is its leading source at 6.6% of total citations, with a relative share of 46.7% among its top 10. In January 2026, 24% of all Perplexity citations came from Reddit alone (Tinuiti Q1 2026).

Google AI Overviews: More distributed, with Reddit (2.2%), YouTube (1.9%), Quora (1.5%), and LinkedIn (1.3%) all prominent. Reddit accounted for 44% of all social citations.

Google Gemini: Strong preference for Medium and first-party websites, with Reddit representing a much smaller share (0.1% of responses).

Microsoft Copilot: Shows distinctive citation preferences, often surfacing sources that do not appear in Google or ChatGPT results at all.

Tinuiti's Q1 2026 AI Citation Trends Report, tracking high commercial-intent prompts across nine verticals and seven AI platforms over four months, concluded that "there is no universal top source. There are only patterns shaped by intent, platform, and category."

Reddit's concentrated influence on B2B SaaS: Reddit citation share grew at least 73% across commercial categories between October 2025 and January 2026 (Tinuiti). But when LLMs do cite Reddit, it is increasingly the only source: sole-source citations rose 31% (Conductor). Profound's data shows that citation rates for positive (5%) and negative (6.1%) brand sentiment on Reddit are nearly identical. LLMs do not filter for constructive feedback. They index raw, unmoderated opinion with a slight bias toward negative experience reports. A SaaS company that monitors its Gemini presence and sees no Reddit influence may be entirely unaware that ChatGPT is assembling its product evaluation from three-year-old subreddit threads.

LinkedIn's rapid ascent: Between November 2025 and February 2026, LinkedIn surged from outside the top 20 to approximately the #5 most-cited domain on ChatGPT (Profound, 1.4M citations tracked). Profound ranked it as the #1 most-cited domain for professional queries across all six major AI platforms. Semrush analyzed 325,000 unique prompts and identified 89,000 unique LinkedIn URLs being cited. 95% of cited LinkedIn content comes from original posts, not reshares. Articles between 800 and 1,200 words perform best.

YouTube now appears in 16% of LLM answers. YouTube mentions and branded web mentions are the top factors correlating with AI brand visibility across ChatGPT, Google AI Mode, and AI Overviews (Ahrefs, December 2025).

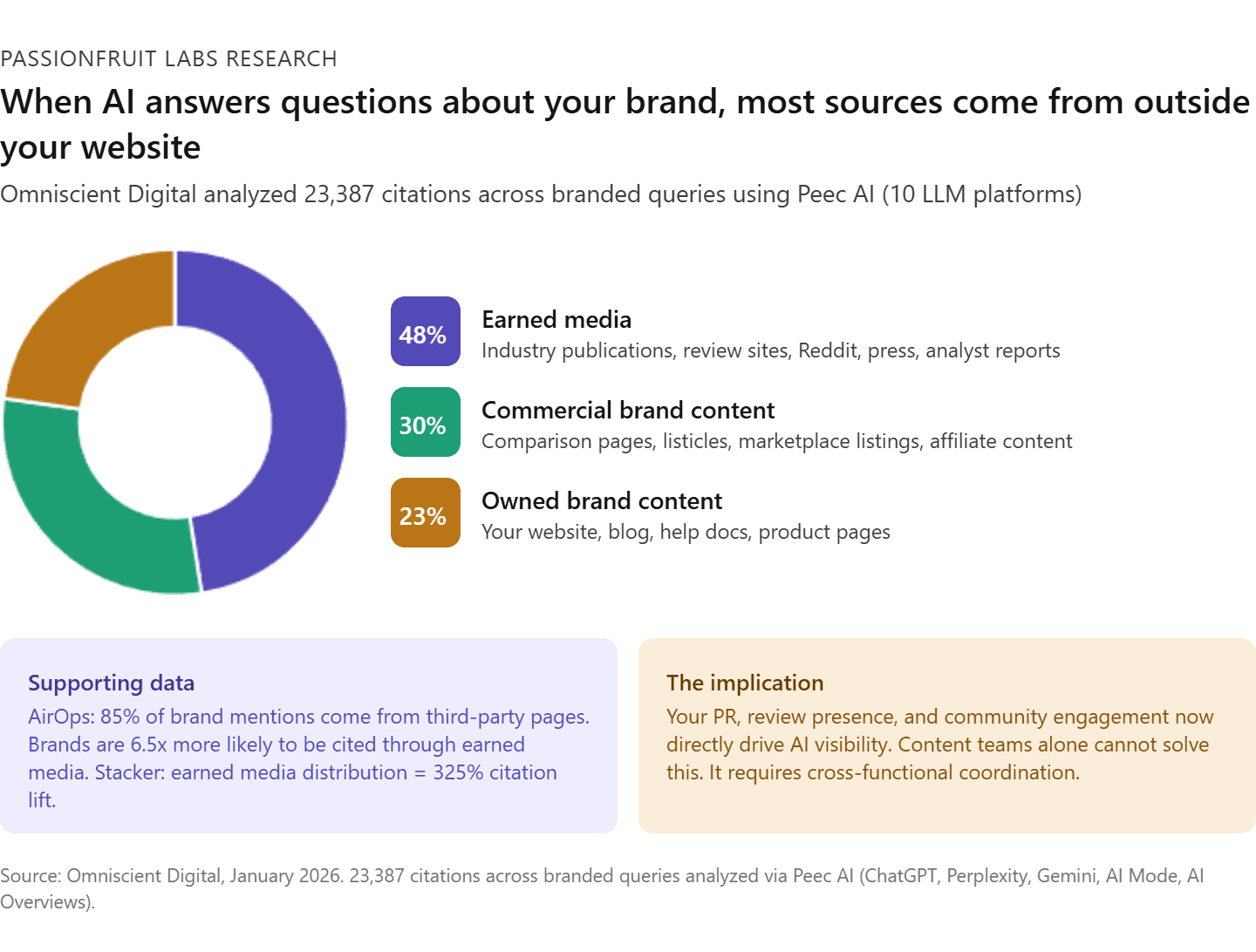

Where citations actually come from (the Omniscient/Peec AI analysis): Omniscient Digital analyzed 23,387 citations across hundreds of branded queries using Peec AI (which tracks 10 LLM platforms and distinguishes between content that was "used" to inform an answer versus content that was explicitly "cited" with a URL). For branded queries, earned media sources account for 48% of all citations, commercial brand content makes up 30%, and owned brand content holds just 23%. When someone asks an AI about your brand, most of what it references comes from outside your website.

What this means for you: A single-platform citation score is not a visibility score. It is a partial signal from one system whose sources barely overlap with the others: only 11% of domains are cited by both ChatGPT and Perplexity (Ahrefs). If your tracking vendor reports a composite "AI visibility" number, ask how they weight across platforms. If they cannot explain it, the number is arbitrary. Peec AI's distinction between "used" and "cited" content points to a deeper problem: many tracking tools cannot tell you whether your content informed an answer (invisible influence) or was visibly attributed (measurable citation). Both matter, but they require different measurement approaches.

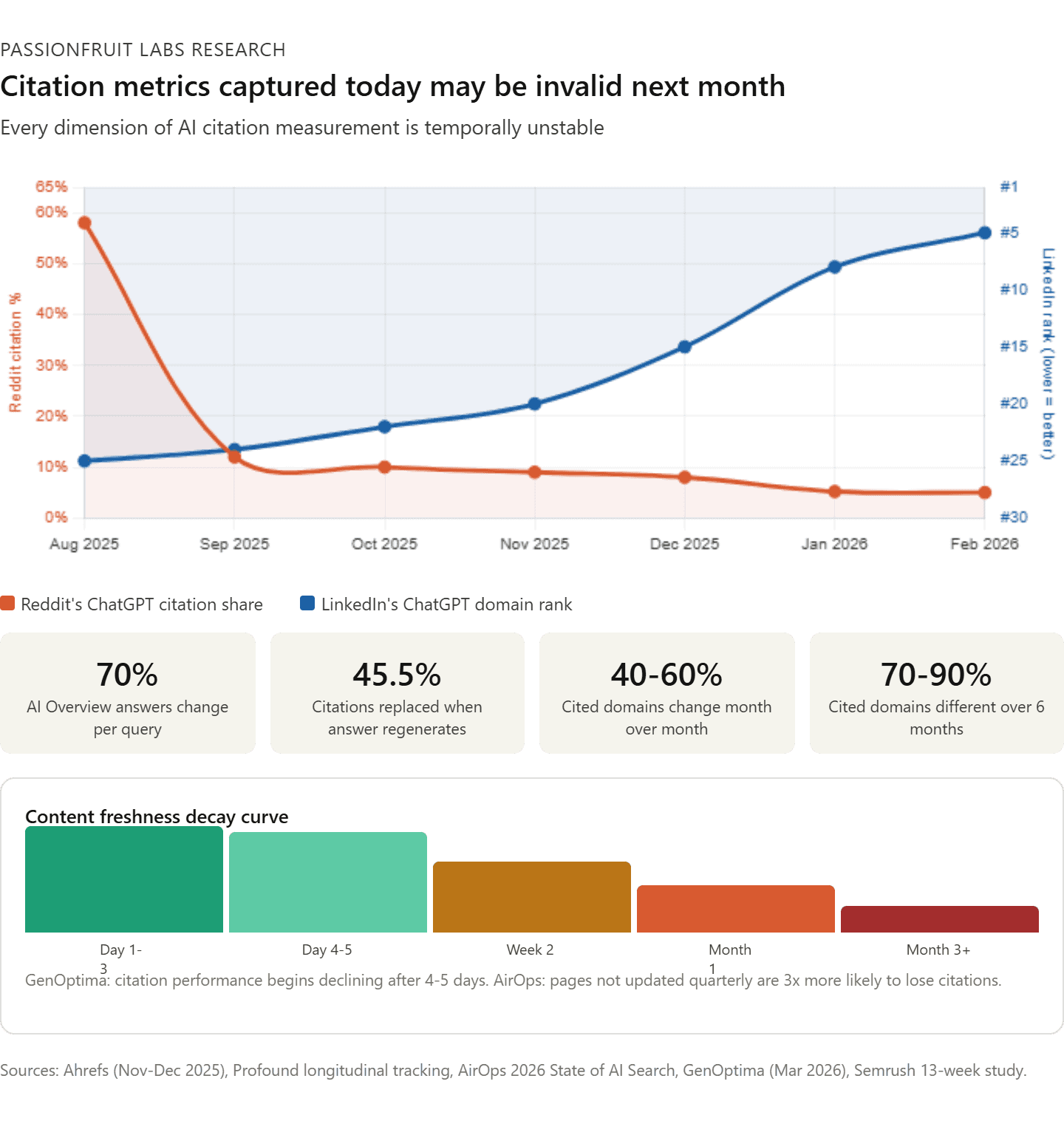

Finding 6: Citation patterns are temporally unstable

Ahrefs found that AI Overview content changes 70% of the time for the same query. When it generates a new answer, 45.5% of citations get replaced with new ones.

Profound's longitudinal tracking shows 40-60% of cited domains change month over month. Over six months, 70-90% of cited domains are completely different. AirOps found only 30% of brands remained visible between consecutive AI answers, and just 20% held presence across five consecutive runs. AirOps' 2026 State of AI Search report quantified the freshness requirement: pages that go more than three months without an update are over 3x more likely to lose visibility compared with recently refreshed pages. More than 70% of all pages cited by AI have been updated within the past 12 months.

GenOptima's monitoring data adds precision to the decay curve: newly published content can begin generating AI citations within three to five days, but citation performance typically begins declining after four to five days without content updates, a pattern consistent across all six major AI platforms they track. The most visible brands in competitive categories publish two or more structured content pieces per week.

The most dramatic example of platform-level instability: ChatGPT cited Reddit in nearly 60% of responses in early August 2025. By mid-September, that number collapsed to around 10% (Semrush 13-week tracking study). One platform-level change wiped out years of Reddit optimization for brands that had built their AI strategy around it. ChatGPT also dropped brand mention counts from six to seven per answer down to three to four after an October 2025 algorithm update (Profound).

This is not just a measurement problem. It is becoming a channel-scale problem. Conductor's 2026 benchmarks found AI referral traffic accounts for 1.08% of all website traffic, growing roughly 1% month over month, with ChatGPT driving 87.4% of that traffic. Similarweb's 2026 GenAI Brand Visibility Index found that 35% of US consumers now use AI tools for product discovery versus 13.6% who use search engines. At the evaluation and comparison stage, AI holds a 32.9% to 15% advantage. The audience is real and growing. The measurement is not keeping up.

What this means for you: Any AI visibility metric captured today may be invalid next month. Not because your strategy changed, but because the platform's retrieval behavior changed. This is not a measurement limitation you can solve with better tools. It is a structural feature of how these systems work. Measuring less often than monthly, on a single platform, or with fewer than 60 prompt runs per query does not capture meaningful signal.

The Expert Disagreement: What Actually Drives AI Visibility?

The industry's leading researchers do not agree on what signals matter most for AI citation and recommendation outcomes. These disagreements are not academic. They directly determine where you invest.

The brand signal camp

Growth Memo's analysis found brand popularity (measured by search volume) has the highest correlation with LLM mentions (0.334 coefficient). Digital Bloom confirmed this. Seer Interactive found brand search volume is the second-strongest non-organic ranking signal (0.18 correlation). The argument: invest in brand, and AI visibility follows.

The content extractability camp

The Princeton/Georgia Tech GEO study found structured content increases AI visibility by up to 40%. Adding authoritative outbound citations produced a 115% visibility increase for mid-ranked sites.

AirOps' 2026 State of AI Search report grounds this with large-scale structural data: 68.7% of pages cited in ChatGPT follow logical heading hierarchies (H1, H2, H3 in proper sequence). 87% of cited pages use a single H1 as the primary anchor. Pages combining schema markup and list formatting show 2.8x higher citation rates. About 61% of cited pages use three or more schema types, correlating with a 13% higher citation likelihood. Pages with FAQ schema appear in 10.5% of cited pages. Nearly 80% of pages cited by ChatGPT include lists to structure key information.

BrightEdge data adds supporting evidence: sites implementing structured data and FAQ blocks saw a 44% increase in AI search citations. Pages updated within 60 days are 1.9x more likely to appear in AI answers. Websites with author schema are 3x more likely to appear.

The argument: make your content easy for AI to parse and extract, and citations follow regardless of brand strength.

The earned media camp

AirOps found 85% of brand mentions came from third-party pages, not owned domains. Brands are 6.5x more likely to be cited through third-party sources. AirOps' 2026 State of AI Search report reinforced this: about 48% of citations come from community platforms like Reddit and YouTube.

Omniscient Digital's analysis of 23,387 citations using Peec AI broke this down further for branded queries specifically: earned media accounts for 48% of all citations, commercial brand content makes up 30%, and owned brand content holds just 23%. The implication is that when someone asks an LLM about your brand, most of what it references comes from outside your website.

Stacker showed earned media distribution increases AI citations by up to 325%. SE Ranking found review platform presence (G2, Trustpilot, Capterra) gives 3x higher ChatGPT citation rates. Domains with significant Reddit and Quora presence have approximately 4x higher citation rates. Sites with over 32K referring domains are 3.5x more likely to be cited by ChatGPT than those with up to 200 referring domains.

The argument: AI systems trust what others say about you, not what you say about yourself.

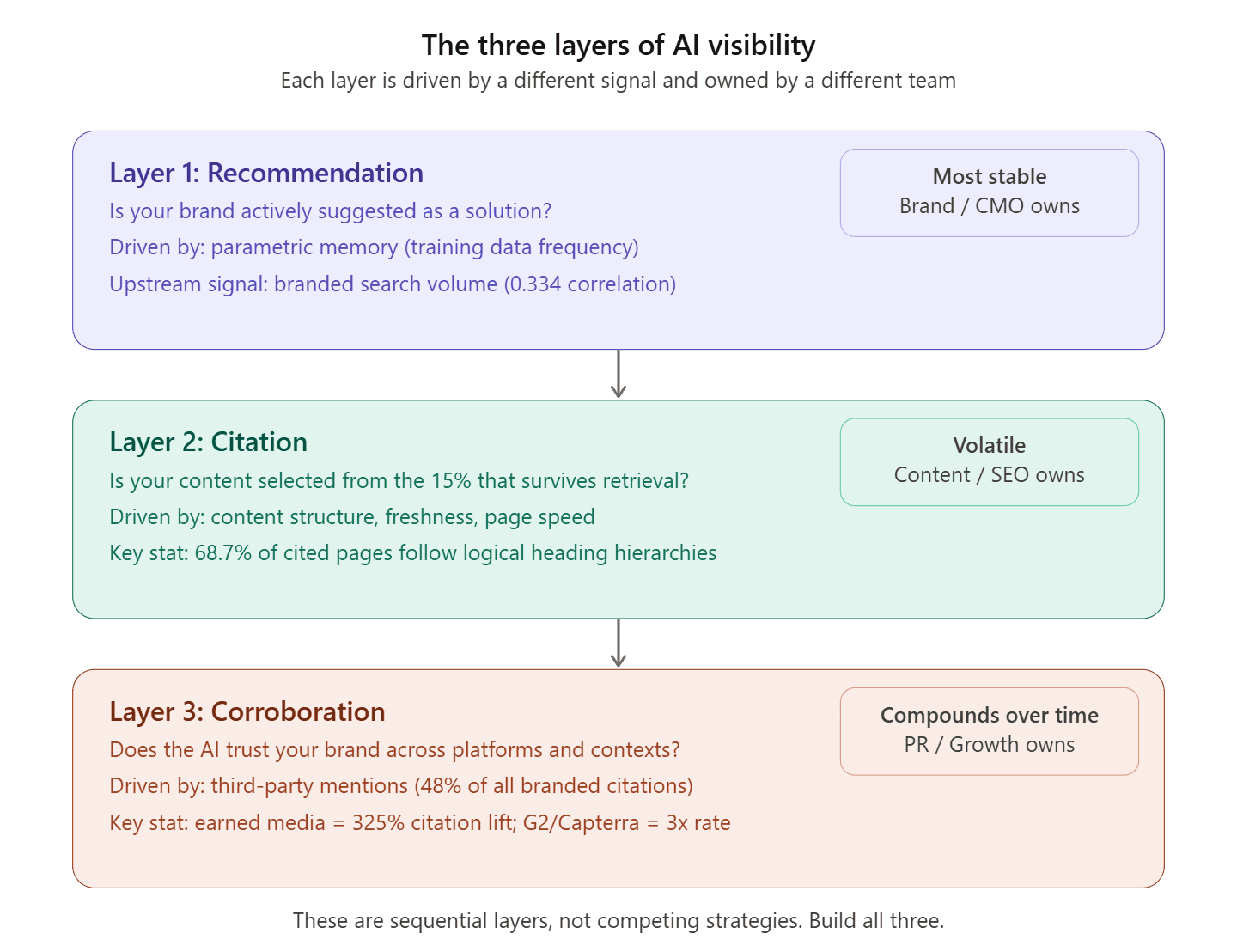

Our position

All three camps are partially right. The error is treating them as competing strategies when they are actually sequential layers.

Brand authority determines whether the LLM recommends you at all (parametric memory). Content extractability determines whether your content gets selected from the retrieval set (only 15% of retrieved pages make the cut). Earned media determines whether the AI has enough independent corroboration to trust your brand across different contexts and platforms.

The companies achieving consistent AI visibility are not choosing one of these three levers. They are building all three simultaneously, while measuring the right signals at each layer. That is the strategic shift this research demands: from tracking citation outputs (which are noisy and unreliable) to building the upstream inputs that compound across every AI platform.

What Informed B2B Brands Are Doing Instead

The research does not say "stop measuring AI visibility." It says "stop measuring it the way most tools measure it." Here is what the data supports.

Replace ranking position with visibility percentage

SparkToro's data is clear: ranking position is noise, but visibility percentage (frequency of appearance across 60-100+ prompt runs) is a statistically valid signal. The top brands in each category showed stable visibility percentages (55-77%) even though individual list composition changed every time.

Your measurement system should report: "Our brand appears in 62% of category-relevant AI responses across three platforms, up from 48% last quarter." It should not report: "We rank #3 on ChatGPT."
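A report like that can be produced from a plain log of prompt runs. The sketch below is a minimal version, assuming each run has already been reduced to a platform name and the set of brands named in the response. The brand names and the record layout are hypothetical.

```python
from collections import defaultdict


def visibility_by_platform(runs: list[tuple[str, set[str]]], brand: str) -> dict[str, float]:
    """Share of runs on each platform in which the brand appeared."""
    totals: dict[str, int] = defaultdict(int)
    hits: dict[str, int] = defaultdict(int)
    for platform, brands in runs:
        totals[platform] += 1
        if brand in brands:
            hits[platform] += 1
    return {platform: hits[platform] / totals[platform] for platform in totals}


# Hypothetical run log; a real one would hold 60+ runs per query per platform.
run_log = [
    ("chatgpt", {"Acme", "Globex"}),
    ("chatgpt", {"Globex"}),
    ("chatgpt", {"Acme", "Initech"}),
    ("perplexity", {"Globex", "Initech"}),
    ("perplexity", {"Acme"}),
]

for platform, rate in visibility_by_platform(run_log, "Acme").items():
    print(f"{platform}: Acme appears in {rate:.0%} of runs")
```

Note that list position never enters the calculation. Only presence or absence per run is counted, which is the part of the signal SparkToro found to be stable.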

Separate citations, mentions, and recommendations

These are three distinct outcomes driven by different mechanisms:

Citations (URL linked as a source): Driven by real-time retrieval. Sensitive to content structure, freshness, and page speed. Volatile. Your content team owns this.

Mentions (brand named in the response text, no link): Driven by a mix of parametric memory and retrieval. More stable than citations but still variable. Your brand and PR teams own this.

Recommendations (brand actively suggested as a solution): Driven primarily by parametric memory (training data frequency) and corroborated by third-party sources. The most valuable and the most stable. Your earned media and brand teams own this.

Ghost citations (cited but not recommended) are a warning sign, not a success metric. If your citation count is rising but your recommendation rate is flat, your content is being used as reference material for competitors.
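The three outcomes can be separated with a rough first-pass script before any manual review. The sketch below is deliberately crude: the cue phrases are a hypothetical stand-in for a real recommendation classifier, the brand names and URLs are invented, and a ghost citation is flagged here as "URL cited, brand never named," following Seer's description.

```python
import re
from dataclasses import dataclass
from urllib.parse import urlparse

# Hypothetical cue phrases; a real audit would rely on human review or a tuned classifier.
RECOMMEND_CUES = ("recommend", "top pick", "best choice", "best option")


@dataclass
class Outcome:
    cited: bool        # brand URL appears among the sources
    mentioned: bool    # brand name appears in the response text
    recommended: bool  # brand named in a sentence containing a recommendation cue

    @property
    def ghost_citation(self) -> bool:
        return self.cited and not self.mentioned


def classify(response_text: str, source_urls: list[str], brand: str, brand_domain: str) -> Outcome:
    hosts = [(urlparse(url).hostname or "") for url in source_urls]
    cited = any(h == brand_domain or h.endswith("." + brand_domain) for h in hosts)

    brand_pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    mentioned = brand_pattern.search(response_text) is not None

    sentences = re.split(r"(?<=[.!?])\s+", response_text)
    recommended = any(
        brand_pattern.search(s) and any(cue in s.lower() for cue in RECOMMEND_CUES)
        for s in sentences
    )
    return Outcome(cited, mentioned, recommended)


text = "For reporting, Globex is the top pick. Benchmarks suggest most teams adopt analytics within 90 days."
urls = ["https://www.acme.com/blog/benchmarks", "https://globex.com/"]
print(classify(text, urls, "Acme", "acme.com"))
print(classify(text, urls, "Globex", "globex.com"))
```

In this invented example Acme's blog supplies the benchmark while Globex gets the recommendation, which is exactly the pattern a rising citation count can hide.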

Measure across platforms, not on one

With only 11% cross-platform overlap, a single-platform score is misleading. Superlines found the same brand can see 615x citation volume difference between Grok and Claude. At minimum, track ChatGPT, Google AI (Overviews and Mode), and Perplexity separately. Each platform has distinct source preferences (Profound 680M citation dataset):

ChatGPT favors Wikipedia (7.8% of all citations, 47.9% of top-10 share) and increasingly LinkedIn

Perplexity favors Reddit (6.6% of total citations, 46.7% of top-10 share) and community-driven content

Google AI Overviews distribute across Reddit (2.2%), YouTube (1.9%), Quora (1.5%), and LinkedIn (1.3%)

Google Gemini shows strong preference for Medium and first-party websites

All platforms favor content published in the last two years (85% of AI Overview citations, per Seer Interactive)

Invest in what compounds

The three upstream investments that every study points to:

Branded search volume: The strongest single predictor of whether an LLM recommends your brand. This is not a new insight. It is the oldest insight in marketing, newly validated by AI research: people who know your name search for your name, and those searches become the training signal that AI systems use to decide who to recommend.

Earned media footprint: Third-party mentions on review platforms (G2, Capterra, Trustpilot), industry publications, Reddit discussions, and LinkedIn thought leadership. Brands with review platform profiles have 3x higher ChatGPT citation rates. Brands with Reddit and Quora presence have 4x higher citation rates. Earned media distribution increases AI citations by up to 325%.

Content extractability: Structured, front-loaded content with clear headings, Q&A formatting, and definitive assertions. 44.2% of all LLM citations pull from the first 30% of content. Content in the bottom 10% of a page earns just 2.4 to 4.4% of citations. Articles between 800 and 1,200 words with clear structure outperform both thin content and unfocused long-form. AirOps' 2026 State of AI Search report found that 68.7% of pages cited in ChatGPT follow logical heading hierarchies (H1, H2, H3 in sequence), that pages combining schema markup with list formatting show 2.8x higher citation rates, and that using three or more schema types correlates with a 13% higher citation likelihood. Freshness is now table stakes: pages that go more than three months without an update are over 3x more likely to lose visibility (AirOps), and GenOptima's monitoring shows citation performance begins declining after just four to five days without content updates. The practical minimum is quarterly content refreshes, with weekly publishing cadence for competitive categories.
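The quarterly refresh rule is simple to automate. The sketch below flags pages whose last update is older than a cutoff, assuming you can export a last-updated date per URL from your CMS. The 90-day default is our approximation of "three months," and the URLs and dates are hypothetical.

```python
from datetime import date


def stale_pages(last_updated: dict[str, date], today: date, max_age_days: int = 90) -> list[str]:
    """Return URLs whose last update is more than max_age_days before today."""
    return sorted(
        url for url, updated in last_updated.items()
        if (today - updated).days > max_age_days
    )


# Hypothetical export of last-updated dates for pages that currently earn citations.
pages = {
    "/blog/pricing-benchmarks": date(2025, 12, 15),
    "/guides/onboarding-checklist": date(2026, 3, 10),
    "/blog/integration-overview": date(2025, 9, 1),
}

for url in stale_pages(pages, today=date(2026, 4, 1)):
    print(f"Refresh due: {url}")
```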

The Measurement Framework That Works

Based on the synthesis of the 25+ research efforts reviewed, here is the measurement approach supported by the data.

| Layer | What to Track | How to Track | Frequency | Owner |
|---|---|---|---|---|
| Recommendation rate | How often your brand is actively suggested | 60-100 prompt runs per query set, multi-platform | Monthly | Brand / CMO |
| Mention rate | How often your brand is named (with or without links) | Same prompt methodology, separate from citations | Monthly | Brand / PR |
| Citation rate | How often your URLs appear as sources | Multi-platform tracking with fan-out query coverage | Monthly | Content / SEO |
| "Used" vs. "Cited" split | Whether your content informed the answer (invisible) or was visibly attributed | Peec AI or equivalent tool that distinguishes usage from citation | Monthly | GEO / Content |
| Citation source type | Whether citations come from earned media, owned content, or commercial brand content | Categorize citations by source type (Omniscient found 48% earned, 30% commercial, 23% owned) | Quarterly | PR / Content |
| Citation accuracy | Whether citations correctly represent your brand | Sample-based manual audit (20-30 citations reviewed for sentiment and accuracy) | Quarterly | Brand / Comms |
| Competitive share of voice | Your appearance rate vs. competitors on matched prompts | Head-to-head prompt testing, 60+ runs per competitor | Quarterly | Strategy |
| Platform distribution | Which platforms cite/mention you and which do not | Platform-specific tracking (note: 615x variance between platforms for same brand) | Monthly | GEO / Strategy |
| Content freshness score | Whether your cited content is within the recency window | Track last-updated dates for cited pages; quarterly refresh minimum (AirOps: 3x citation loss if stale) | Monthly | Content |
| Upstream signals | Branded search volume, earned media mentions, review presence | Google Trends, media monitoring, review platform tracking | Monthly | Growth / Brand |

This is more complex than a single "AI visibility score." It should be. The phenomenon being measured is more complex than traditional search rankings. Any tool that reduces this to a single number is discarding the signal that matters.

Research Methods

This study synthesizes published findings from 25+ major research efforts. No original data was collected. All sources are cited with links to original publications.

Primary sources: SparkToro & Gumshoe (2,961 prompts, 600 volunteers), Seer Interactive (541,213 responses, 20 brands), AirOps fan-out study (548,534 pages, 15,000 prompts), AirOps 2026 State of AI Search report, Nature Communications peer-reviewed study (800 questions, 58,000 pairs), Ahrefs (millions of SERPs), MIT ICLR 2026, Kevin Indig/Growth Memo (1.2M ChatGPT responses), Semrush LinkedIn study (325,000 prompts, 89,000 URLs), Semrush 13-week domain study (230,000+ prompts), Profound citation patterns (680M citations), Profound LinkedIn analysis (1.4M citations), Tinuiti Q1 2026 (9 verticals, 7 platforms), Omniscient Digital/Peec AI (23,387 citations), Writesonic AIO study (1M+ AI Overviews), Superlines (60+ data points, 615x platform variance), Similarweb GenAI Brand Visibility Index (113 brands, 6 sectors), GenOptima freshness monitoring, Conductor 2026 benchmarks, SE Ranking (2.3M pages), Authoritas, Digital Bloom, Princeton/Georgia Tech SIGKDD 2024, Stacker, and BrightEdge.

Limitations

All third-party citation data is modeled and estimated, not directly measured from platform internals. Several sources (AirOps, Profound, Seer, Semrush, Peec AI, Writesonic, Superlines, GenOptima) sell AI tracking tools or services, creating potential incentive bias. The field evolves at extreme speed; findings from late 2025 may already reflect outdated platform behaviors. No study has yet demonstrated a reliable causal link between citation metrics and revenue or pipeline outcomes. Profound and Peec AI data relies on synthetic prompt baskets and API-based queries, which may differ from real user interface behavior.

About This Research

This study was produced by Passionfruit Labs. Passionfruit is a B2B SEO, GEO, and AI visibility agency that helps SaaS companies build measurable presence across traditional search and AI discovery channels. We sell AI visibility tracking and optimization services. It is in our financial interest to argue that AI visibility measurement requires sophisticated, multi-layered approaches rather than simple citation counting tools.

We disclose this because the research demands it. Every study cited here is publicly accessible. Every methodology is described. We encourage independent verification and welcome disagreement.

Key Sources

SparkToro & Gumshoe.ai (Jan 2026). AI Brand Recommendation Inconsistency Study.

Seer Interactive (Mar 2026). LLM Ghost Citations: Why Your Content Is Working and Your Brand Isn't.

AirOps (Mar 2026). The Influence of Retrieval, Fan-out, and Google SERPs on ChatGPT Citations.

AirOps (2026). The 2026 State of AI Search: How Modern Brands Stay Visible.

Venkit et al. (Apr 2025). SourceCheckup. Nature Communications.

Huang, Shen, Wei, Broderick (Feb 2026). LLM Ranking Platform Fragility. MIT / ICLR 2026.

Indig, K. (Mar 2026). The Science of How AI Picks Its Sources. Growth Memo / Gauge.

Semrush (Jan-Feb 2026). LinkedIn AI Visibility Study: 89,000 URLs Analyzed.

Semrush (Oct-Dec 2025). The Most-Cited Domains in AI: A 3-Month Study.

Profound (Aug 2024-Jun 2025). AI Platform Citation Patterns: 680M Citations.

Profound (Nov 2025-Feb 2026). LinkedIn: The Most-Cited Domain for Professional Queries.

Tinuiti (Q1 2026). AI Citation Trends Report: 9 Verticals, 7 Platforms.

Omniscient Digital / Peec AI (Jan 2026). How LLMs Source Brand Information: 23,387 Citations.

Writesonic (Aug 2025). AI Citations From SERP Results: 1M+ AI Overviews Analyzed.

Superlines (Mar 2026). AI Search Statistics 2026: 60+ Data Points.

Similarweb (Jan 2026). 2026 GenAI Brand Visibility Index: 113 Brands, 6 Sectors.

GenOptima (Mar 2026). AI Brand Visibility Report: Content Freshness Decay Data.

Conductor (2026). AI Search Benchmarks: Referral Traffic and Growth Rates.

Ahrefs (Nov-Dec 2025). AI Overview Frequency, CTR, and Citation Overlap.

SE Ranking (Nov 2025). Domain Authority, Review Platforms, and ChatGPT Citations.

Authoritas (Dec 2025-Feb 2026). AI Visibility Concentration Tracking.

Digital Bloom (Dec 2025). 2025 AI Citation and LLM Visibility Report.

Aggarwal et al. (2024). Generative Engine Optimization. Princeton/Georgia Tech, SIGKDD 2024.

Stacker (Dec 2025). Earned Media Distribution and AI Citation Lift.

BrightEdge (2025-2026). Structured Data, Author Schema, and AI Citation Rates.

Seer Interactive (Jan 2026). Using GEO to Address Brand Misconceptions.

Lewis et al. (2020). Retrieval-Augmented Generation. arXiv:2005.11401.

What To Do Next

This research exists to help you make better decisions about where your AI visibility budget goes. Here are three ways to act on it, depending on where you are today.

1. Audit your current AI visibility measurement

Before changing strategy, find out whether your current data is trustworthy. Run this 30-minute check:

Pick your top 5 category-level prompts (the questions your buyers actually ask AI, not branded queries). Run each prompt 10 times on ChatGPT and 10 times on Perplexity. Record which brands appear, in what order, and whether your brand is cited, mentioned, or recommended.

If the results vary dramatically across runs, your tracking vendor's single-snapshot data is not telling you what you think it is. If your brand is cited but never recommended, you have a ghost citation problem. If you appear on one platform but not the other, you have a platform coverage gap.
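Once you have recorded the runs, "vary dramatically" can be turned into two numbers: how often two runs of the same prompt name the same set of brands, and how often they name them in the same order. The sketch below compares every pair of runs. It is our own simplified check, not SparkToro's methodology, and the brand lists are hypothetical.

```python
from itertools import combinations


def repeat_rates(runs: list[list[str]]) -> tuple[float, float]:
    """Return (share of run pairs with the same brand set, share with the same ordered list)."""
    pairs = list(combinations(runs, 2))
    if not pairs:
        raise ValueError("need at least two runs")
    same_set = sum(set(a) == set(b) for a, b in pairs)
    same_order = sum(a == b for a, b in pairs)
    return same_set / len(pairs), same_order / len(pairs)


# Hypothetical brand lists from four runs of one prompt on one platform.
recorded_runs = [
    ["Acme", "Globex", "Initech"],
    ["Globex", "Acme", "Initech"],
    ["Acme", "Globex", "Initech"],
    ["Acme", "Umbrella"],
]

set_rate, order_rate = repeat_rates(recorded_runs)
print(f"Same brands in {set_rate:.0%} of run pairs, same order in {order_rate:.0%}")
```

If the same-order rate is near zero on your own prompts, any single ranking position in your vendor's dashboard is a snapshot, not a measurement.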

We built a free prompt testing template for this exercise. It includes 20 pre-written B2B SaaS category prompts, a scoring rubric for citation vs. mention vs. recommendation, and a comparison sheet for multi-platform results. Request it at getpassionfruit.com/contact-us.

2. Get a Passionfruit AI Visibility Assessment

If the audit confirms gaps, we can go deeper. Passionfruit runs a full AI visibility assessment across ChatGPT, Perplexity, Gemini, Google AI Overviews, and AI Mode for your brand and up to three competitors. The assessment covers:

Visibility percentage across 60+ prompt runs per query (the statistical minimum SparkToro's research demands)

Citation, mention, and recommendation rates tracked separately (the distinction Seer's ghost citation research proved is essential)

Platform-by-platform source analysis (which third-party sites are driving your AI presence, following the Omniscient/Peec AI methodology)

Content extractability scoring based on the structural signals AirOps' research identified (heading hierarchy, schema usage, freshness, front-loading)

A prioritized action plan mapping to the three upstream layers: brand authority, content extractability, and earned media footprint

This is not a dashboard. It is a diagnostic with specific recommendations your team can execute in 90 days. Talk to an expert →

3. Subscribe to Passionfruit Labs research

This study is the second in a series. The first, "Branded Search Is the Most Undervalued Signal in SaaS Marketing," examined why branded search volume is structurally protected from AI disruption. Future research will cover:

The Ghost Citation Playbook: How to convert citations into recommendations (original data from Passionfruit client engagements)

Platform-Specific GEO Benchmarks for B2B SaaS: What "good" looks like on each AI platform for companies at $10M-$500M ARR

The Earned Media Multiplier: Quantifying the AI citation lift from specific PR, review, and Reddit strategies

Each study follows the same methodology: named sources, transparent data, disclosed biases, and actionable frameworks. No gated PDFs. No email-wall nonsense. Published on the Passionfruit blog for the industry to use, challenge, and build on.

Passionfruit Labs | April 2026

This research was written by [Your Name], [Your Title] at Passionfruit. For questions, corrections, or to discuss your AI visibility strategy, reach us at getpassionfruit.com/contact-us.

If this research changed how you think about AI measurement, share it with a peer who is still tracking AI ranking positions. That is the fastest way to raise the bar for the entire industry.