Your Brand Shows Up Differently on Every AI Platform. Here's What 11 Million Citations Tell Us About Your Content Strategy

We tracked 11.2 million AI citations across ChatGPT, Claude, Gemini, and Perplexity over seven months. The biggest surprise was not how AI search works. It was how differently each platform decides what to show.

Passionfruit Research | March 2026

The short version

We tracked 22,363 queries across four major AI platforms every month for seven months and counted every source the platforms cited in their answers: 11.2 million citations in total. Here is what we found.

AI platforms mostly agree on which topics deserve sources in their answers, but they disagree wildly on how many sources to include. 78% of queries generated citations on three or more platforms, but when all four platforms answered the same question, the platform that cited the most included 4.4x more sources than the one that cited the least. Your brand could be heavily featured on one platform and nearly invisible on another for the exact same question.

Perplexity cites sources on the most queries. Claude cites on fewer queries but includes far more sources when it does. Perplexity included sources in its answers for 95% of all queries we tracked, while Claude only did so for 64%. But when Claude did cite sources, it averaged 275 per query compared to Perplexity's 209. These are two very different games, and your content strategy needs to account for both.

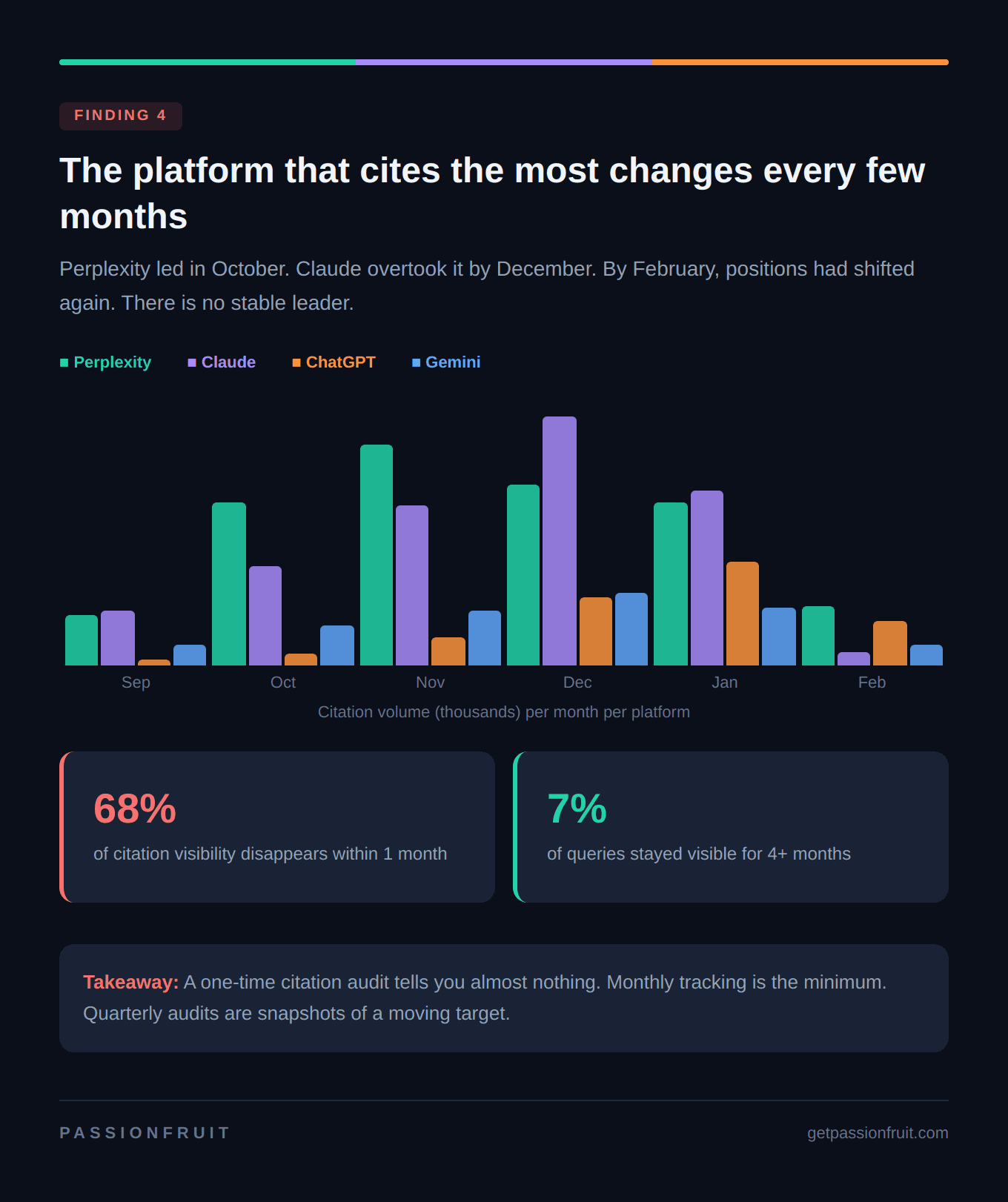

Most of the time, your AI visibility disappears within a month. 68% of queries that generated citations in one month did not generate them the next month. Only 7% of queries stayed visible for four months or longer. If you measured your AI search performance in January and assumed it would hold through March, you were likely wrong.

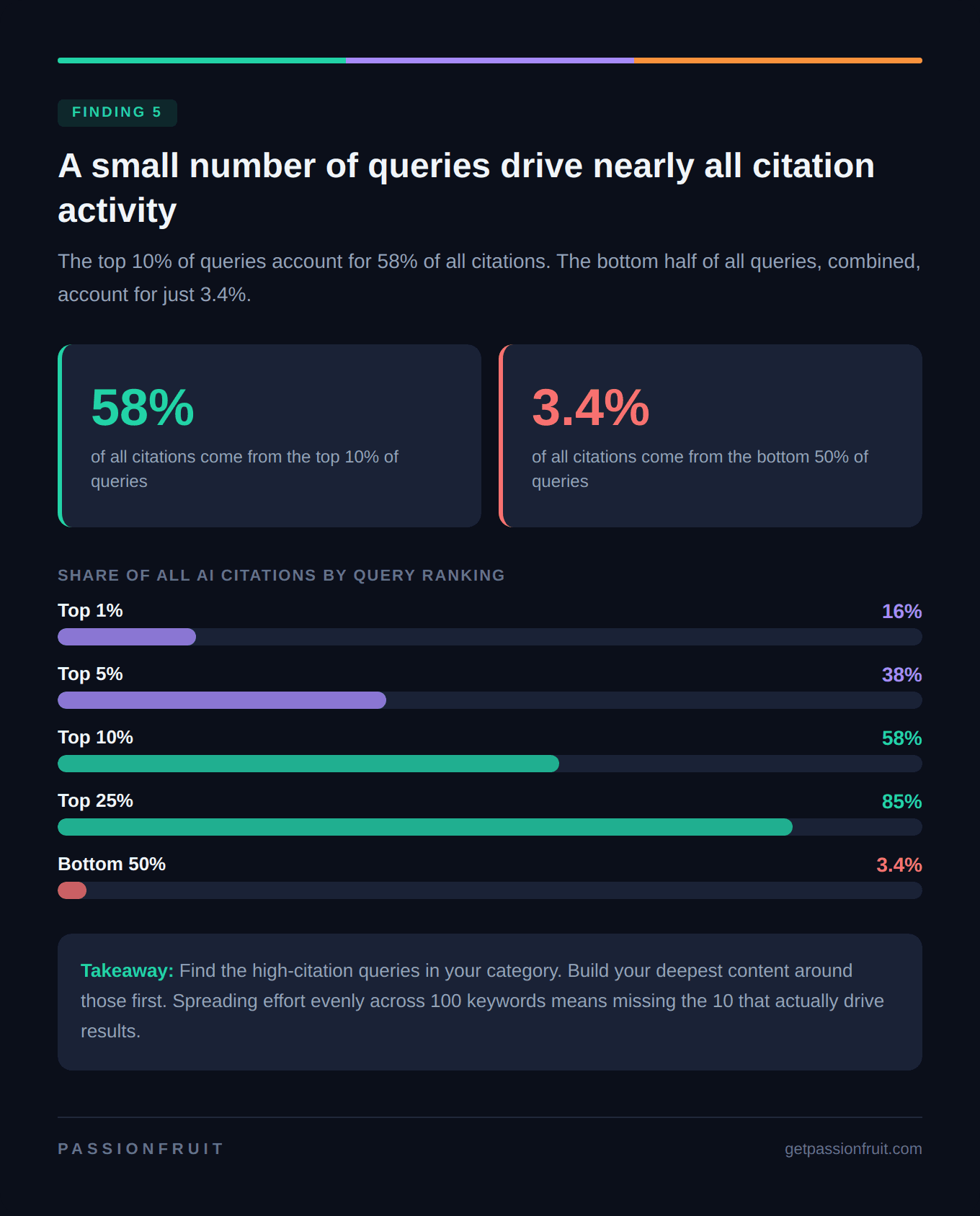

A small number of high-value queries drive almost all the citation activity. The top 10% of queries accounted for 58% of all citations across every platform. The bottom half of queries, combined, accounted for just 3.4%. Not every keyword is worth optimizing for AI search. The opportunity is concentrated, and spreading your effort thin means missing it.

Why marketing teams should care about this

When someone asks ChatGPT "what's the best CRM for small businesses" or searches Perplexity for "how to reduce cart abandonment," these AI platforms do not simply pull up a list of links the way Google does. They run a much more involved process behind the scenes, and understanding that process is the key to showing up in AI answers.

How AI platforms actually build their answers

Here is the part most marketing teams have not caught up with yet.

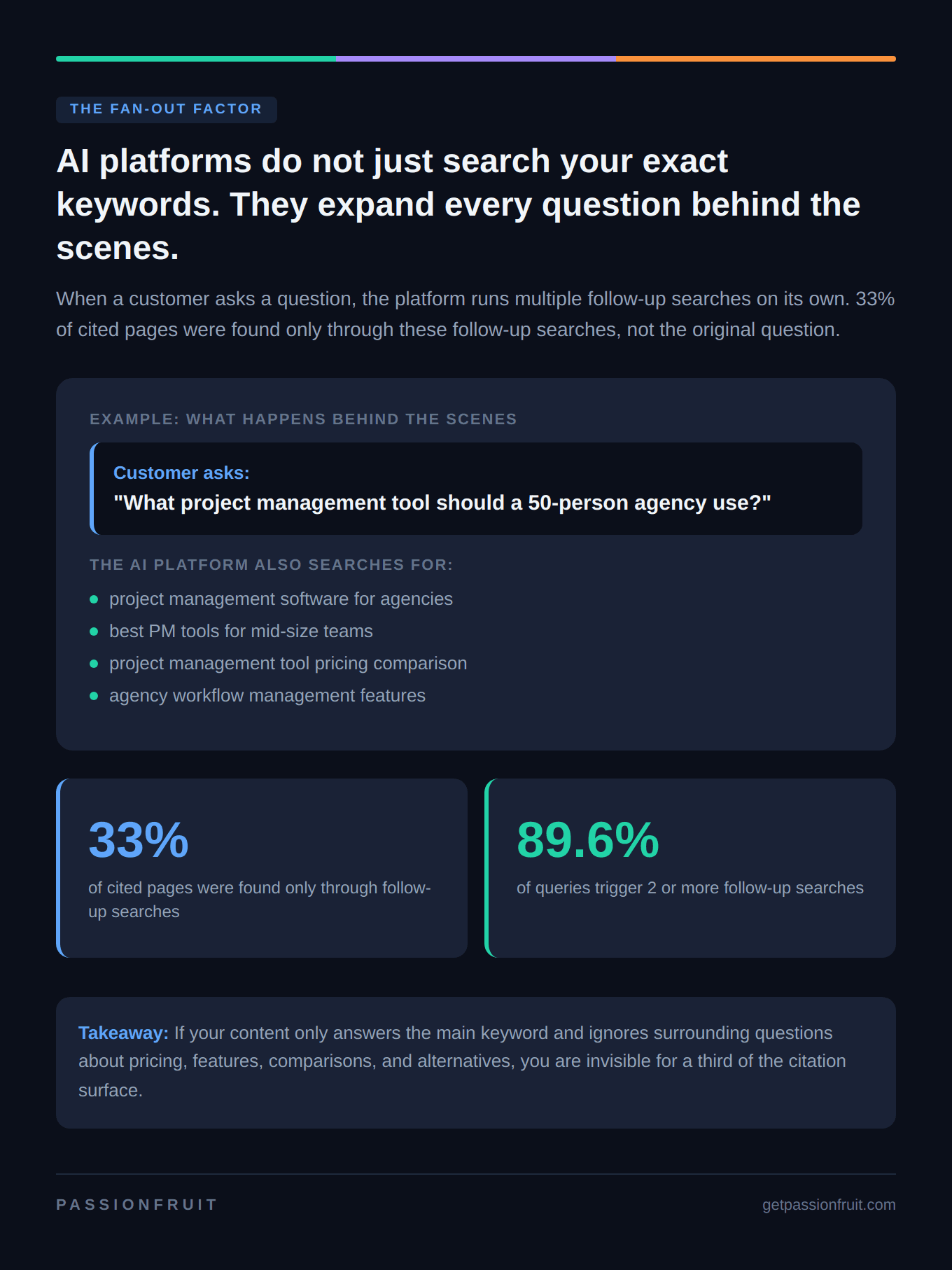

When you type a question into ChatGPT or Perplexity, the platform does not just search for your exact words. It breaks your question apart and runs multiple follow-up searches on its own. The industry calls this "query fan-out," but the concept is simpler than the name suggests.

Say a potential customer asks Perplexity: "What project management tool should a 50-person agency use?"

The platform does not just search for that exact phrase. It might also search for "project management software for agencies," "best PM tools for mid-size teams," "project management tool pricing comparison," and "agency workflow management features." Each of those follow-up searches pulls in different websites, different brands, and different content. The platform then reads through everything it found, picks the sources it trusts most, and weaves them into a single answer with citations.

And here is where our research adds a new layer: each platform runs this process differently. ChatGPT, Claude, Gemini, and Perplexity do not fan out the same way, do not retrieve the same pages, and do not pick the same sources to cite. A brand can be well-covered in Claude's follow-up searches and completely absent from Perplexity's, or vice versa.

That is the gap this study is designed to measure.

How we ran this study

We tracked citation activity across four AI platforms (ChatGPT, Claude, Gemini, and Perplexity) every month from August 2025 through February 2026.

In total, we recorded 11.2 million individual citations over the seven-month period.

We did not filter or exclude any queries based on topic. The goal was to understand how AI citation behavior works in aggregate, across the full range of questions real users actually ask.

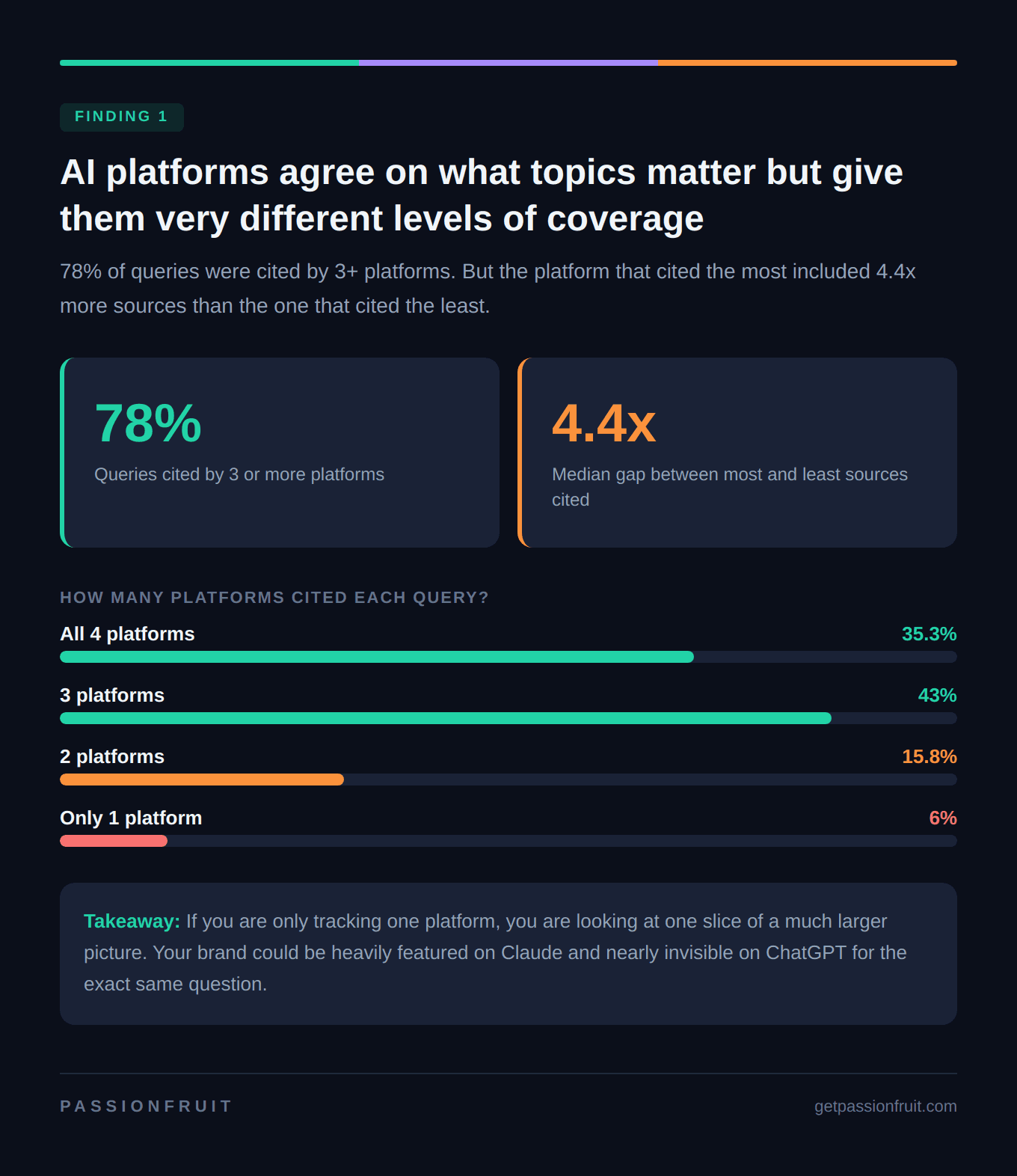

Finding 1: All four platforms agree on what topics matter, but they give them very different levels of coverage

The first thing we wanted to know was straightforward: when you ask the same question on different AI platforms, do they cite the same kinds of sources?

Broadly, yes. 78% of queries in our dataset were cited by three or more platforms, and 35% were cited by all four. If a topic is worth citing on one platform, it is almost certainly worth citing on the others too. The platforms are reading from a broadly similar understanding of what questions deserve sourced answers.

But that is where the agreement ends.

When we looked at the queries where all four platforms generated citations, the gap between the most generous and the least generous platform was enormous. The platform that cited the most included a median of 4.4x more sources than the platform that cited the least, and for one in ten queries that gap stretched to 12.7x or higher.
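For teams that want to reproduce this kind of comparison on their own tracking data, here is a minimal Python sketch of the spread calculation: for each query cited by every platform, divide the highest citation count by the lowest, then take the median. The function name, the data layout, and the sample counts are illustrative assumptions, not the pipeline or data used in this study.

```python
from statistics import median

PLATFORMS = ("chatgpt", "claude", "gemini", "perplexity")


def spread_ratios(counts_by_query, platforms=PLATFORMS):
    """Max/min citation ratio for each query that every platform cited."""
    ratios = []
    for per_platform in counts_by_query.values():
        counts = [per_platform.get(name, 0) for name in platforms]
        # Only compare queries where all platforms produced at least one citation.
        if min(counts) > 0:
            ratios.append(max(counts) / min(counts))
    return ratios


# Hypothetical citation counts for illustration only (not study data).
sample = {
    "best crm for small business": {"chatgpt": 10, "claude": 44, "gemini": 12, "perplexity": 30},
    "reduce cart abandonment": {"chatgpt": 5, "claude": 20, "gemini": 8, "perplexity": 15},
    "pm tool for agencies": {"chatgpt": 9, "claude": 0, "gemini": 3, "perplexity": 27},
}

ratios = spread_ratios(sample)
median_ratio = median(ratios)
print(f"{len(ratios)} shared queries, median spread {median_ratio:.1f}x")
```

The third sample query is skipped because one platform cited nothing for it, which mirrors the restriction above to queries where all four platforms generated citations.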

This is not a minor difference in formatting. It means that for the same customer question, your brand might appear in a dozen cited sources on one platform and show up once or not at all on another.

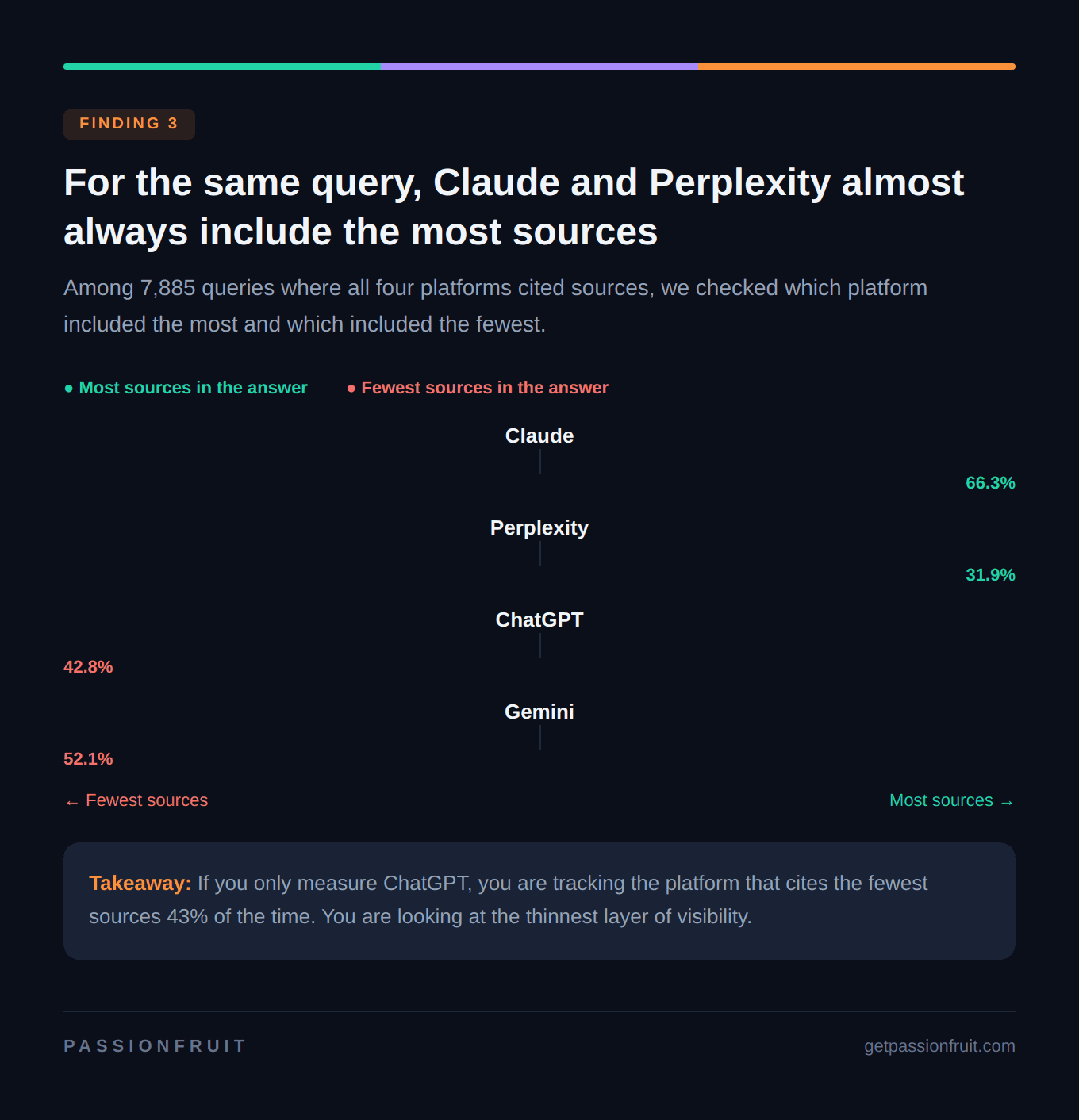

The pattern has a clear direction, too. Claude was the most generous citer for 66% of shared queries, and Perplexity was the most generous for 32%. ChatGPT and Gemini combined were the most generous citer in less than 2% of cases. On the flip side, Gemini was the least generous citer 52% of the time, and ChatGPT was the least generous 43% of the time.

What this means for your team: If you are only tracking your brand's visibility on ChatGPT, you are measuring the platform that cites the fewest sources for a given query almost half the time. You are looking at the thinnest layer of a much deeper citation surface. Cross-platform tracking is not a nice-to-have anymore. It is the only way to see the real picture.

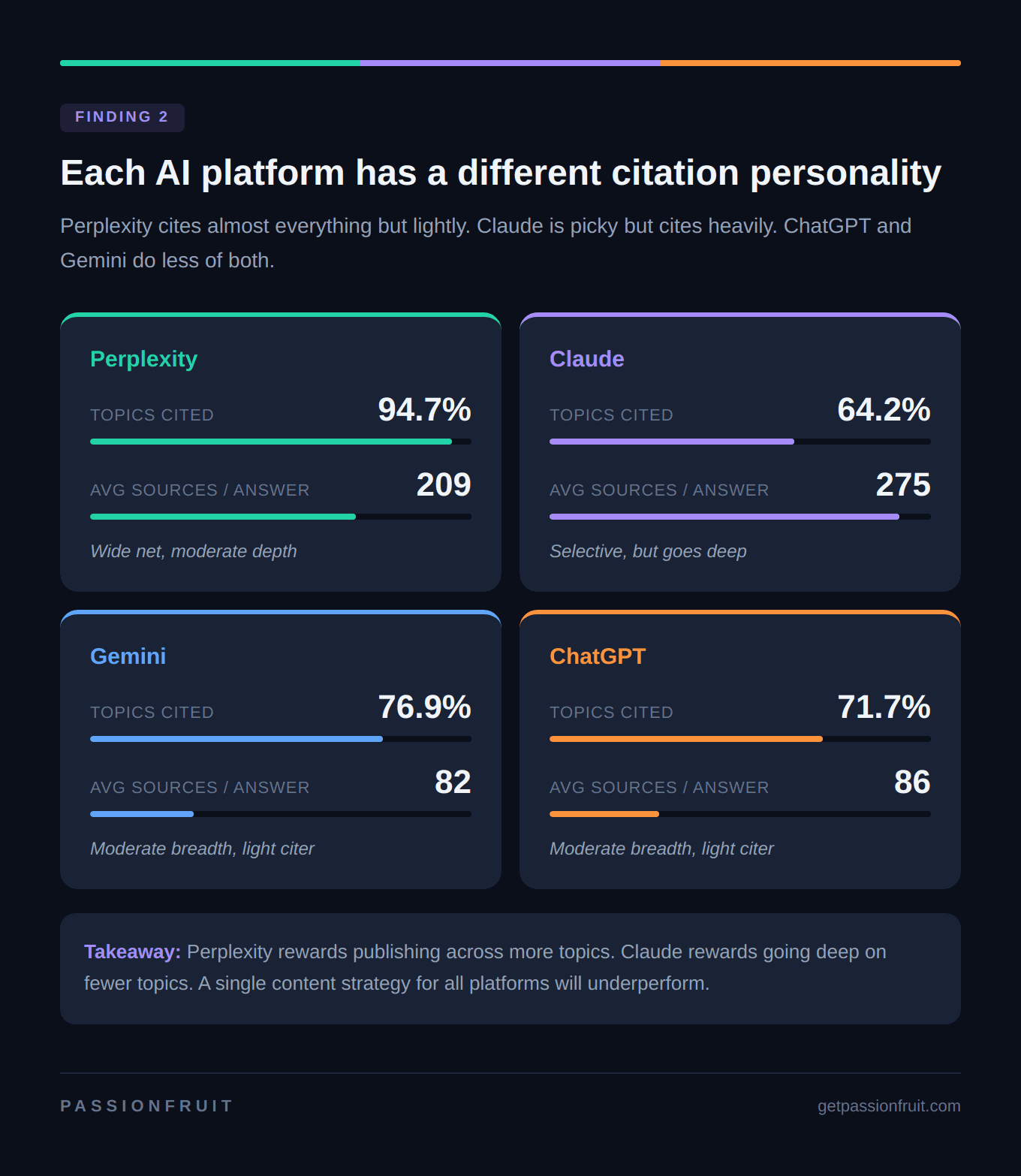

Finding 2: Perplexity and Claude play completely different games, and your strategy needs to account for both

Each platform has what amounts to a citation personality, and no two are alike.

Perplexity is the broadest citer. It generated at least one citation for 95% of all queries in our dataset. If your brand publishes content on a topic, Perplexity is very likely to find it and include it somewhere in its answers. Its average citation count per query is 209, and it behaves like a platform that wants to give users as many relevant sources as possible across a wide range of topics.

Claude is the most selective but the most intense. It only generated citations for 64% of queries, the lowest of any platform, but when it did engage, it averaged 275 citations per query, the highest by far. Claude behaves like a platform that ignores most topics but, when it decides a question is worth answering with sources, goes deep.

ChatGPT and Gemini sit in the middle on coverage but at the bottom on volume. ChatGPT generated citations for 72% of queries with an average of 86 per query. Gemini covered 77% with an average of 82. Both cite a moderate share of topics but include far fewer sources per query than Perplexity or Claude, which means individual brands get a thinner signal from these platforms on any given query.
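As a quick sanity check, the coverage and per-query averages for the four platforms can be multiplied out against the 22,363 queries in the dataset. The sketch below assumes each average is measured per cited query over the full seven months; that reading is our assumption for illustration, and the variable names are hypothetical.

```python
TOTAL_QUERIES = 22_363  # queries tracked in the dataset

# (share of queries with at least one citation, average citations per cited query)
platforms = {
    "Perplexity": (0.95, 209),
    "Claude": (0.64, 275),
    "ChatGPT": (0.72, 86),
    "Gemini": (0.77, 82),
}


def implied_citations(coverage, avg_per_cited_query, total_queries=TOTAL_QUERIES):
    """Citations implied by coverage x query count x average per cited query."""
    return coverage * total_queries * avg_per_cited_query


implied = {name: implied_citations(c, a) for name, (c, a) in platforms.items()}
total = sum(implied.values())

for name, value in sorted(implied.items(), key=lambda item: -item[1]):
    print(f"{name:<11}{value / 1e6:5.2f}M  ({value / total:.0%} of implied total)")
print(f"Total      {total / 1e6:5.2f}M")
```

Under that assumption the four platforms add up to a figure close to the 11.2 million citations recorded, with Perplexity and Claude together accounting for roughly three quarters of it.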

We also measured how much overlap exists between what different platforms choose to cite. Gemini and Perplexity had the highest overlap, with 78% of the topics cited by either platform being cited by both, which makes sense because they are the two broadest citers. Claude and ChatGPT had the lowest overlap at just 44%, meaning they agree on which topics to cite less than half the time, even though both are leading large language models.
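The overlap figure described here (topics cited by both platforms, divided by topics cited by either) is a Jaccard-style ratio. A minimal sketch, assuming you have one set of cited query IDs per platform; the function name and sample IDs are hypothetical.

```python
def topic_overlap(cited_a, cited_b):
    """Share of topics cited by either platform that were cited by both."""
    union = cited_a | cited_b
    if not union:
        return 0.0  # neither platform cited anything
    return len(cited_a & cited_b) / len(union)


# Hypothetical query IDs for illustration only.
gemini_cited = {"q1", "q2", "q3", "q4"}
perplexity_cited = {"q2", "q3", "q4", "q5"}

overlap = topic_overlap(gemini_cited, perplexity_cited)
print(f"Overlap: {overlap:.0%}")
```

Because the denominator is the union, a platform that cites very few topics will pull the ratio down even if everything it cites is also cited by the other platform.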

What this means for your team: The content strategy that works for Perplexity is not the same as the one that works for Claude. Perplexity rewards breadth: publish content across more topics in your category, and you are more likely to earn citations. Claude rewards depth: go deep on a narrower set of high-value topics, and when Claude engages, your brand gets significantly more visibility. ChatGPT and Gemini are somewhere in between. A single-strategy approach that treats all four platforms the same will underperform a strategy that accounts for these differences.

Finding 3: AI citation visibility is not like a ranking you hold

This was the finding that surprised us most.

In traditional SEO, when your page reaches position 3 for a keyword, it tends to stay in that general neighborhood for weeks or months. Rankings move, but they move gradually. Most marketing teams have built their measurement cadence around that stability: check rankings monthly or quarterly, flag anything that drops significantly, and adjust.

AI citation visibility does not work that way.

Across our full dataset, 68% of queries that generated citations in one month did not generate them the following month. Only 25% of queries stayed cited for two to three months. Just 7% lasted four months or longer. And fewer than 50 queries out of 22,363, less than 0.2%, remained cited for six or all seven months.
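If you want to run the same persistence check on your own monthly tracking data, one way is to find each query's longest run of consecutive cited months and bucket the results. The sketch below is an illustration under that assumption (consecutive months, indexed 0 to 6); it is not the exact method used in this study, and the sample data is made up.

```python
from collections import Counter


def longest_streak(months_cited):
    """Longest run of consecutive month indexes in which a query was cited."""
    best = run = 0
    previous = None
    for month in sorted(set(months_cited)):
        run = run + 1 if previous is not None and month == previous + 1 else 1
        best = max(best, run)
        previous = month
    return best


def bucket(streak):
    """Map a streak length to the persistence bands used in the text."""
    if streak < 1:
        raise ValueError("query was never cited")
    if streak == 1:
        return "1 month"
    if streak <= 3:
        return "2-3 months"
    return "4+ months"


# Hypothetical tracking data: query -> month indexes (0 = first month) with citations.
history = {
    "query a": [0],
    "query b": [1, 2],
    "query c": [0, 1, 2, 3, 4],
    "query d": [0, 2, 4],  # cited three times, but never two months in a row
}

distribution = Counter(bucket(longest_streak(months)) for months in history.values())
for band, count in distribution.items():
    print(f"{band}: {count / len(history):.0%} of queries")
```

Note that "query d" lands in the one-month band despite being cited three times, which is the kind of on-again, off-again visibility a single audit would miss.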

This volatility was consistent across platforms. Every platform showed high month-to-month fluctuation, including ChatGPT, which was the most stable of the four but still showed substantial swings.

The platform that cited the most sources also shifted over time. In October 2025, Perplexity was the overall citation leader. By December, Claude had overtaken it. By February 2026, Perplexity was back on top while Claude's volume dropped sharply. There is no stable leader.

What this means for your team: A one-time AI citation audit tells you almost nothing about your ongoing visibility. The brand that looks well-represented in January may be nearly absent by March, not because their content got worse, but because the platforms' citation behavior shifted. Monthly tracking is the minimum cadence that makes sense. Quarterly audits are essentially snapshots of a moving target.

Finding 4: Most of the citation opportunity is concentrated in a small number of high-value queries

Not every query your audience might ask is equally valuable for AI citation visibility, and the data makes the imbalance hard to ignore.

The top 1% of queries in our dataset accounted for 16% of all citation activity. The top 10% accounted for 58%. The top 25% accounted for 85%. Meanwhile, the entire bottom half of queries, combined, produced just 3.4% of all citations.
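The same concentration check is easy to run on your own query list: rank queries by citation count and measure what share of all citations the top slice captures. A minimal sketch with made-up counts; the function name and the numbers are illustrative, not study data.

```python
def top_share(citation_counts, percent):
    """Share of all citations captured by the top `percent` of queries."""
    ranked = sorted(citation_counts, reverse=True)
    if not ranked:
        return 0.0
    # Round the slice size up so the top slice always holds at least one query.
    k = max(1, (len(ranked) * percent + 99) // 100)
    total = sum(ranked)
    return sum(ranked[:k]) / total if total else 0.0


# Hypothetical citation counts for ten queries (illustration only).
counts = [500, 200, 100, 80, 50, 30, 20, 10, 6, 4]

top_10 = top_share(counts, 10)
bottom_half = 1 - top_share(counts, 50)
print(f"Top 10% of queries: {top_10:.0%} of citations")
print(f"Bottom 50% of queries: {bottom_half:.0%} of citations")
```

Running this per platform as well as overall shows whether the concentration in your category is driven by one platform or holds across all of them.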

This concentration held across every platform individually. Whether we looked at Perplexity, Claude, ChatGPT, or Gemini, the top 10% of queries consistently drove somewhere between 49% and 58% of that platform's total citations. The pattern is not a quirk of one platform. It is a structural feature of how AI citation works.

Think about what that means practically. If your team is spreading content effort evenly across 100 target queries for AI search, roughly 10 of those queries are generating well over half of all citation activity, and the bottom 50 are contributing almost nothing to your overall visibility. The math favors finding and owning those top queries rather than trying to cover everything.

What this means for your team: Before you build a content calendar for AI visibility, identify the high-citation queries in your category. These are the queries where the platforms consistently include the most sources in their answers, across platforms and across months. Build your deepest, most authoritative content around those queries first. Long-tail queries still matter for traditional SEO and for building topical authority, but for the specific goal of earning AI citations, concentration beats coverage.

What this changes about how you plan content for AI search

Everything we found points to four shifts that marketing teams need to make.

Stop tracking one platform and assuming it represents the whole picture. Perplexity and Claude generate the most citations but in completely different ways: one rewards breadth, the other rewards depth. ChatGPT and Gemini cite fewer sources per answer, making them easy to overlook even though they serve a large share of users. If you are only measuring one platform, you are flying with one eye closed.

Treat AI visibility as a monthly signal, not a quarterly position. Two-thirds of citation visibility disappears within a month, and the platform that leads one month may trail the next. Measure monthly at minimum, and do not assume that a single good report means your visibility is locked in.

Focus your AI content investment on the queries that actually drive citations. The top 10% of queries generate nearly 60% of all citation activity. Identify those queries in your category, build comprehensive content around them, and keep that content fresh. Spreading resources evenly across every possible query leaves citation value on the table.

Account for how AI platforms expand your customers' questions behind the scenes. When someone asks an AI platform a question, the platform does not just search for those exact words. It generates follow-up searches around pricing, features, comparisons, and related topics, and a third of citation opportunities come from those follow-up searches alone. If your content only covers the primary keyword and does not address the surrounding questions, you are invisible for a significant share of the citation surface. Build content that answers not just the main question but the related questions your customers would naturally ask next.

About this research

This analysis was conducted by Passionfruit, an AI-native SEO and generative engine optimization agency. The underlying dataset covers 22,363 queries tracked across four AI platforms over seven months, totaling 11.2 million citation events.

For questions about the methodology or to discuss how these findings apply to your brand's AI search strategy, reach out to our team.